Introduction

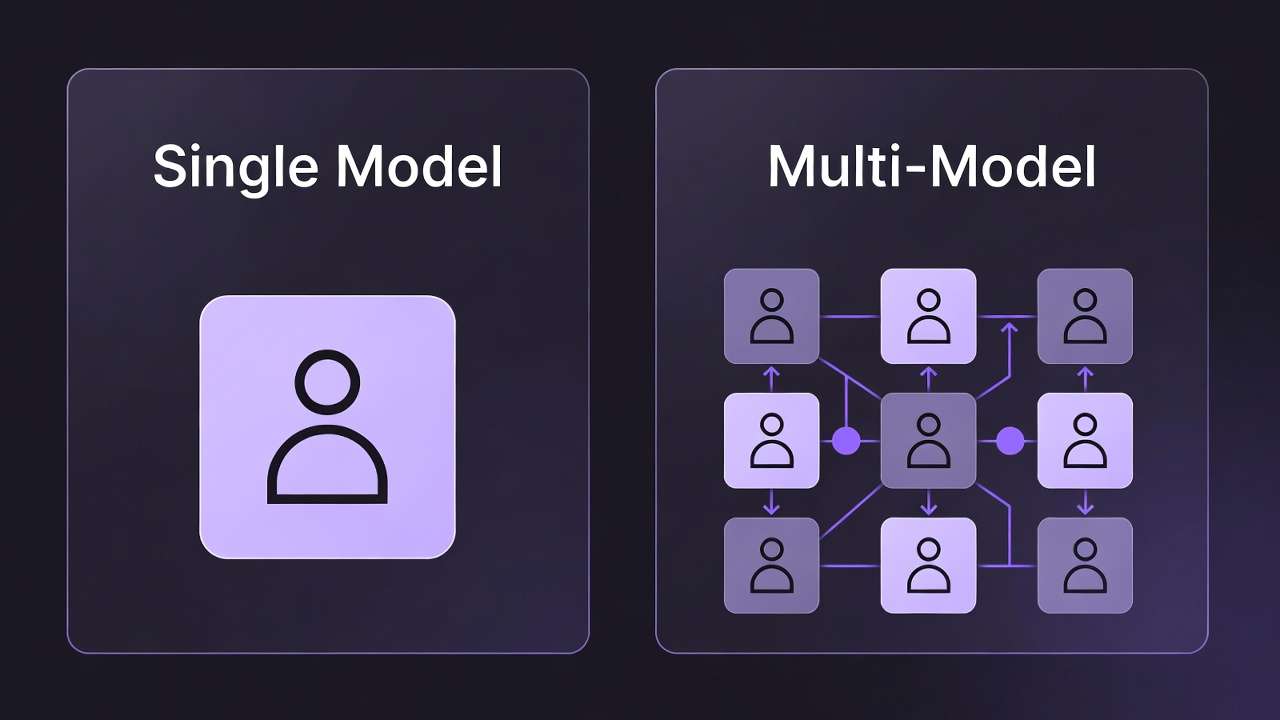

Creators drawn to endless AI model variety often end up in a cycle of experimentation, where the promise of "47+ models at your fingertips" dissolves into fragmented sessions of reformatting prompts and juggling incompatible outputs. Evidence from real workflows points the other way: mastering a single model's quirks can deliver more reliable results for targeted projects than spreading effort across a dozen providers. Platforms that aggregate models such as Google Veo 3.1, OpenAI Sora 2, and Kling present a unified front, but dedicated single-model environments, such as those built around Midjourney or Runway video edits (or even a free AI image generator), enforce stylistic discipline without the temptation of perpetual switching.

This preference for quantity over quality runs through creator discussions, but documented user experiences paint a sharper picture. Single-model setups home in on provider-tuned prompts and controls, which makes refinements predictable for tasks like consistent image stylization or targeted video extensions. Multi-model aggregators consolidate access to extensive lineups: VideoGen options like Veo 3.1 Quality, Sora 2, and Kling 2.5 Turbo; ImageGen with Flux 2, Imagen 4, and Midjourney; and AI video editing via Runway Aleph or Luma Modify. They make switching fluid, at the cost of navigating different queue behaviors and resource patterns.

The evaluation is timely given the pace of updates: Google DeepMind's Veo iterations, OpenAI's Sora variants, and Kuaishou's Kling releases are splitting the landscape into isolated specialists and comprehensive hubs. Creators face a daily choice: commit to one tool's reliability for domain-specific deliverables, or use a centralized hub for broad experimentation across providers? Observations suggest freelancers gravitate to single-model precision for client-facing consistency, while agencies adopt multi-model versatility to handle varied project scopes. Solutions like Cliprise represent the aggregation trend, offering a model index with 26 categorized landing pages for reviewing specs and launching workflows directly.

The cost of a poor choice is real: context switching across separate logins, prompt rewrites, and relearned controls can stretch asset production from minutes into hours. This guide covers prerequisites such as reliable connectivity and prompt basics, breaks down the workflow for each paradigm, identifies pitfalls including prompt transfer problems, and outlines a methodical selection framework. It contrasts the needs of freelancers prototyping social assets with those of agencies managing brand diversity, gives sequencing advice such as prototyping images before building video, and covers niche scenarios where compromises remain.

It also explores optimization tactics that span platforms, such as pairing single-model refinement with multi-model exploration, and emerging trends such as shared resource systems that reduce billing friction. For example, platforms like Cliprise let you pair ElevenLabs TTS with video generators under one interface, avoiding layered subscriptions. Understanding these mechanics supports a precise needs audit (is the pipeline image-dominant or video-heavy?) and lets you track indicators like output stability through seed parameters where available. Ignore them, and an ill-suited tool amplifies its flaws, such as inconsistent video renders from identical prompts. Integrations are likely to deepen, including for locked-down enterprise workflows. Grounded in verified model traits, user archetypes, and platform architectures, this breakdown clarifies when single-model specialization outperforms multi-model breadth and when the reverse holds, challenging the default bias toward accumulation.

Core Explanation

Prerequisites for Evaluating Platforms

Accurate platform assessment requires some preparation so that first impressions do not drive the decision. Dependable internet connectivity is essential for sitting through generation queues, particularly with models like Veo 3.1 or Kling 2.5 Turbo, where processing time fluctuates with system load. Develop representative prompts aligned with your objectives, for instance "a cyberpunk cityscape at dusk with cinematic lighting in 16:9 aspect ratio", and test them across the relevant categories. Use any introductory access tiers available, recognizing that they typically impose usage limits or reserve advanced models.

Prompt proficiency is the foundation: understand components such as negative prompts to exclude unwanted elements, CFG scale to control prompt adherence, and seeds for reproducible output in models that support them. Spend 30-45 minutes per platform running 3-5 generations, recording results in a dedicated app with screenshots, timestamps, and notes on features like duration options (5s, 10s, or 15s where offered). The essential tools are a web browser for platform access, viewers for inspecting upscales, and a timer for benchmarking each stage. Novices will spend extra time learning syntax; experienced users should focus on how quickly they can refine. This preparation surfaces subtleties early, such as streamlined interfaces that reduce repeated authentication.
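The benchmarking routine above can be kept honest with a small log. The following is a minimal sketch, assuming you time each generation yourself; the `GenerationRun` fields and the platform and model labels are illustrative and do not come from any platform's API.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class GenerationRun:
    """One timed generation, recorded by hand during a test session."""
    platform: str                # illustrative label, e.g. "single" or "aggregator"
    model: str                   # free-text model name as shown in the UI
    prompt: str
    wait_seconds: float          # measured with your own timer
    duration_s: Optional[int] = None   # requested clip length; None for images
    seed: Optional[int] = None         # None when the model exposes no seed
    notes: str = ""


def summarize(runs):
    """Return the mean wait in seconds per (platform, model) pair."""
    grouped = defaultdict(list)
    for run in runs:
        grouped[(run.platform, run.model)].append(run.wait_seconds)
    return {key: round(mean(waits), 1) for key, waits in grouped.items()}
```

Calling `summarize` on the 3-5 runs per platform gives a like-for-like wait figure to set beside your screenshots and notes.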

What Single-Model Platforms Offer: Core Workflows

Single-model platforms revolve around one provider's engine and tune their interfaces to its idiosyncrasies. You start by choosing the tool, such as Midjourney for artistic imagery or Runway for video manipulation. There is no catalog to navigate, because the design assumes commitment to the engine's core strengths, as seen in Midjourney's Discord-centric prompting or Runway's timeline editing.

Next comes prompt entry in the tool's own syntax: Midjourney relies on parameters like "--ar 16:9 --v 6", whereas Imagen 4 leans toward conversational phrasing with aspect ratio adjustments. Controls are built in: seeds for replication, negative prompts, and CFG for stylistic precision. The payoff is uniformity: identical inputs produce thematically aligned series, which suits branded materials.
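To illustrate the syntax gap, here is a minimal sketch that renders one prompt description two ways. The `PromptSpec` structure and both function names are hypothetical. The Midjourney output uses the parameter style quoted above plus the `--no` and `--seed` flags; the conversational phrasing is only an assumption about what a natural-language model might accept.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PromptSpec:
    """Tool-agnostic description of what you want (hypothetical structure)."""
    subject: str
    aspect_ratio: str = "16:9"
    negatives: List[str] = field(default_factory=list)
    seed: Optional[int] = None


def to_midjourney(spec, version="6"):
    """Render the spec with Midjourney-style trailing parameters."""
    parts = [spec.subject, f"--ar {spec.aspect_ratio}", f"--v {version}"]
    if spec.negatives:
        parts.append("--no " + ", ".join(spec.negatives))
    if spec.seed is not None:
        parts.append(f"--seed {spec.seed}")
    return " ".join(parts)


def to_conversational(spec):
    """Render the spec as plain sentences (assumed phrasing, not an official format)."""
    text = f"{spec.subject}, in a {spec.aspect_ratio} aspect ratio"
    if spec.negatives:
        text += ". Avoid " + " and ".join(spec.negatives)
    return text + "."
```

Keeping the intent in one structure and the dialect in separate renderers means a model switch changes only the renderer, not your prompt library.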

Generation and refinement stay within the tool: downloads are native, and enhancements like upscales or extensions use built-in utilities, such as Runway Aleph's editing sequences. Users report better dependability: a Flux 2 prompt iterates more smoothly in its native environment than when transplanted elsewhere. Verified applications include thumbnail stylization, where dozens of variants are possible in a compact session once the tool is familiar. For video, using Sora 2 in isolation allows granular duration adjustments (Standard versus Pro variants), which reduces variability.

For novices, learning the syntax is demanding at first but gets easier with practice: there is one interface and one growing prompt library. Intermediate users exploit remix features, like Midjourney's regional variations. Advanced practitioners look to API integrations for volume work. The limitation is a compartmentalized reach: adjuncts like voice synthesis via ElevenLabs or upscaling through Topaz require separate access. Even so, for precision demands like character consistency with Ideogram V3, this paradigm excels because it avoids the discrepancies that come from mixing models trained on different data.

What Multi-Model Platforms Provide: Unified Access

Multi-model platforms consolidate diverse providers into a single dashboard, shifting the emphasis from configuration to curation. You begin by browsing a model directory, frequently with 26+ categorized landing pages:

- VideoGen: Veo 3.1 Fast/Quality, Sora 2 variants, Wan 2.5
- ImageGen: Flux 2 Pro/Flex, Google Imagen 4 Standard/Fast/Ultra, Seedream 4.0
- VideoEdit: Luma Modify, Topaz Upscaler
- ImageEdit: Qwen Edit, Recraft Remove BG
- Voice: ElevenLabs TTS

Platforms like Cliprise structure the directory for quick review of specifications, use cases, and parameters (prompt text, aspect ratio, duration options, seed for reproducibility, negative prompts, CFG scale). From there you launch into a single workspace: selecting "Launch" opens a persistent canvas that retains elements across model selections.

Switching models mid-workflow becomes routine, such as moving from a Flux 2 image to a Kling 2.5 Turbo video extension. Setup time shrinks markedly compared with juggling browser tabs. In Cliprise's workflow, for example, you can generate an Imagen 4 base image, enhance it with a Grok upscale, and layer ElevenLabs audio in one continuous flow. The efficiency comes from consolidated resource tracking, which removes provider-by-provider invoicing.

Different users value different things. Newcomers appreciate the category hierarchy, which keeps the catalog from overwhelming them. Practitioners compare Kling against Sora 2 variants side by side, with progress tracked in one place. Specialists chain models, like Nano Banana visuals feeding Hailuo 02 animations. For example, freelancers sketch product visuals with Midjourney and extend them through ByteDance Omni Human, while enterprises match models to specifications: photorealism through Veo 3.1 Quality, abstraction via Flux Kontext Pro. For detailed model comparisons and prompting, see our Kling 3.0 vs Sora 2, Kling 3.0 vs Veo 3, Sora 2 prompts, and Veo 3 prompts guides. For the architectural case for multiple models on one platform, see Multiple AI Models One Platform: Why It Matters.

As a mental model, a single-model platform is a specialized atelier (deep tools, narrow domain), while a multi-model platform is a versatile kit (breadth, shared ergonomics). In practice, multi-model platforms significantly reduce time lost to separate logins in reported sessions, although model-specific prompt adjustments are still needed. Tools like Cliprise help through dynamic model retrieval and toggles. For audio, ElevenLabs slots in naturally after core generation.

This framework supports blended exploration: start broad (scan 47+ options), then narrow down (refine in one model). One concrete case is assembling a social carousel from a Seedream 4.5 image series and Runway Gen4 Turbo segments into a cohesive output. Another is logo ideation in dedicated generators followed by polish in layered editors (masking, filters). The takeaway is that multi-model suits reconnaissance and single-model suits delivery, with each offsetting provider shortcomings, such as Kling's strength in motion complementing Sora's emphasis on storytelling.

What Most Creators Get Wrong About Single vs Multi-Model Platforms

Misconception 1: Multi-Model Platforms Always Accelerate Generation

The assumption that aggregating 47+ models speeds up every process overlooks queue variation. Single-model environments like Midjourney move image tasks along more directly after the initial wait, because capacity is concentrated on one model family. Multi-model setups unify progress tracking but pool traffic, so Veo 3.1 Quality may take moderately longer during surges. The problem sharpens when users queue Kling 2.6 and Wan 2.6 jobs concurrently and hit resource limits sooner. Consider a freelancer assembling image sets: the single-model tool completes batches more predictably, while the multi-model platform takes longer because of shared demand. Experienced operators target quieter periods; novices flood the queue and prolong every stage. Platforms like Cliprise address this with integrated monitoring, yet discrepancies remain.

Misconception 2: Prompts Port Effortlessly Across Platforms

Portability seems intuitive, but syntax differences undermine it. Single-model tools refine toward native dialects, like Midjourney's --stylize versus Flux 2's CFG scale. Multi-model work requires recalibration: negative prompts work robustly in Veo 3 but have a weaker effect in Hailuo 02. For example, "No blur, sharp edges" works well in Imagen 4 Ultra but muddies results in Sora 2 Turbo. The reason is divergent conditioning: providers interpret CFG ranges (typically 1-20) differently when trading off fidelity. A freelancer who transplants Discord phrasing to Cliprise's canvas loses some sharpness and has to iterate repeatedly. Practitioners keep notes on each model's particulars; beginners paste indiscriminately. In environments like Cliprise, cross-model documentation helps, though seed consistency remains a subtlety (reliable in Veo and Sora, variable elsewhere).

Misconception 3: Multi-Model Eliminates Credit/Queue Mental Overhead

The expectation that unified resources remove all oversight ignores cost differences and cognitive load. Single-model tools charge straightforwardly per output, which is predictable for deep work. Multi-model costs vary, modest for Flux Pro imagery and substantial for Kling Master video, so you need to check before generating. Consider an enterprise exploration that moves from Sora 2 Standard to Veo 3.1 Fast and misjudges the accumulated cost (introductory access also limits parallel jobs). The result is interrupted sequences and decision fatigue. The issue is underestimated because guides omit tracking, which means keeping tallies across 26 categories. Cliprise-style unified displays mitigate this, but outliers (for example, resource-intensive Topaz 8K upscales) still catch users off guard. Specialists run the cheap steps first; novices hit limits midstream.
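Checking costs before generating is simple arithmetic. The sketch below uses the credit figures quoted in this article's comparison table and examples (15 for Imagen 4, 37 and 73 for 2K and 8K upscales, 108 and 720 for Veo 3.1 Fast and Quality). The dictionary keys and function names are hypothetical, and the figures should be confirmed against current platform pricing before you rely on them.

```python
# Credit figures as quoted in this article; treat them as illustrative, not current pricing.
CREDIT_COST = {
    "imagen-4": 15,
    "upscale-2k": 37,
    "upscale-8k": 73,
    "veo-3.1-fast": 108,
    "veo-3.1-quality": 720,
}


def plan_cost(steps):
    """Total credits for a list of (model_key, count) steps."""
    return sum(CREDIT_COST[model] * count for model, count in steps)


def affordable_prefix(steps, balance):
    """Walk the steps in order and stop at the first one the balance cannot cover.

    Returns the steps that fit and the credits left over.
    """
    done, remaining = [], balance
    for model, count in steps:
        cost = CREDIT_COST[model] * count
        if cost > remaining:
            break
        done.append((model, count))
        remaining -= cost
    return done, remaining
```

For example, four Imagen 4 images, one 8K upscale, and one Veo 3.1 Quality clip total 60 + 73 + 720 = 853 credits. With a 500-credit balance, `affordable_prefix` shows the pipeline stalling before the video step with 367 credits left.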

Misconception 4: Reproducibility Matches Across Both

Seeds are meant to enable replication, but implementations differ. Single-model tools tune seed and prompt pairings for fidelity (Ideogram Character is steady). Multi-model support varies: Grok Video honors seeds moderately well, Runway Gen4 Turbo only patchily. For example, an identical seed in Flux 2 Pro reproduces imagery closely, but shifting to Qwen Edit deviates noticeably. The cause is incomplete support: robust in Veo 3 and Sora 2, inconsistent in others. An independent creator trying to duplicate a client visualization will need adjustments on a multi-model platform. Platforms like Cliprise flag seed support in model descriptions, but overlooking it leads to chasing variants that cannot be reproduced. Video adds motion unpredictability, which widens the gap. Specialists validate seeds beforehand, which saves substantial iteration in observed cases.
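Validating a seed beforehand can be as simple as generating twice and comparing the results. This is a minimal sketch: `generate` is a hypothetical callable you would wrap around whatever tool you use, returning the output file's bytes. Byte-for-byte comparison is deliberately strict, so outputs that look identical but differ in encoding will count as not reproducible.

```python
import hashlib


def fingerprint(data: bytes) -> str:
    """Stable fingerprint of an output file's bytes."""
    return hashlib.sha256(data).hexdigest()


def is_seed_reproducible(generate, prompt, seed, runs=2):
    """Return True if repeated runs with the same prompt and seed match exactly.

    `generate` is a hypothetical callable: (prompt, seed) -> bytes.
    """
    prints = {fingerprint(generate(prompt, seed)) for _ in range(runs)}
    return len(prints) == 1
```

A failed check does not prove the seed is ignored, only that the outputs are not identical; follow up with a visual comparison before ruling a model out.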

These pitfalls stem from polished demos that gloss over the differences. The practical remedy is to document each model's idiosyncrasies and accept the trade-off: single-model for consistency, multi-model for discovery.

Real-World Implementation & Comparisons

User archetype strongly shapes preference. Freelancers lean toward single-model tools for repeatable precision, such as thumbnail series (the Midjourney crowd), and value fast cycles after setup. Agencies pursue multi-model platforms for range, comparing Veo 3.1 realism against Kling 2.5 motion in a single session. Independent creators blend the two, ideating broadly and then refining narrowly.

The following table compares the key dimensions, based on workflow observations and model specifics:

| Criteria | Single-Model Platforms (e.g., Midjourney, Runway) | Multi-Model Platforms (e.g., Cliprise aggregation) | Hybrid Approach (Single + Multi) |
|---|---|---|---|
| Model Variety | Restricted to one provider's lineup (e.g., Sora Standard/Pro variants, Runway Gen4 Turbo); optimized for depth in images/videos like Midjourney stylization | 47+ models spanning providers (e.g. Veo 3.1 Fast 108 / Quality 720 per video, Sora 2 54-63 per clip, Flux 2 tiered 9-44 credits); spans VideoGen to ImageEdit categories | Merges 1-2 single-model specialists with multi breadth; effectively accesses 20+ models for ideation-to-execution pipelines |
| Workflow Switching Time | Absent internally; 30-60s to alternate tools via new logins/prompt transfers; streamlined for native chains | Quick model transitions via index navigation and launch; maintains canvas continuity for sequences like Flux 2 image to Hailuo 02 extension | 15-30s per pivot; incorporates export/import steps but diminishes full re-authentications in linked processes |
| Prompt Reuse | Provider syntax exclusive (e.g., Midjourney --ar 16:9); superior fidelity internally, moderate portability externally | Adaptable across models (universal seed/CFG/negative prompts where supported); aided by specs docs for tweaks like Veo 3 to Kling 2.5 Turbo | 60-80% retention; custom templates span gaps, e.g., Imagen 4 negatives to Qwen Edit refinements |
| Queue Handling | Provider-dedicated (typical for focused volumes); consistent for modest scales, variable at peaks | Consolidated queue across 26+ categories; incorporates monitoring for ongoing jobs like Veo 3.1 or Wan 2.5 | Flexible; multi-model for exploratory queues, single for finalization; optimizes waits in staged workflows |
| Output Control | Intensive native options (duration 5s/10s/15s, full aspect ratios); seed dependable in models like Ideogram V3 | Extensive parameters (prompt/aspect/seed/negative/CFG); model-dependent (reliable in Veo 3/Sora 2, variable in Grok Video) | Optimized blend; single-model specifics (Runway Aleph edits), multi-model variety testing |
| Scalability | Apt for batch processing in moderate volumes; API essential for elevated demands | Accommodates broader scopes via unified resources; adapts with plan capabilities for models like Topaz 8K at 73 credits | Optimal for substantial outputs; multi-model ideation (initial tests), single-model production batches |

The table shows that multi-model platforms offer breadth at the cost of per-model adjustments, while single-model tools offer speed within a niche. Notably, hybrid strategies frequently improve workflow efficiency in documented scenarios by feeding exploratory outputs into refined execution.

Illustration 1: A freelancer assembling social reels. Single-model (Kling native): produces batches of short clips with consistent motion. Multi-model (Cliprise-style): compares Kling against Wan 2.5, picks the better result, and adds ElevenLabs TTS, which takes longer but widens the options. Hybrid: multi-model for initial prototypes, single-model for final refinement.

Illustration 2: An agency product rollout. Multi-model stands out here: Imagen 4 visuals at 15 credits, an upscale via Grok/Topaz 2K-8K (37/73 credits), and video through Sora 2 Pro form a complete pipeline in one integrated flow. Single-model covers only part of that scope. Hybrid: Midjourney alone for styling, multi-model for the extension steps.

Illustration 3: An independent creator's YouTube thumbnail-to-intro sequence. The image starts in Flux 2 (multi-model) and is extended via Runway Gen4 Turbo, a compact process. Starting in a single-model video tool means the static image has to be prepared elsewhere first. Forum discussions suggest freelancers frequently choose single-model for uniformity and agencies multi-model for adaptability. Cliprise users note fluid pivots, such as Recraft background removal followed by Ideogram V3 characters.

A further case is audio-augmented promos: ElevenLabs TTS follows video generation on a multi-model platform but is unavailable in image-centric single-model tools. Freelancers tend to evaluate throughput first: constrained needs suit single-model, while expansive needs favor multi-model. Cliprise setups with model toggles support this kind of scalability evaluation.

Why Order Matters: Image-First vs Video-First

Creators commonly start with video generation, which loads the pipeline with a high-variance, resource-heavy first step. This is a mistake because video engines like Sora 2 or Veo 3.1 need precise motion phrasing, and early errors propagate: revising a 10s segment costs far more per attempt than an image's quicker cycle. Observed sessions show higher abandonment when video leads, because concepts that were never tested as stills turn out to have compositional flaws. Novices chase striking motion; experts validate compositions first.

Cognitive load rises too: alternating between assessing flawed videos and revising prompts is tiring. Waits compound on single-model video tools; multi-model platforms unify the flow but still require monitoring across steps (image upscale to extension). Users report extra time spent documenting variance and making choices. Platforms like Cliprise keep image-to-video references, which substantially reduces re-uploads, though poor sequencing magnifies CFG and seed variance.

Use image → video for prototyping: a Flux 2 base image, a Topaz upscale, then a Kling 2.5 Turbo extension is typically faster than starting from video because of the difference in processing time. Reverse the order for motion-centric work (TikTok routines: start in Hailuo 02, then extract frames). The rationale is that stills test layout cheaply, and motion is layered on top of approved visuals. Freelancers report time savings; agencies reach sign-off faster.

Workflow patterns: image-first pipelines transfer consistency better where seeds apply, while video-first pipelines lag as motion shifts. Tools like Cliprise support this through categorization, with ImageGen feeding VideoGen. Specialists order their steps as image → edit (Qwen) → upscale → video → voice. Sequenced workflows show fewer regenerations.
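The image → edit → upscale → video → voice ordering can be expressed as a gated pipeline, where each stage must be approved before the next, more expensive one runs. This is a minimal sketch under stated assumptions: the stage callables and the `approve` callback are hypothetical stand-ins for your own generation steps and review process.

```python
# Stage order taken from the sequence described above.
STAGE_ORDER = ["image", "edit", "upscale", "video", "voice"]


def run_pipeline(stages, approve):
    """Run the provided stages in the fixed order, stopping at the first rejection.

    stages:  dict mapping a stage name to a callable(previous_asset) -> asset.
             Stages missing from the dict are skipped.
    approve: callable(stage_name, asset) -> bool, e.g. a manual review step.

    Returns (completed_stage_names, rejected_stage_name_or_None).
    """
    asset = None
    completed = []
    for name in STAGE_ORDER:
        if name not in stages:
            continue
        asset = stages[name](asset)
        if not approve(name, asset):
            return completed, name
        completed.append(name)
    return completed, None
```

Because the loop returns as soon as a stage is rejected, a still that fails review never reaches the video step, which is where the resource savings of image-first sequencing come from.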

When Single or Multi-Model Platforms Don't Help

Boundary scenario 1: Hyper-specialized aesthetics tied to exclusive datasets. Niche creators pursuing precise historical renditions hit gaps, because engines like Ideogram V3 or Flux Kontext Pro generalize and miss obscure source material. Single-model offers a marginal edge; multi-model spreads trials thin without result. For example, a portrait specialist who needs 18th-century oils can run prompts across 47+ models and get only approximations after hours of work. The root cause is lack of control over the dataset; custom fine-tuning is unavailable.

Boundary scenario 2: High-throughput production hitting queue limits. Enterprises generating hundreds of assets weekly run into concurrency constraints, and introductory access is more restricted than advanced tiers. Multi-model platforms consolidate work but saturate; a single-model provider scales poorly without enterprise access. For example, in an e-commerce catalog project, delays cascade and timelines slip. Cliprise-like platforms with monitoring handle intermediate volumes, but scale exposes the limits.

Boundary scenario 3: Novices overwhelmed by choice. 26+ categories can paralyze: choosing between Veo and Kling without reading the specs leads to haphazard picks and subpar results. Single-model simplifies the choice but confines skills to one tool.

Neither approach suits domain artists who prefer traditional tools, occasional hobbyists who want to avoid resource costs, or studios with proprietary engines. The honest constraints are irreproducible outputs (engines without seed support), queue fluctuations, and prompt restrictions that obstruct intricate visions. Control over the algorithm and data opacity remain unresolved. Multi-model aids exploration and single-model aids immersion, but neither fixes these foundational gaps or scales instantly.

Industry Patterns and Future Directions

Adoption trends favor aggregators: platforms with 47+ models like Cliprise appear in a growing number of creator toolkits, outpacing isolated tools. The draw is centralized navigation (scan the directory, launch, pivot), which resonates with freelancers according to discussions. Single-model tools retain traction where depth is required (Runway editing).

Developments under way include tighter linkages, such as Flux-to-Luma Modify for image-to-video, and ElevenLabs expansions for audio. Consolidated resources are becoming the norm, shrinking multiple logins to one.

Over the next 6-12 months, expect enterprise access and white-label offerings. Video extension is advancing (Wan Speech2Video), and 8K upscales are becoming standard. To prepare, build proficiency in the universal controls (seeds, CFG) and run hybrid trials. Creators can adapt by auditing their mix of image and video work. Cliprise lets you preview options through structured categories.

Related Articles

- All AI Models in One Subscription: End Tool Chaos

- Multiple AI Models One Platform: Why It Matters in 2026

- Multi-Model AI Platforms: Why Creators Are Ditching Single-Tool Subscriptions

- AI Content Creation: The Complete Guide 2026

- The Shift to Multi-Model AI Platforms: Industry Trends 2027

- Migrating from Single-Model to Multi-Model Workflows

- Why Single-Model Platforms Quietly Limit Creative Output

- Multi-Model Workflows on Cliprise

- What Is a Multi-Model AI Creative Workflow

- AI Model Comparison: 47 Models Instant


Conclusion

The choice depends on requirements: single-model for consistent, deep work (the Midjourney crowd), multi-model for breadth (47+ models to explore), and hybrid for balance. Misconceptions like flawless prompt portability fade once you document model behavior, and image-first sequencing saves cycles. The comparison table lays out the trade-off: ease of switching versus granularity of control.

Next steps: test your prompts across 3 models, order work as image → video, and track seeds. Reduce queue delays by generating during quiet periods.

Platforms like Cliprise provide multi-model workflows natively, for example Flux-to-Kling cascades. As the landscape evolves, it will favor versatile operators who can navigate the differences between models.