Part of the multi-model strategy series. For the full platform comparison, see Single vs Multi-Model Platforms: Complete Guide. Ready to switch? Read the Migration Guide.

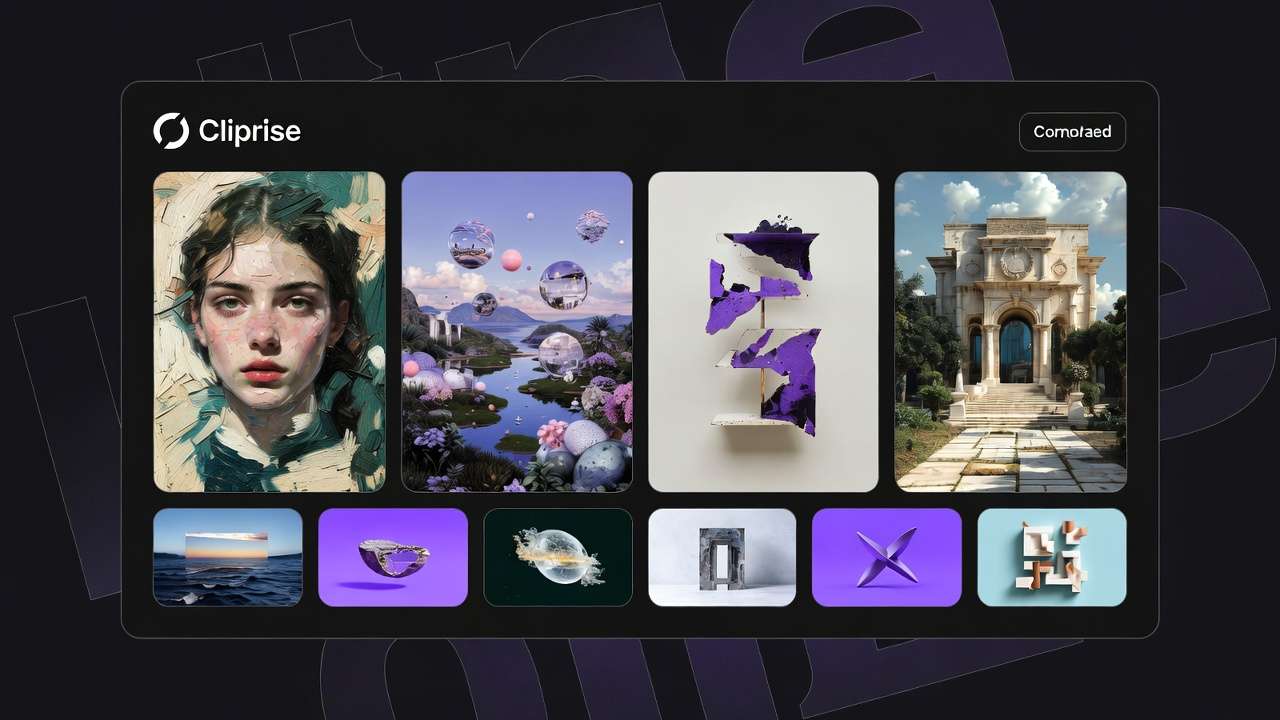

Relying exclusively on a single AI generation engine constrains output diversity, and those constraints tend to be perceived as the creator's skill limitations rather than recognized as the model's architectural boundaries. Applying one specialized AI art generator to everything (Midjourney for all visual needs, Sora for every video requirement) forces mismatched task-model pairings that a strategy of complementary engines resolves systematically.

The "master one tool" philosophy delivers short-term consistency but creates long-term scaling constraints. Documented creator workflows show measurably higher project completion rates and greater output variety when specialized models are deployed per task requirement, compared with forcing a single tool onto every need regardless of fit.

This analysis examines the hidden costs of single-model dependency, contrasts output diversity under single- and multi-model approaches, and sets out frameworks for deciding when adding models genuinely improves a workflow and when focus remains the better choice.

Hidden Constraint 1: Training Data Specialization Limits

Single-Model Reality: Every AI model is trained on specific datasets that favor particular output characteristics. Midjourney emphasizes artistic interpretation; Flux prioritizes photorealism; Sora focuses on narrative coherence; Kling optimizes for social motion energy.

Constraint Manifestation:

- Prompts that succeed in one model fail in another despite identical wording

- Artistic models struggle with commercial photorealism requirements

- Narrative-focused video models underperform on high-energy social content

- Physics-heavy requirements exceed the training data scope of generalist models

Documented Impact Patterns:

- Prompt success rate variance: 40-70% across models for identical requirements

- Creative direction shifts: Accepting model limitations rather than exploring alternatives

- Output homogenization: All work carrying the same model's stylistic signature

- Abandoned concepts: Ideas that exceed one model's capability dismissed entirely

Multi-Model Resolution:

- Commercial photography: Flux 2 photorealism versus Midjourney artistic interpretation

- Video energy levels: Kling social optimization versus Sora narrative focus

- Motion types: Hailuo physics simulation versus ByteDance character animation

- Style requirements: Model selection matching project aesthetic needs precisely

Measurement: Test an identical prompt across three complementary models. Single-model users accept the first result; multi-model users select the best interpretation from the comparative options.
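The comparative test above can be sketched as a small harness. This is a minimal illustration, not a vendor integration: the generator functions are hypothetical stand-ins for each model's own API, and the scores are hypothetical reviewer ratings supplied by a scoring callback.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Candidate:
    model: str
    output: str
    score: float


def compare_models(
    prompt: str,
    generators: Dict[str, Callable[[str], str]],
    scorer: Callable[[str, str], float],
) -> List[Candidate]:
    """Run one prompt through every model and return results ranked best-first."""
    candidates = []
    for model, generate in generators.items():
        output = generate(prompt)  # the identical prompt goes to every model
        candidates.append(Candidate(model, output, scorer(model, output)))
    # A single-model user stops at one output; here every option is ranked.
    return sorted(candidates, key=lambda c: c.score, reverse=True)


# Hypothetical stand-ins: real calls would go through each provider's own API.
generators = {
    "Midjourney": lambda p: f"[Midjourney] {p}",
    "Flux 2": lambda p: f"[Flux 2] {p}",
    "Ideogram": lambda p: f"[Ideogram] {p}",
}
# Hypothetical 0-10 reviewer ratings for this one prompt.
ratings = {"Midjourney": 6.0, "Flux 2": 8.5, "Ideogram": 7.0}

ranked = compare_models(
    "studio photo of a ceramic mug on white",
    generators,
    lambda model, _output: ratings[model],
)
print([c.model for c in ranked])  # ['Flux 2', 'Ideogram', 'Midjourney']
```

In practice the scorer is usually a human review step; the harness only guarantees that every model sees the same prompt and that the choice is made between options rather than by default.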

Hidden Constraint 2: Format and Technical Specification Limitations

Single-Model Reality: Models optimize specific technical parameters (aspect ratios, durations, resolution ranges) based on training priorities and architectural decisions.

Constraint Categories:

| Limitation Type | Single-Model Impact | Multi-Model Solution |
|---|---|---|
| Aspect Ratio Support | Limited native options forcing post-generation cropping artifacts | Models specialized per platform format (9:16 social, 16:9 professional, 1:1 feed) |
| Duration Options | Fixed ranges (e.g., 5s/10s only) constraining content planning | Complementary models filling duration gaps systematically |
| Resolution Capabilities | Training resolution limits requiring upscaling workarounds | Models optimized per quality tier (draft, delivery, print) |
| Parameter Controls | Inconsistent seed support, CFG availability, advanced negative prompting handling | Selection based on reproducibility and control requirements |

Documented Workflow Impacts:

- Platform content requiring non-native aspect ratios: Composition loss through cropping

- Series production needing specific durations: Content planning constrained by model limitations

- Multi-format campaigns: Regeneration required versus seed-based derivatives

- Reproducibility requirements: Model lacking seed support forcing workflow abandonment

Strategic Model Matching: Select models per platform that natively support the destination's requirements, rather than forcing format adaptation after generation.
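Platform-specific matching amounts to filtering a capability registry against each destination. The sketch below assumes you maintain such a registry yourself; the model names and capability values are placeholders, not the actual specifications of any product.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class ModelSpec:
    name: str
    aspect_ratios: FrozenSet[str]
    durations_s: FrozenSet[int]  # native clip lengths in seconds
    supports_seed: bool


@dataclass(frozen=True)
class Destination:
    name: str
    aspect_ratio: str
    duration_s: Optional[int] = None  # None for still images
    needs_seed: bool = False  # e.g. multi-format derivatives from one seed


def native_matches(dest: Destination, registry: List[ModelSpec]) -> List[str]:
    """Return models that meet the destination natively, with no cropping or trimming."""
    matches = []
    for spec in registry:
        if dest.aspect_ratio not in spec.aspect_ratios:
            continue  # would require post-generation cropping
        if dest.duration_s is not None and dest.duration_s not in spec.durations_s:
            continue  # would require trimming or stitching
        if dest.needs_seed and not spec.supports_seed:
            continue  # reproducibility requirement not met
        matches.append(spec.name)
    return matches


# Placeholder specs, NOT the real capabilities of any named product.
registry = [
    ModelSpec("social-video-model", frozenset({"9:16", "1:1"}), frozenset({5, 10}), True),
    ModelSpec("cinematic-video-model", frozenset({"16:9"}), frozenset({8}), False),
]

print(native_matches(Destination("vertical short", "9:16", 10), registry))
print(native_matches(Destination("widescreen spot", "16:9", 8, needs_seed=True), registry))
```

An empty result is the signal the section describes: nothing in the current toolkit covers that destination natively, so either post-generation adaptation or an additional model is needed.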

Hidden Constraint 3: Workflow Stage Mismatches

Single-Model Reality: Individual models optimize either exploration velocity OR final quality, rarely both. Quality-focused processing extends timelines; speed-optimized outputs lack polish.

Constraint Manifestations:

Quality-First Single Model Approach:

- Problem: Premium processing allocated during exploratory concept testing

- Impact: Limited creative exploration (3-5 attempts) within fixed budgets

- Result: First acceptable output chosen instead of the strongest validated direction

Speed-First Single Model Approach:

- Problem: Fast model outputs lack delivery-standard polish

- Impact: Extensive post-production required or client dissatisfaction

- Result: Time savings during generation lost to enhancement overhead

Multi-Model Stage Matching:

- Exploration: Fast models (Veo Fast, Kling Turbo) enable 15-20 concept tests

- Validation: Comparative review identifying strongest 2-3 directions

- Finals: Quality models (Veo Quality, Sora Pro) with locked seeds regenerate validated concepts

- Enhancement: Targeted Topaz or Luma refinements raise final quality efficiently
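The exploration, validation, and finals stages above can be sketched as three small functions. This is an illustration under stated assumptions: the model names are only labels (no API is called), the review scores are hypothetical, and the fixed-seed step assumes the finals model honors seeds. The enhancement stage is omitted.

```python
from typing import Callable, Dict, List

# Stage-to-model labels taken from the list above; no real API is called.
EXPLORATION_MODEL = "Veo Fast"
FINALS_MODEL = "Veo Quality"


def explore(concepts: List[str], rate: Callable[[str, str], float]) -> Dict[str, float]:
    """Exploration: one cheap fast-model draft per concept, each given a review score."""
    return {concept: rate(EXPLORATION_MODEL, concept) for concept in concepts}


def validate(scores: Dict[str, float], keep: int = 3) -> List[str]:
    """Validation: keep the strongest directions by comparative review."""
    return sorted(scores, key=scores.get, reverse=True)[:keep]


def plan_finals(validated: List[str], base_seed: int = 1000) -> List[dict]:
    """Finals: one quality-model job per validated concept with a fixed seed,
    so the render can be reproduced (assuming the model supports seeds)."""
    return [
        {"model": FINALS_MODEL, "concept": concept, "seed": base_seed + i}
        for i, concept in enumerate(validated)
    ]


# Hypothetical review scores for fast-model drafts.
draft_scores = {"neon alley": 7.5, "rainy rooftop": 8.2, "desert drone": 6.1,
                "subway crowd": 8.9, "forest dawn": 5.4}
scores = explore(list(draft_scores), lambda model, concept: draft_scores[concept])
jobs = plan_finals(validate(scores))
for job in jobs:
    print(job)
```

Only the validated concepts ever reach the expensive model, which is what lets the exploration stage run wide.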

Measured Advantages: Staged workflows produce 3-5x exploration volume while maintaining equivalent final quality versus single-model universal application.
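The exploration multiplier is simple budget arithmetic. The credit prices below are hypothetical; they are chosen only to show how the ratio arises, and real pricing varies by provider and plan.

```python
def quality_first_attempts(budget: int, quality_cost: int) -> int:
    """Attempts possible when every generation uses the quality model."""
    return budget // quality_cost


def staged_explorations(budget: int, fast_cost: int, quality_cost: int, finals: int) -> int:
    """Fast-model explorations left after reserving budget for quality finals."""
    reserve = finals * quality_cost
    if reserve > budget:
        raise ValueError("budget cannot cover the planned finals")
    return (budget - reserve) // fast_cost


# Hypothetical credit prices.
budget, fast_cost, quality_cost = 100, 2, 20
single = quality_first_attempts(budget, quality_cost)
staged = staged_explorations(budget, fast_cost, quality_cost, finals=3)
print(single, staged, staged / single)  # 5 20 4.0
```

The multiplier depends almost entirely on the price gap between the fast and quality tiers: with a fast cost of 4 credits, the same budget yields 10 explorations, only 2x the quality-first approach.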

Hidden Constraint 4: Specialization Task Gaps

Single-Model Reality: No single model excels at every creative requirement at once: character consistency, commercial products, social energy, cinematic narratives, and precise text rendering.

Gap Categories and Multi-Model Coverage:

Character Consistency Across Series:

- Single model: Visual drift across episodes despite prompt consistency

- Multi-model: Ideogram Character + Flux seed control maintaining identity systematically

Commercial Product Precision:

- Single model: Artistic interpretation inappropriate for e-commerce

- Multi-model: Flux 2 photorealism + Qwen Edit targeted refinements

Social Media Motion Energy:

- Single model: Narrative-focused motion underperforming algorithmically

- Multi-model: Kling 2.5 Turbo social optimization matching platform characteristics

Professional Polish Requirements:

- Single model: Speed variants lacking client-delivery quality

- Multi-model: Fast exploration → Veo Quality validated finals pipeline

Text Rendering Accuracy:

- Single model: Inconsistent typography across attempts

- Multi-model: Ideogram text specialization + alternative fallbacks

Strategic Deployment: Build model performance profiles documenting strengths per task category. Deploy specialized engines matching requirements rather than forcing universal application.
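A model performance profile can be as simple as a success log per task category and model, which then yields both a first choice and a fallback order. This is a minimal sketch; the class name, the `min_runs` cutoff, and the recorded outcomes are hypothetical.

```python
from collections import defaultdict
from typing import DefaultDict, List


class PerformanceProfile:
    """Success log per (task category, model) pair, used to pick and fall back."""

    def __init__(self) -> None:
        self._runs: DefaultDict[str, DefaultDict[str, List[bool]]] = defaultdict(
            lambda: defaultdict(list)
        )

    def record(self, task: str, model: str, success: bool) -> None:
        self._runs[task][model].append(success)

    def success_rate(self, task: str, model: str) -> float:
        runs = self._runs.get(task, {}).get(model, [])
        return sum(runs) / len(runs) if runs else 0.0

    def ranked(self, task: str, min_runs: int = 3) -> List[str]:
        """Models ordered best-first; everything after the first is the fallback chain.
        Models with fewer than `min_runs` results are left out until they have a track record."""
        candidates = [
            model
            for model, runs in self._runs.get(task, {}).items()
            if len(runs) >= min_runs
        ]
        return sorted(candidates, key=lambda m: self.success_rate(task, m), reverse=True)


profile = PerformanceProfile()
# Hypothetical outcomes for the "text rendering" category.
for ok in [True, True, True, True, False]:
    profile.record("text rendering", "Ideogram", ok)
for ok in [True, False, True, False, False]:
    profile.record("text rendering", "Flux 2", ok)
for ok in [True, False]:
    profile.record("text rendering", "Midjourney", ok)  # only 2 runs: not ranked yet

print(profile.ranked("text rendering"))  # ['Ideogram', 'Flux 2']
```

The minimum-run cutoff keeps a single lucky result from promoting an untested model to the top of the routing order.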

When Single-Model Focus Remains Superior

Appropriate Single-Model Contexts:

Established Workflow Mastery:

- Deep model proficiency (6+ months intensive use)

- Documented prompt-seed libraries with proven success rates (>70% first-attempt)

- Task requirements matching model specialization precisely

- Consistent output quality meeting all project needs

Simple, Narrow Requirements:

- Static imagery exclusively (one image generation model suffices)

- Single platform destination (format-matched model)

- Stylistic signature desired (artistic consistency across portfolio)

- Budget constraints preventing multi-tool subscriptions

Learning Phase Optimization:

- Initial 2-3 months: Master single model thoroughly

- Build foundational prompting skills and parameter understanding

- Establish baseline workflow competency

- Then expand strategically to complementary models addressing identified gaps

Team Coordination Requirements:

- Small teams benefiting from standardized single-tool workflows

- Reduced training overhead versus multi-model proficiency expectations

- Consistent quality through unified approach
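The documented prompt-seed library mentioned under Established Workflow Mastery can be kept as one record per recipe. This sketch assumes each reuse is logged as accepted on the first attempt or not; the field and function names are hypothetical, and the 70% threshold comes from the text.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PromptRecipe:
    model: str
    prompt: str
    seed: int
    uses: int = 0
    first_attempt_hits: int = 0

    def log_use(self, accepted_first_try: bool) -> None:
        self.uses += 1
        self.first_attempt_hits += int(accepted_first_try)

    @property
    def first_attempt_rate(self) -> float:
        return self.first_attempt_hits / self.uses if self.uses else 0.0


def proven(library: List[PromptRecipe], threshold: float = 0.7) -> List[PromptRecipe]:
    """Recipes whose first-attempt success rate exceeds the threshold (>70% by default)."""
    return [r for r in library if r.first_attempt_rate > threshold]


# Hypothetical recipes and usage history.
hero = PromptRecipe("Midjourney", "watercolor city skyline at dusk", seed=4821)
for ok in [True, True, False, True, True]:
    hero.log_use(ok)
banner = PromptRecipe("Midjourney", "ink sketch of a lighthouse", seed=77)
for ok in [True, False] * 5:
    banner.log_use(ok)

print([r.prompt for r in proven([hero, banner])])
```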

Decision Framework: Stay with a single model when its specialization matches 80% or more of project requirements and expansion would introduce coordination complexity exceeding the capability gains.
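The decision rule combines two conditions, and both must hold. In this sketch, requirements and model strengths are plain sets of labels, and the coordination cost and capability gain are assumed to be estimated on one shared scale (for example, hours per month); the framework itself does not specify how to quantify them.

```python
from typing import Set


def coverage(requirements: Set[str], model_strengths: Set[str]) -> float:
    """Share of project requirements that fall inside the model's specialization."""
    if not requirements:
        return 1.0
    return len(requirements & model_strengths) / len(requirements)


def stay_single_model(
    requirements: Set[str],
    model_strengths: Set[str],
    coordination_cost: float,
    capability_gain: float,
    threshold: float = 0.8,
) -> bool:
    """True only if coverage meets the threshold AND expansion is not worth its overhead."""
    return (
        coverage(requirements, model_strengths) >= threshold
        and coordination_cost > capability_gain
    )


# Hypothetical project: 4 of 5 requirements sit inside the current model's strengths.
requirements = {"product stills", "lifestyle stills", "banner art", "social crops", "text overlays"}
strengths = {"product stills", "lifestyle stills", "banner art", "social crops"}

print(coverage(requirements, strengths))                      # 0.8
print(stay_single_model(requirements, strengths, 3.0, 2.0))   # True
print(stay_single_model(requirements, strengths, 3.0, 5.0))   # False
```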

Multi-Model Expansion Decision Framework

Expand Model Toolkit When:

Output Limitation Signals:

- Identical prompts consistently produce suboptimal results in single model

- Creative concepts abandoned as seemingly impossible (actually a model limitation)

- Extensive post-production required compensating for generation gaps

- Client feedback patterns indicating stylistic or quality mismatches

Workflow Efficiency Signals:

- Quality-first approach exhausting budgets before validation

- Speed-first outputs requiring extensive enhancement overhead

- Format adaptation introducing composition or quality compromises

- Series production exhibiting visual consistency challenges

Project Diversity Signals:

- Client requirements spanning styles (photorealistic + artistic)

- Platform destinations demanding varied motion characteristics (social energy + professional polish)

- Multi-format campaigns requiring aspect ratio and duration flexibility

- Specialization needs (character consistency, text rendering, physics accuracy)

Scaling Requirement Signals:

- Output volume demands exceeding single-model exploration capacity

- Team coordination requiring parallel specialized workflows

- Campaign complexity necessitating staged exploration → validation → finals architecture
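One way to turn the signal lists above into a checklist is to tally observed signals per category. The short labels below are shorthand for the bullets in each category, and the threshold of two signals per category is a hypothetical cutoff, not part of the framework.

```python
from typing import Dict, List, Set

# Shorthand labels for the signals listed above, grouped by category.
SIGNALS: Dict[str, Set[str]] = {
    "output limitation": {"suboptimal results", "abandoned concepts",
                          "heavy post-production", "client mismatch"},
    "workflow efficiency": {"budget exhausted early", "enhancement overhead",
                            "format compromises", "series inconsistency"},
    "project diversity": {"mixed styles", "mixed motion", "multi-format",
                          "specialized needs"},
    "scaling": {"volume exceeds capacity", "parallel workflows",
                "staged architecture"},
}
KNOWN = set().union(*SIGNALS.values())


def tally(observed: Set[str]) -> Dict[str, int]:
    unknown = observed - KNOWN
    if unknown:
        raise ValueError(f"unrecognized signals: {sorted(unknown)}")
    return {category: len(signals & observed) for category, signals in SIGNALS.items()}


def categories_to_address(observed: Set[str], threshold: int = 2) -> List[str]:
    """Categories with at least `threshold` observed signals, strongest first."""
    counts = tally(observed)
    flagged = [c for c, n in counts.items() if n >= threshold]
    return sorted(flagged, key=counts.get, reverse=True)


observed = {"abandoned concepts", "heavy post-production", "format compromises"}
print(tally(observed))
print(categories_to_address(observed))  # ['output limitation']
```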

Strategic Expansion Approach:

- Identify Primary Limitation: Which constraint categories affect current workflows most?

- Test Complementary Model: Single addition addressing identified gap specifically

- Validate Improvement: Measure output quality, workflow efficiency, completion rates

- Document Integration: Establish when each model deploys optimally

- Expand Systematically: Add models addressing the remaining gaps one at a time rather than adopting tools scattershot
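The expansion steps above describe a greedy loop: address the largest gap first, test one complementary model, and keep it only if the measured metric improves. In this sketch, `measure` is a hypothetical callback returning a metric such as completion rate for a given toolkit, and the `min_gain` cutoff and uplift values are illustrative assumptions.

```python
from typing import Callable, Dict, List, Tuple


def expand_sequentially(
    base_model: str,
    gap_impact: Dict[str, int],
    candidate_for_gap: Dict[str, str],
    measure: Callable[[List[str]], float],
    min_gain: float = 0.05,
) -> Tuple[List[str], List[str]]:
    """Add one complementary model per gap, biggest gap first, keeping it only
    if the measured metric improves by at least `min_gain`."""
    toolkit = [base_model]
    log: List[str] = []
    current = measure(toolkit)
    for gap in sorted(gap_impact, key=gap_impact.get, reverse=True):
        candidate = candidate_for_gap[gap]
        trial = measure(toolkit + [candidate])  # test this single addition
        if trial - current >= min_gain:
            toolkit.append(candidate)
            current = trial
            log.append(f"kept {candidate} for {gap}: {trial:.2f}")
        else:
            log.append(f"dropped {candidate} for {gap}: {trial:.2f}")
    return toolkit, log


# Hypothetical completion-rate uplift observed in trial projects.
uplift = {"Flux 2": 0.12, "Kling 2.5 Turbo": 0.02, "Ideogram": 0.08}


def measure(toolkit: List[str]) -> float:
    return 0.65 + sum(uplift.get(m, 0.0) for m in toolkit)


toolkit, log = expand_sequentially(
    "Midjourney",
    {"commercial photorealism": 5, "social motion": 3, "text rendering": 2},
    {"commercial photorealism": "Flux 2", "social motion": "Kling 2.5 Turbo",
     "text rendering": "Ideogram"},
    measure,
)
print(toolkit)  # ['Midjourney', 'Flux 2', 'Ideogram']
for line in log:
    print(line)
```

Testing one addition at a time is what makes the measured improvement attributable to a specific model.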

Measurement: Single vs Multi-Model Output Analysis

Comparative Metrics:

Output Variety:

- Single-model: 1 viable interpretation per 3-5 attempts on average

- Multi-model: 2-3 viable interpretations per equivalent attempts (comparative selection)

Project Completion Rates:

- Single-model: 60-75% concepts reaching delivery standards

- Multi-model: 80-90% concepts achieving requirements (specialized tool matching)

Iteration Efficiency:

- Single-model: 4-6 regeneration cycles on average per successful output

- Multi-model: 2-3 cycles via strategic initial model selection

Creative Exploration Volume:

- Single-model: 5-8 concepts tested within typical project timeline

- Multi-model: 12-18 concepts via fast-model exploration allocation

Timeline Predictability:

- Single-model: High (familiar processing patterns)

- Multi-model: Medium initially, high after proficiency (3-6 month learning curve)
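These metrics can be computed from a simple per-concept log, kept the same way for a single-model period and a multi-model period so the two can be compared. The log format and project data below are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ConceptLog:
    concept: str
    cycles: int       # generation + regeneration cycles spent on this concept
    delivered: bool   # reached delivery standard


def completion_rate(logs: List[ConceptLog]) -> float:
    return sum(log.delivered for log in logs) / len(logs)


def cycles_per_success(logs: List[ConceptLog]) -> float:
    """All cycles spent, including on abandoned concepts, per delivered output."""
    successes = sum(log.delivered for log in logs)
    if successes == 0:
        return float("inf")
    return sum(log.cycles for log in logs) / successes


# Hypothetical project log.
project = [
    ConceptLog("launch teaser", 4, True),
    ConceptLog("product loop", 6, False),
    ConceptLog("founder portrait", 5, True),
    ConceptLog("feature explainer", 3, True),
]
print(len(project), completion_rate(project), cycles_per_success(project))
# 4 0.75 6.0
```

Counting cycles spent on abandoned concepts is a deliberate choice: it charges dead ends against iteration efficiency instead of hiding them.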


Understanding single-model constraints, hidden capability gaps, and strategic multi-model expansion frameworks prevents misattributing output limitations to the creator. Expand creative capacity by matching specialized tools to each task rather than forcing a single engine onto mismatched requirements.