NVIDIA announced at CES 2026 that RTX GPUs can now generate 4K AI video locally - without cloud infrastructure - through a new pipeline combining the LTX-2 model from Lightricks with NVIDIA RTX Video upscaling technology and ComfyUI optimization. The announcement marks the first time consumer-grade hardware can produce 4K AI video entirely offline, closing a capability gap that had persisted since the emergence of cloud-based frontier models in 2024.

The announcement is significant for a specific creator segment: those who prefer local generation for privacy, latency, or cost reasons. For mainstream commercial production, cloud-based frontier models (Kling 3.0, Veo 3.1) remain the quality standard - but local AI video generation now reaches a specification that was previously impossible without enterprise infrastructure.

The LTX-2 + NVIDIA Pipeline

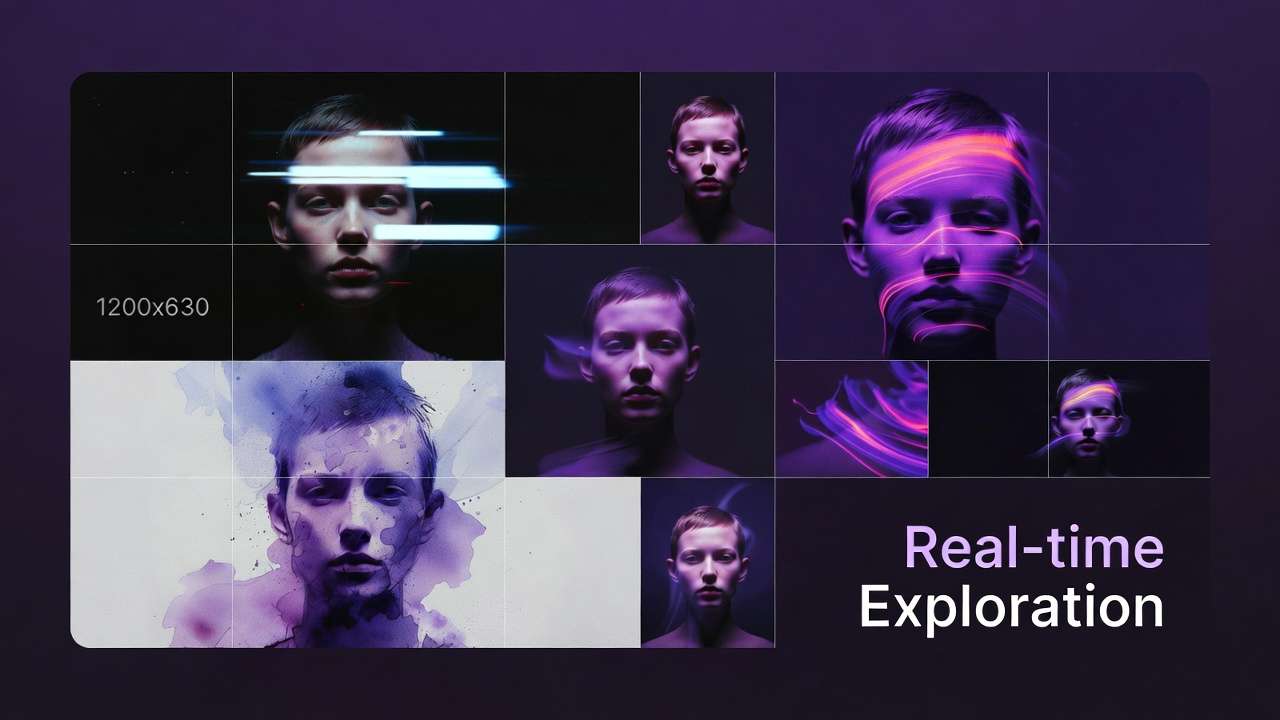

LTX-2, released by Lightricks simultaneously with the NVIDIA CES announcement, is described as a "major milestone for local AI video creation" - a model that generates up to 20 seconds of video with built-in audio and multi-keyframe support, and reaches 4K output via NVIDIA RTX Video upscaling.

The full pipeline:

- LTX-2 model generates the base video with controllability via keyframe anchoring and conditioning LoRAs. Keyframe support lets creators lock specific frames (opening, closing, or midpoint) to guide composition and motion - a feature that brings local generation closer to the control available in cloud models like Kling 3.0.

- NVIDIA RTX Video technology upscales the output to 4K resolution. The upscaler is trained specifically for video, preserving temporal consistency rather than applying per-frame image upscaling that can introduce flicker.

- ComfyUI provides the workflow interface, with recent updates delivering a 40% performance optimization on NVIDIA GPUs. ComfyUI's node-based workflow is the standard for power users running Stable Video Diffusion, AnimateDiff, and other local models; LTX-2 slots into that ecosystem.

NVIDIA claims the pipeline generates 4K video "3x faster" than previous local generation approaches and runs in "a fraction of the VRAM" that previous 4K generation would have required. The hardware requirement is an RTX-class GPU with sufficient VRAM - NVIDIA's announcement targets GeForce RTX, RTX PRO, and DGX Spark devices. Exact VRAM requirements were not specified at CES, but RTX 4070 (12GB) and above are expected to run the pipeline; RTX 4090 (24GB) would provide headroom for longer generations.

Comparing Local vs. Cloud for 4K AI Video

LTX-2's local 4K uses AI upscaling from a lower base resolution - technically 4K output, but not natively generated at 4K. Cloud-based Kling 3.0 generates natively at 4K/60fps. The distinction matters for production quality at close inspection (native 4K preserves more fine detail), less so for web and social delivery where compression reduces the practical difference. The AI video resolution guide explains when 4K matters for delivery.
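To make the native-versus-upscaled distinction concrete, the sketch below computes how many output pixels the upscaler must produce for each pixel the model actually generates. The base resolutions listed are illustrative assumptions only; the base resolution LTX-2 renders at before upscaling is not stated here.

```python
# Ratio of 4K output pixels to generated base pixels.
# The base resolutions below are hypothetical examples, not LTX-2 specifications.
UHD_4K = (3840, 2160)

HYPOTHETICAL_BASES = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
}


def pixel_ratio(base: tuple[int, int], target: tuple[int, int] = UHD_4K) -> float:
    """Return how many target pixels correspond to one base pixel."""
    base_pixels = base[0] * base[1]
    target_pixels = target[0] * target[1]
    return target_pixels / base_pixels


if __name__ == "__main__":
    for name, size in HYPOTHETICAL_BASES.items():
        print(f"{name} -> 4K: {pixel_ratio(size):.2f}x pixels per frame")
```

A higher ratio means more of each frame is inferred by the upscaler rather than generated by the model, which is where the fine-detail difference from native 4K would be expected to show up.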

| Attribute | Local (LTX-2 + NVIDIA) | Cloud (Kling 3.0 via Cliprise) |
|---|---|---|
| 4K type | Upscaled via RTX Video | Native generation |
| Hardware required | RTX GPU ($400-$800+) | Browser/app only |
| Privacy | Fully local | Cloud processing |
| Generation speed | Fast on capable hardware | 30-120 sec via API |
| Cost model | Hardware upfront, then free | $9.99/mo |
| Audio generation | Built-in (LTX-2) | Native (Kling 3.0) |

What It Means for Creators

The pipeline primarily serves a creator segment that has been unable to use high-quality AI video generation for privacy or latency reasons - financial services, healthcare-adjacent content, legal content, and other fields where cloud processing of sensitive material is a concern. For these users, the choice was previously binary: use cloud models and accept data leaving their environment, or use lower-quality local models. LTX-2 + NVIDIA narrows that gap.

Privacy-first workflows: Content involving confidential footage, proprietary product designs, or regulated subject matter (healthcare, legal) often cannot be sent to cloud APIs. LTX-2 enables 4K-quality output without data leaving the machine. Corporations with strict data governance policies can now adopt AI video generation for internal communications and training materials.

Latency-sensitive use cases: Real-time or near-real-time generation (live event overlays, interactive experiences) benefits from local processing. A cloud round trip adds 30-120 seconds per generation; a local pipeline reduces that to seconds on capable hardware. For applications where generation time blocks user flow, local execution may be the only viable path.

Long-term cost optimization: Creators generating high volume (hundreds of videos per month) may find that upfront GPU investment pays off versus ongoing cloud credit costs. The breakeven depends on volume and model mix - the cost optimization guide covers cloud cost structuring for comparison.

For the broader creator and commercial production market, cloud-based frontier models continue to provide the best quality-to-cost-to-accessibility ratio without hardware investment requirements. Most creators don't have an RTX 4070 or better; cloud access via Cliprise requires only a browser.

ComfyUI Updates and Ecosystem Impact

Alongside the LTX-2 and RTX Video announcement, NVIDIA confirmed a 40% performance optimization for ComfyUI on NVIDIA GPUs. The ComfyUI update also adds support for the NVFP4 and NVFP8 data formats, lower-precision formats that reduce memory footprint and increase throughput. These improvements are not specific to LTX-2: they also benefit Stable Video Diffusion, AnimateDiff, and any other model the community ports to ComfyUI.

The ComfyUI ecosystem has become the de facto standard for local AI video experimentation.
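The memory effect of the lower-precision formats described in this section can be estimated with simple arithmetic. The sketch below assumes a hypothetical 10-billion-parameter model (an illustrative figure, not LTX-2's actual size) and counts only nominal bits per weight, ignoring scale-factor overhead, activations, and other runtime memory.

```python
# Rough weight-memory estimate at different numeric precisions.
# HYPOTHETICAL_PARAMS is an illustrative assumption, not a published figure.
HYPOTHETICAL_PARAMS = 10_000_000_000

# Nominal bits per weight; per-block scale factors are ignored.
BITS_PER_WEIGHT = {"16-bit": 16, "8-bit": 8, "4-bit": 4}


def weight_memory_gib(num_params: int, bits_per_weight: int) -> float:
    """Return the memory needed to store the weights, in GiB."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 2**30


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        gib = weight_memory_gib(HYPOTHETICAL_PARAMS, bits)
        print(f"{name:>6}: {gib:6.2f} GiB")
```

Halving the bits per weight halves weight storage, which is the main reason such formats let larger models fit in a fixed VRAM budget; real-world savings are somewhat smaller once scale factors and activations are counted.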

When to Choose Local vs. Cloud

The LTX-2 + NVIDIA pipeline doesn't replace cloud-based frontier models - it serves a different segment. Creators who prioritize privacy (confidential footage, regulated industries), latency (real-time or near-real-time generation), or long-term cost (high volume, hardware already owned) benefit from local generation. Creators who prioritize quality-to-cost ratio without hardware investment, access to the latest models (Sora 2, Kling 3.0, Veo 3.1), and minimal setup friction benefit from cloud access via Cliprise or similar platforms. Hybrid workflows are viable: local generation for sensitive or latency-critical content, cloud for everything else. The single vs multi-model platforms guide covers cloud platform evaluation.

Creators who already own RTX hardware should evaluate LTX-2 for privacy-sensitive or latency-sensitive use cases; those starting from zero should factor GPU cost (~$600-1200 for capable hardware) against 12-24 months of cloud credits at $9.99-29.99/mo before committing to local infrastructure. Lightricks (LTX-2 developer) has positioned the model for prosumer and professional creators who prefer local workflows; the ComfyUI integration ensures compatibility with the broader ecosystem. For creators evaluating the build-vs-buy decision, the cost optimization guide provides cloud cost benchmarks. LTX-2 represents the first viable local 4K option for consumer hardware; prior local models were limited to 1080p or required enterprise-grade hardware.
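The build-vs-buy comparison can be worked through as a breakeven calculation. The sketch below uses only the GPU and subscription figures quoted above and makes simplifying assumptions: a flat monthly fee with no extra credit purchases, and no electricity, resale value, or other system costs.

```python
import math

# Figures quoted in the text; everything else is a simplifying assumption.
GPU_COSTS = (600.0, 1200.0)    # upfront hardware cost, USD
CLOUD_PLANS = (9.99, 29.99)    # flat monthly subscription, USD


def breakeven_months(gpu_cost: float, cloud_monthly: float) -> int:
    """Whole months of cloud fees needed to match or exceed the GPU cost."""
    if gpu_cost < 0 or cloud_monthly <= 0:
        raise ValueError("gpu_cost must be >= 0 and cloud_monthly must be > 0")
    return math.ceil(gpu_cost / cloud_monthly)


def breakeven_table() -> dict[tuple[float, float], int]:
    """Breakeven month for every GPU cost / cloud plan combination."""
    return {
        (gpu, plan): breakeven_months(gpu, plan)
        for gpu in GPU_COSTS
        for plan in CLOUD_PLANS
    }


if __name__ == "__main__":
    for (gpu, plan), months in breakeven_table().items():
        print(f"${gpu:.0f} GPU vs ${plan}/mo: breakeven at month {months}")
```

Under these assumptions, only the $600 GPU against the $29.99 plan breaks even inside the 12-24 month window (month 21); the other combinations take 41 months or longer. High-volume creators who buy credits beyond a flat plan would reach breakeven sooner, which is why the result depends heavily on volume.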
