Stable Diffusion is powerful. It is also a project that can consume days of setup time before you generate a single image - GPU compatibility, Python environments, ComfyUI configuration, model downloads, VRAM management. Once it works, it is remarkably capable. Getting it to work is the problem most people run into.

Hosted alternatives solve the setup problem at the cost of some customisation depth. This guide lays out those trade-offs honestly, identifies which Cliprise models most closely match what people use Stable Diffusion for, and explains when self-hosting is still the right choice.

What Stable Diffusion Offers That Hosted Platforms Don't

Be honest about this before choosing.

LoRA fine-tuning. The Stable Diffusion community has produced thousands of LoRA models - fine-tunes that produce specific artistic styles, characters, aesthetic filters, and content types with remarkable precision. If you need a very specific visual style or character consistency that requires fine-tuning, self-hosted Stable Diffusion or platforms that support LoRA uploads are the right tool.

Custom checkpoints. Community checkpoints such as Juggernaut, DreamShaper, and SDXL Turbo variants each produce distinct output characteristics. A self-hosted setup lets you switch between them whenever you like.

ComfyUI workflows. Advanced node-based workflows, inpainting pipelines, ControlNet pose and depth conditioning, batch automation - ComfyUI's flexibility is genuinely beyond what most hosted platforms offer.

Unlimited generation. Running Stable Diffusion on your own GPU has no per-generation cost beyond electricity, once the hardware itself is paid for.

If any of the above are central to your workflow, self-hosted Stable Diffusion is probably still the right choice.
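The "electricity only" point can be checked with a little arithmetic. The sketch below estimates the energy cost of one locally generated image and the number of images at which buying a GPU becomes cheaper than paying per image. Every figure in it (wattage, seconds per image, electricity price, hardware cost, hosted price per image) is a hypothetical placeholder, not a measured value or a Cliprise price, and the function names are illustrative only.

```python
def electricity_cost_per_image(gpu_watts, seconds_per_image, price_per_kwh):
    """Energy cost of one image, in the currency of price_per_kwh."""
    kwh_per_image = (gpu_watts / 1000) * (seconds_per_image / 3600)
    return kwh_per_image * price_per_kwh


def break_even_images(hardware_cost, hosted_cost_per_image, local_cost_per_image):
    """Images needed before owning the GPU is cheaper than paying per image.

    Returns None when the hosted price is not higher than the local running
    cost, because the hardware is then never paid back.
    """
    saving_per_image = hosted_cost_per_image - local_cost_per_image
    if saving_per_image <= 0:
        return None
    return hardware_cost / saving_per_image


# All figures below are hypothetical placeholders; substitute your own.
local = electricity_cost_per_image(gpu_watts=350, seconds_per_image=10, price_per_kwh=0.30)
images = break_even_images(hardware_cost=1600, hosted_cost_per_image=0.04, local_cost_per_image=local)
print(f"Electricity per image: {local:.6f}")
print(f"Break-even after roughly {images:,.0f} images")
```

The calculation ignores setup time, maintenance, and hardware resale value, so treat the result as a rough lower bound on how much you need to generate before self-hosting pays off.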

What Hosted Platforms Offer That Self-Hosted Doesn't

Zero setup. Open a browser tab, generate an image. No Python, no CUDA, no driver conflicts, no 40GB model downloads.

No hardware requirements. Works on any device, including phones, tablets, MacBooks with integrated graphics, and low-end laptops. Hardware that can't run Stable Diffusion can still run Cliprise.

Access to closed models. Midjourney, Kling, ElevenLabs, Google Veo, OpenAI Sora - none of these are available in the open-source ecosystem. Cliprise includes all of them alongside models equivalent to the open-weight ones.

Reliability. No crashes, no out-of-memory errors, no generation queue to manage. The platform handles infrastructure.

No maintenance. Stable Diffusion requires ongoing updates, dependency management, and occasional troubleshooting. Hosted platforms update models automatically.

Cliprise Models Closest to Stable Diffusion Use Cases

Photorealistic Generation (SDXL / Juggernaut equivalent)

Flux 2 - The closest hosted equivalent to what SDXL produces at its best: photorealistic portraits, products, architectural scenes, and food photography. Material rendering accuracy matches or exceeds most SDXL checkpoints without fine-tuning.

The practical difference is small: Flux 2 without fine-tuning comes close to SDXL with a well-configured Juggernaut checkpoint. Flux 2 may produce slightly softer edge detail in some cases; SDXL with the right LoRA stack may produce more stylistically distinctive output.

Artistic and Illustrated Styles (Community SD LoRA equivalent)

Midjourney for high-aesthetic artistic output. Produces images that feel styled and considered rather than literally rendered - what the SD community achieves with artistic LoRAs and aesthetic model variants.

Seedream 4.5 / 5.0 Lite for illustrated and anime-adjacent styles - what SD users commonly achieve with anime-style fine-tunes.

Ideogram v3 for designs with text integration - a capability that requires specific SD workflows to achieve reliably.

Inpainting and Image Editing (SD inpainting equivalent)

Flux Kontext - AI image editing that modifies specific elements of existing images. Not identical to SD inpainting workflows (which operate at the pixel level with mask selection), but achieves similar outcomes - changing backgrounds, objects, and elements in existing images - without the mask-drawing workflow.

How to Start on Cliprise

If you are coming from Stable Diffusion:

- Your SD prompts will mostly work. The prompt vocabulary - lighting descriptors, style references, quality boosters - transfers directly. Expect to rely less on quality terms that SD specifically responds to ("masterpiece, best quality") and more on direct quality descriptors ("professional photography, high detail, sharp focus").

- You do not need negative prompts for basic use. SD users rely heavily on negative prompts to suppress common artifacts. Cliprise models - particularly Flux 2 and Midjourney - produce cleaner outputs by default. Try without negatives first; add targeted negatives only if specific issues appear.

- Seed control works the same way. Lock a seed to reproduce results. See Seeds & Consistency →

- CFG scale is available on most models. See CFG Scale Guide →
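The prompt adjustment described in the first point can be sketched as a small text transformation. This is a minimal illustration, assuming a comma-separated SD-style prompt; `adapt_sd_prompt` and the two term lists are hypothetical helpers built from the examples quoted above, not part of any Cliprise or Stable Diffusion API.

```python
# SD-specific quality boosters to drop (taken from the examples above).
SD_QUALITY_TAGS = {"masterpiece", "best quality"}

# Direct quality descriptors to add instead (also from the examples above).
DIRECT_DESCRIPTORS = ["professional photography", "high detail", "sharp focus"]


def adapt_sd_prompt(prompt: str) -> str:
    """Swap SD quality boosters for direct quality descriptors."""
    # Split on commas and normalise whitespace around each term.
    terms = [term.strip() for term in prompt.split(",")]
    # Keep every non-empty term that is not an SD-specific booster.
    kept = [t for t in terms if t and t.lower() not in SD_QUALITY_TAGS]
    # Append only the descriptors the prompt does not already contain.
    present = {t.lower() for t in kept}
    extra = [d for d in DIRECT_DESCRIPTORS if d not in present]
    return ", ".join(kept + extra)


sd_prompt = "masterpiece, best quality, portrait of a chef, soft window light"
print(adapt_sd_prompt(sd_prompt))
```

The subject and lighting terms pass through untouched, which matches the advice that most of the prompt vocabulary transfers directly. Whether the added descriptors help on a given model is something to test by comparing outputs with a locked seed.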

Direct Comparison: Self-Hosted vs Cliprise

| Factor | Self-hosted SD | Cliprise |
|---|---|---|
| Setup time | Hours to days | Zero |
| Hardware required | 16GB+ GPU | None |
| LoRA / fine-tuning | Full support | Not available |
| ComfyUI workflows | Full support | Not available |
| Closed models (Midjourney, Kling, etc.) | Not available | Included |
| Per-generation cost | Electricity only | Credits / subscription |
| Reliability | Dependent on local setup | Managed infrastructure |
| Mobile access | Limited | Full (iOS + Android) |

Note

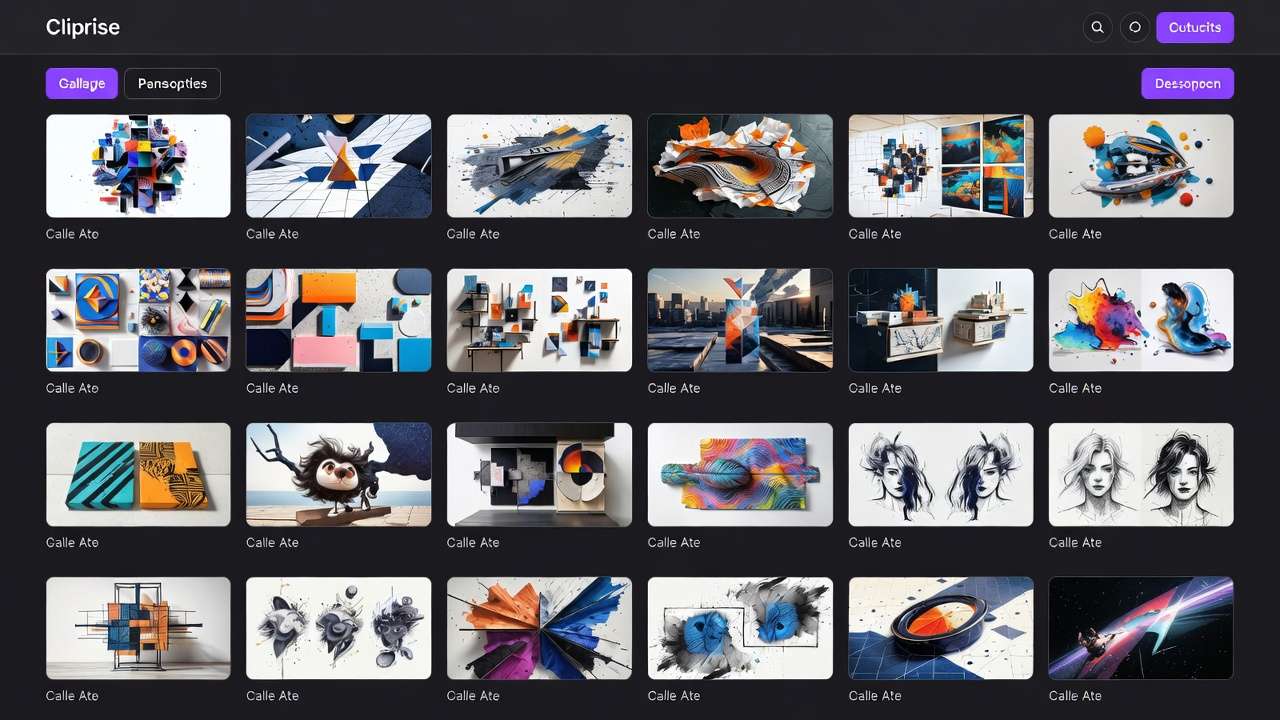

Flux 2, Midjourney, Ideogram v3, and 45+ models on Cliprise. No GPU, no setup, works on any device. Try Cliprise Free →

Models on Cliprise: