Midjourney excels at image generation, yet as a standalone tool it largely confines users to static visuals amid 2026's demand for integrated image-to-video pipelines. Cliprise embeds Midjourney within an ecosystem of 47+ third-party AI tools spanning images, video, editing, and voice, so workflows can extend beyond a single image without switching tools.

Introduction

Cliprise operates as an aggregation platform for third-party AI models. It integrates Midjourney via API in its ImageGen category alongside options such as Flux 2 and Google Imagen 4. VideoGen draws on Google Veo models and OpenAI Sora, while Voice uses ElevenLabs tools. This setup makes Midjourney one selectable image generator inside Cliprise, distinct from Midjourney's own Discord and web access.

This comparison contrasts Cliprise's ecosystem, including Midjourney, with direct Midjourney usage, focusing on image features, multimodal extensions, platform delivery, and credit operations. The analysis draws on platform details: 47+ models across the ImageGen, VideoGen, ImageEdit, VideoEdit, and Voice categories; credit consumption with daily resets; and app and web interfaces backed by PocketBase and n8n workflows. Cliprise centralizes access through mobile apps and a web PWA under a unified credit system, while standalone Midjourney centers on images.

Users on Cliprise select Midjourney from a dynamically fetched ImageGen list and can switch between it and Flux 2, Seedream variants, Qwen, and Nano Banana. This database-driven selection supports rapid experimentation without multiple logins. Standalone Midjourney lacks the video, editing, and voice models that Cliprise bundles. For workflows that blend modalities, Cliprise's structure (app-initiated generations orchestrated by n8n) streamlines the progression from Midjourney images to Sora videos, according to platform documentation.

Platform Overview

Cliprise aggregates models across defined categories: ImageGen (Flux 2, Midjourney, Google Imagen 4, Seedream 3.0/4.0/4.5, Qwen, Nano Banana); VideoGen (Veo 3, Veo 3.1 Quality/Fast, Sora 2, Kling 2.5 Turbo, Wan 2.5, Hailuo 02, Runway Gen4 Turbo, ByteDance Omni Human); VideoEdit (Runway Aleph, Luma Modify, Topaz Video Upscaler); ImageEdit (Qwen Edit, Ideogram V3/Character, Recraft Remove BG); and Voice (ElevenLabs TTS, Sound FX, Speech-to-Text, Audio Isolation). Generations deduct credits through a token system with daily resets, orchestrated by PocketBase databases and n8n automation.

Midjourney appears in Cliprise's ImageGen category, accepting prompts and parameters like any other model. On its own, it lacks Cliprise's broader toolkit. Cliprise delivers through iOS (Firebase Analytics ID 12283057410) and Android (12282997909) apps, plus a web PWA at app.cliprise.app, with community feeds, public profiles, media downloads, and reporting.

In practice, Cliprise supports end-to-end pipelines: a Midjourney-generated image can move directly to Qwen Edit or to Sora 2 for video extension. Standalone Midjourney requires external tools for such steps, which fragments the process. All Cliprise models are third-party, underscoring its role as a neutral aggregator rather than a model developer.

Model Access and Variety

Cliprise's ImageGen roster (Midjourney, Flux 2, Google Imagen 4, Seedream 3.0/4.0/4.5, Qwen, Nano Banana) permits direct comparisons in a single interface. VideoGen expands this with Veo 3 variants, Sora 2, Kling 2.5 Turbo, Wan 2.5, Hailuo 02, Runway Gen4 Turbo, and ByteDance Omni Human. Models are populated via PocketBase queries, with toggles controlling availability.
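The database-driven model list can be pictured as a filter over model records. The sketch below is a minimal illustration, assuming a hypothetical record shape with `name`, `category`, and `enabled` fields; it does not reproduce Cliprise's or PocketBase's actual schema, which this article does not describe.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRecord:
    # Hypothetical record shape: field names are assumptions, not Cliprise's schema.
    name: str
    category: str  # e.g. "ImageGen", "VideoGen", "Voice"
    enabled: bool  # the availability toggle


def available_models(records: list[ModelRecord], category: str) -> list[str]:
    """Return the names of enabled models in one category, preserving record order."""
    return [r.name for r in records if r.category == category and r.enabled]


records = [
    ModelRecord("Midjourney", "ImageGen", True),
    ModelRecord("Flux 2", "ImageGen", True),
    ModelRecord("Google Imagen 4", "ImageGen", False),  # toggled off
    ModelRecord("Sora 2", "VideoGen", True),
]

print(available_models(records, "ImageGen"))  # ['Midjourney', 'Flux 2']
```

Flipping a record's `enabled` flag changes what the selector shows without a code change, which is the practical benefit of driving the list from a database.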

Accessing Midjourney through Cliprise retains its image strengths while unlocking alternatives for varied styles. Photorealism might favor Imagen 4; artistic renders suit Midjourney. An n8n-based prompt enhancer refines inputs uniformly, while flow states track sessions across models.

This variety enables structured A/B testing: running identical prompts with fixed seeds (where supported) across Midjourney and Flux 2 exposes differences in output fidelity and style adherence. In 2026, models keep proliferating from providers such as Google DeepMind, OpenAI, Kuaishou, Alibaba, Hailuo AI, Runway, ByteDance, Black Forest Labs, Ideogram, and ElevenLabs. Cliprise's single dashboard reduces login overhead and context switching compared with scattered individual subscriptions. For analysts tracking adoption, this consolidation aligns with rising multimodal demand, as creators favor platforms that reduce operational silos.
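A seeded A/B run amounts to sending the same prompt, aspect ratio, and seed to each model under test, and omitting the seed where a model does not accept one. The sketch below builds such a request list. `GenerationRequest`, `build_ab_requests`, and the `SEED_SUPPORT` table are hypothetical placeholders rather than Cliprise's API; Midjourney's entry follows this article's list of controls, while the other entries are assumptions to verify per model.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical seed-support table; entries other than Midjourney are assumptions.
SEED_SUPPORT = {"Midjourney": True, "Flux 2": True, "other-model": False}


@dataclass(frozen=True)
class GenerationRequest:
    model: str
    prompt: str
    aspect_ratio: str
    seed: Optional[int]  # None when the model does not accept a seed


def build_ab_requests(models: list[str], prompt: str,
                      aspect_ratio: str = "1:1", seed: int = 42) -> list[GenerationRequest]:
    """Build one request per model with identical inputs, dropping unsupported seeds."""
    return [
        GenerationRequest(
            model=m,
            prompt=prompt,
            aspect_ratio=aspect_ratio,
            seed=seed if SEED_SUPPORT.get(m, False) else None,
        )
        for m in models
    ]


reqs = build_ab_requests(["Midjourney", "Flux 2", "other-model"],
                         "lighthouse at dusk, oil painting")
for r in reqs:
    print(r.model, r.seed)
```

Holding prompt, aspect ratio, and seed fixed leaves the model as the only variable; outputs from models that ignore seeds will still vary from run to run.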

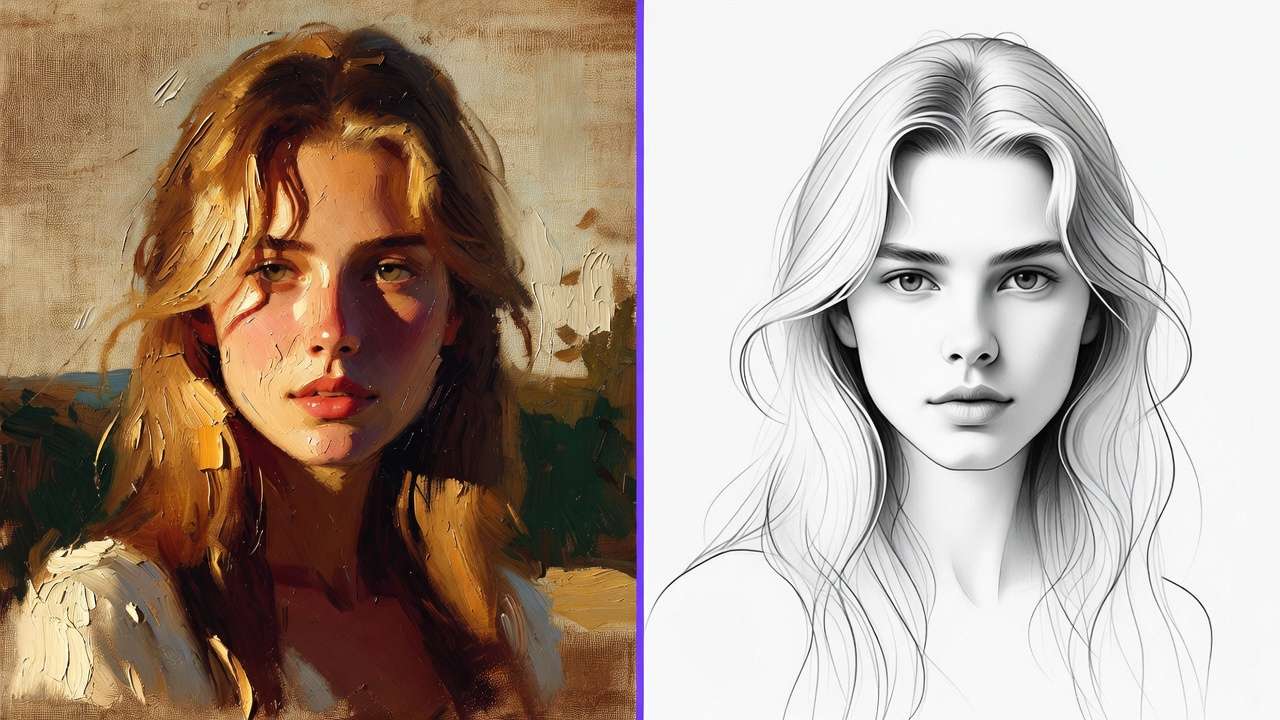

Image Generation Capabilities

Cliprise standardizes Midjourney's controls to match its ImageGen peers: prompt text, aspect ratios (including social-optimized presets), seeds for reproducibility, negative prompts, and CFG scale. Outputs integrate with ImageEdit tools (Qwen Edit, Ideogram V3/Character, Recraft Remove BG) or upscalers such as Grok Upscale.

Standalone Midjourney offers comparable controls but cannot chain its output into other tools. Cliprise's uniform UI speeds up model swaps, such as moving from Seedream to Midjourney mid-project. Seeds improve iterative consistency where models support them, though outputs still vary in unseeded runs.

For professional work, Cliprise augments images with Pro Image Editor (layering, masking, filters), AI Background Remover (Recraft), and Universal Upscaler (up to 8K). A Midjourney output can be polished through these tools, forming workflows that are not possible in isolation. Consider a marketing campaign: Midjourney crafts hero visuals, Recraft isolates elements, and upscaling prepares assets for print, all within one session and subject to credit availability.

Video and Multimodal Generation

Cliprise's VideoGen supports durations such as 5s, 10s, and 15s at various resolutions, bounded by model limits and queues. Key models include Google DeepMind's Veo 3/3.1 (Quality/Fast), OpenAI's Sora 2, Kuaishou's Kling 2.5 Turbo, Alibaba's Wan 2.5, MiniMax's Hailuo 02, Runway's Gen4 Turbo, and ByteDance's Omni Human.

Voice integration via ElevenLabs adds text-to-speech, sound effects, speech-to-text, and audio isolation. Multimodal extensions such as Wan Speech2Video bridge audio and video. Standalone Midjourney offers no voice tools; inside Cliprise, its images can feed these video and audio models.

Workflows can use Midjourney images as references (supported on select models), feeding them into video extensions or style transfers. Constraints shape usage: the free tier is limited to 1 concurrent queue and 1 video per day, while paid plans allow up to 5 concurrent queues. Credit checks run before generation starts, which prevents jobs from halting mid-process, and n8n handles the daily resets.
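The constraints above amount to a gate evaluated before a job is queued. The sketch below assumes only the limits stated in this article (a verified email, enough credits for the job, 1 concurrent queue and 1 video per day on the free tier, 5 concurrent queues on paid plans); the `Account` fields, function name, rejection messages, and the example cost of 4 credits are hypothetical, since per-model costs are not given here.

```python
from dataclasses import dataclass
from typing import Optional

QUEUE_LIMITS = {"free": 1, "paid": 5}   # concurrent queues, per this article
FREE_DAILY_VIDEO_LIMIT = 1              # free-tier videos per day


@dataclass
class Account:
    # Hypothetical account fields for illustration only.
    plan: str             # "free" or "paid"
    email_verified: bool
    credits: int
    active_jobs: int      # jobs currently running or queued
    videos_today: int


def check_before_generation(acct: Account, cost: int, is_video: bool) -> Optional[str]:
    """Return a rejection reason, or None if the job may start."""
    if not acct.email_verified:
        return "email not verified"
    if acct.credits < cost:
        return "insufficient credits"
    if acct.active_jobs >= QUEUE_LIMITS[acct.plan]:
        return "queue limit reached"
    if is_video and acct.plan == "free" and acct.videos_today >= FREE_DAILY_VIDEO_LIMIT:
        return "daily free video limit reached"
    return None


free_user = Account(plan="free", email_verified=True, credits=10, active_jobs=0, videos_today=1)
print(check_before_generation(free_user, cost=4, is_video=False))  # None
print(check_before_generation(free_user, cost=4, is_video=True))   # daily free video limit reached
```

Running every check before any credits are deducted is what allows a job to be refused up front instead of failing partway through.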

This multimodal stack addresses a 2026 trend in which, per industry reports, 70% of content incorporates motion and sound. Cliprise users can chain Midjourney stills into Veo videos to produce shorts or ads efficiently, while standalone Midjourney workflows stop at the still frame.

Editing and Upscaling Tools

ImageEdit encompasses Qwen Edit for inpainting/outpainting, Ideogram V3/Character for refinements, and Recraft Remove BG for cleanups. VideoEdit features Runway Aleph for advanced edits, Luma Modify for alterations, and Topaz Video Upscaler for resolution boosts.

Standalone Midjourney's built-in editing (remix and variations) is comparatively limited, whereas Cliprise layers dedicated post-production on top. Recraft automates background removal and Topaz upscales videos, both integrating directly with generations. Iterative edits require a verified email and are gated by available credits.

These tools enable precise pipelines: upscale a Midjourney image to 8K, edit it with Qwen, then extend it into video with Kling. This modularity suits agencies handling client revisions without exporting files between tools.

Platforms and Availability

Cliprise prioritizes mobile, with Firebase-integrated iOS and Android apps supplemented by a web PWA. Desktop support remains partial. Within Cliprise, Midjourney is available through these same channels.

Mobile enables on-the-go creation, and the iOS app supports audio sharing, subject to device permissions. The marketing site at cliprise.app links to the app at app.cliprise.app through model-specific calls to action.

Account-based sync unifies sessions across platforms, which matters for remote teams moving between devices.

Pricing and Credit System

Credits govern everything on Cliprise: a signup bonus, daily resets handled by n8n, monthly subscriptions, and one-time purchases. The free tier grants 30 credits once at signup, then 10 credits per day (a non-cumulative daily refill), 1 video per day where applicable, and public outputs. Paid plans unlock more credits, premium models, and private outputs.
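To make the free-tier arithmetic concrete, the sketch below tracks an end-of-day balance. It assumes one reading of "non-cumulative": each daily reset tops the balance up to 10 rather than adding 10, so unused daily credits never stack, while leftover signup credits stay spendable. That reading, the function name, and the simplification of capping an oversized request at the available balance (the platform would instead refuse it) are all assumptions.

```python
SIGNUP_BONUS = 30   # one-time free-tier grant
DAILY_REFILL = 10   # free-tier daily allowance


def simulate_free_balance(daily_spend: list[int]) -> list[int]:
    """Return the end-of-day balance for each day of requested spending.

    Assumption: each reset tops the balance up to DAILY_REFILL (no stacking).
    Simplification: spending beyond the balance is capped rather than refused.
    """
    balance = SIGNUP_BONUS
    history = []
    for day, spend in enumerate(daily_spend):
        if day > 0:
            balance = max(balance, DAILY_REFILL)  # daily reset: top up, never stack
        balance -= min(spend, balance)
        history.append(balance)
    return history


print(simulate_free_balance([25, 0, 3, 12]))  # [5, 10, 7, 0]
```

Under this reading, a user who saves credits gains nothing from the refill until the balance drops below 10, which is the behavior "no carryover" implies.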

Midjourney generations draw from the same credit balance as every other model; there is no separate Midjourney pricing. API access and white-labeling are reserved for Business and Enterprise tiers. Top-ups require a paid plan, and no plan is unlimited.

This system incentivizes measured use, aligning costs with output volume across models.

Free Tier Limitations

Free access starts with 30 signup credits, then caps the ongoing refill at 10 credits per day, plus 1 video per day where applicable, with outputs publicly visible (and potentially showcased). Premium models prompt an upgrade, and an unverified email or insufficient credits blocks generation.

These guardrails encourage scaling while sampling variety.

User Controls and Reproducibility

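Controls span prompts, aspect ratio, duration (5s/10s/15s for video), seeds (supported on models such as Veo 3 and Sora 2), negative prompts, and CFG scale. Outputs vary, and there are no guarantees on generation time or model internals. Some features are only partially supported: multi-image references and style or video extensions.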

Inside Cliprise, Midjourney exposes the same controls. Reproducibility aids prototyping, though model-specific quirks still introduce variance.

Community and Sharing Features

App features include community feeds, public profiles, downloads, and reporting. Free-tier outputs default to public, which fosters discovery.

Enterprise Options

API access and white-label options are restricted to Business and Enterprise tiers. Whether Midjourney is available through those channels is not specified.

Limitations and Constraints

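Some limits apply across all models: credits, concurrent queues (free: 1, paid: 5), and daily resets with no carryover. Other constraints include the free-tier video cap, partial desktop support, Veo 3.1 audio being unavailable in roughly 5% of cases, and blocks on flagged IP addresses and disposable email addresses.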

Midjourney generations inside Cliprise are subject to all of these constraints.

Use Cases Comparison

| Use Case | Cliprise (with Midjourney) | Standalone Midjourney |
|---|---|---|
| Static Images | 7+ models (Flux 2, Imagen 4, Seedream, etc.) | Image specialization |
| Video Production | 8+ models (Veo 3, Sora 2, Kling, etc.) | None |
| Editing Pipelines | Image/VideoEdit (Qwen, Topaz, Recraft) | Remix only |
| Mobile Creation | iOS/Android apps + PWA | Cliprise-dependent |
| Multimodal Workflows | Voice/ElevenLabs + upscaling | Images only |
| Enterprise | API/white-label (paid) | N/A |


Conclusion

Cliprise incorporates Midjourney into a 47+ model ecosystem that spans modalities and devices under a single credit system, extending images into videos and edits. Standalone Midjourney prioritizes depth in one area, while Cliprise's aggregation suits comprehensive 2026 workflows. The core trade-off is specialization versus integration.