Introduction

Part of the AI content creation series. For the complete guide, see AI Content Creation: Complete Guide 2026.

Regulations rarely kill creativity; they reroute it. In sectors like AI-generated images and video, compliance has quietly opened doors to enterprise clients wary of unlabeled deepfakes, helping solo creators become trusted vendors. Platforms like Cliprise, which aggregate models such as Veo 3.1 and Sora 2, show how structured workflows can align with these rules without slowing output.

As 2026 unfolds, AI regulation shifts from broad warnings to enforceable phases. The EU AI Act enters its third enforcement stage, bringing transparency and disclosure obligations for synthetic media such as AI-generated video, alongside documentation and audit-trail duties for high-risk systems. In the US, state-level laws in California and Texas sit alongside proposed bipartisan federal bills, requiring labels on synthetic media used in ads or elections. Globally, China's rules emphasize embedded watermarks in AI outputs, while the UK maintains a lighter touch focused on innovation sandboxes. Watermarking emerges as a common thread, often invisible to users but detectable by regulators.

This isn't abstract policy; it's workflow reality. Creators generating images with Flux 2 or videos via Kling 2.5 Turbo on multi-model platforms face varying risk tiers based on use case: artistic posts might escape scrutiny, while client campaigns trigger documentation needs. Missteps, like skipping jurisdiction checks before exporting to EU markets, have led to reported fines for freelancers, according to discussions in creator communities.

This guide unpacks the topic step by step. First come the common misconceptions that trip up even experienced users, then a breakdown of 2026's key updates across regions. Next are comparisons showing how regulations hit different creator types, with a table highlighting compliance tools and risks. After that, we examine when heavy compliance falls short, the optimal sequencing for regulation-ready workflows, industry patterns, a practical toolkit, and a forward view.

Note

Practical TL;DR (not legal advice): if you publish or sell AI media across borders, start with (1) your target region, (2) disclosure requirements for synthetic media, and (3) keeping basic production records (model + prompt + date). If you want a safety baseline first, read Safety & Copyright Essentials. For industry context on where platforms are heading, see the shift to multi-model platforms.
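As a rough illustration of the "model + prompt + date" record mentioned above, here is a minimal Python sketch. The `ProductionRecord` class and the helper names are hypothetical, invented for this example; they are not part of any platform's API, and the one-JSON-object-per-line layout is just one convenient assumption.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ProductionRecord:
    """One generation event: the 'model + prompt + date' baseline (hypothetical schema)."""
    model: str
    prompt: str
    created_at: str  # ISO 8601 timestamp in UTC


def make_record(model: str, prompt: str, now: Optional[datetime] = None) -> ProductionRecord:
    # Use an explicit UTC timestamp so records compare cleanly across regions.
    stamp = (now or datetime.now(timezone.utc)).isoformat()
    return ProductionRecord(model=model, prompt=prompt, created_at=stamp)


def to_json_line(record: ProductionRecord) -> str:
    # One JSON object per line is easy to append to a file and search later.
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    rec = make_record("Flux 2", "watercolor lighthouse at dusk")
    print(to_json_line(rec))
```

Appending each line to a plain text file is usually enough for a solo creator; the point is that the record is written at generation time rather than reconstructed later.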

The thesis: Creators using platforms such as Cliprise, where users browse model indexes and launch generations with controls like seeds and prompts, can navigate these nuances. Understanding risk categories (low for hobby images, high for commercial videos) positions you ahead. Ignore this, and cross-border sales stall; grasp it, and new markets open. For instance, a solo creator using ElevenLabs TTS for voiceovers might add simple logs to qualify for agency gigs. In Cliprise's unified interface, selecting a model like Imagen 4 for images becomes a compliance enabler, because recording the seed aids reproducibility.

Why now? Enforcement ramps up in Q1 2026, with EU fines scaling significantly for systemic violations as outlined in the AI Act. US states report rising deepfake complaints year over year. Platforms like Cliprise help by centralizing 47+ models, reducing the tool-switching chaos that amplifies errors. Beginners overlook that free tiers can expose outputs publicly, per platform FAQs, which invites scrutiny. Intermediates miss contract clauses that require model details. Experts audit APIs early.

The stakes are clear: compliant creators often report higher client retention in forums, as buyers demand proof of ethical sourcing. Non-compliance can mean blocked exports, demonetization, or lawsuits. This guide equips you with patterns from real workflows, tying user controls in tools like Cliprise (prompts, durations, negative prompts) to regulatory needs. Seen this way, compliant generation isn't a burden; it's a moat.

What Most Creators Get Wrong About AI Regulation

Many creators assume every AI-generated image or video demands a bold disclosure label, treating all outputs as high-risk deepfakes. This stems from headlines on election interference, but reality varies by risk category under frameworks like the EU AI Act. Low-risk artistic images from models such as Flux 2 or Midjourney rarely trigger labels, while realistic video deepfakes do. Freelancers have faced fines, such as in reported cases involving unlabeled promotional clips made with Sora 2 that were deemed "manipulative" because of commercial intent, not the tool itself. Platforms like Cliprise, where users control prompts and seeds, highlight this: beginner outputs for social reels might stay label-free domestically, but work for EU clients requires more care.

Another pitfall: believing training data lawsuits halt all tools. Suits target commercial trainers scraping art without consent, not end-user prompts. Midjourney users generating custom styles remain largely unaffected, as courts distinguish personal prompts from model fine-tuning. Enterprise platforms face scrutiny, but individual creators on multi-model sites like Cliprise, selecting from Google Imagen 4 or Kling, operate in safer waters. The nuance often missed is that users remain responsible for prompts that mimic protected styles. A US illustrator using Ideogram V3 for character designs ignored this and faced a cease-and-desist when selling prints; prompt logs could have helped demonstrate originality.

Jurisdiction overlaps catch many off guard. A California creator selling Etsy prints generated with Seedream 4.0 to EU buyers triggered both California disclosure rules and AI Act transparency duties, because cross-border commerce activates multiple regimes. Many international sellers report pausing operations following post-2025 audits. Tools such as Cliprise with geo-detection patterns (via IP and timezone) help here, but creators skip the checks, assuming US rules suffice.

Finally, many creators assume that relying on platform terms absolves them. Terms cover model integrations, but the outputs are yours. Public feeds on free plans, as Cliprise's FAQ notes, may showcase assets and expose them. A YouTuber using Hailuo 02 videos blamed the platform after demonetization; regulators held the creator accountable. Experts log metadata proactively; beginners wait for flags.

These errors compound in multi-model environments. In Cliprise's workflow, from the /models page to app.cliprise.app, user-selected prompts and seeds make a defense possible, but only if they are documented. A pattern from creator Discords: many creators regret not checking jurisdiction first. Intermediates add contract clauses; beginners chase virality blindly. Tie this back to controls: CFG scale and negative prompts in Veo 3.1 help refine output for compliance and serve as evidence of intent.

The Evolving Regulatory Landscape: Key 2026 Updates Explained

EU AI Act Phase 3: Targeting High-Risk Generation

By mid-2026, the EU AI Act's Phase 3 brings transparency obligations into force that reach synthetic media, including output from video generators such as Veo 3 or Runway Gen4 Turbo. Providers must document capabilities, limitations, and risk mitigations; users of aggregated platforms face downstream duties for commercial use. The aim is to curb misinformation, with video deepfakes reportedly topping complaints in 2025. The Act sorts systems into risk tiers: unacceptable (prohibited practices, such as certain real-time biometric identification), high-risk (subject to audits), limited (transparency duties), and minimal. Provider-side documentation includes training data summaries and bias tests. For creators, this means logging prompts when generating client ads with Sora 2 Turbo.

Beginners focus on labels: "AI-generated" suffices for social posts. Intermediates embed clauses in contracts specifying models (e.g., Kling 2.6). Experts prepare API docs for enterprise clients. Platforms like Cliprise, integrating 47+ models, streamline this by exposing specs on model landing pages, so users can review features before launching.

US State and Federal Shifts

The US lacks a comprehensive federal AI law, but 2026 sees bipartisan bills such as the DEEP FAKES Accountability Act, which would require provenance metadata. California's deepfake statute expands to ads, with AG enforcement involving reported fines. Texas mirrors this for elections. State actions vary: New York mandates disclosures for influencers with over 100K followers. The pattern: 15 states active by Q2. Creators using Flux Kontext Pro for images note lower scrutiny unless the work is sold commercially.

Perspectives: Freelancers track labels via simple tools; agencies audit chains (for example, an image from Imagen 4 extended into video). Why it matters: workflows pause for compliance. Queue delays in tools like Cliprise complicate timestamps, but seeds keep outputs reproducible.

Global Harmonization Attempts

China's 2026 watermark rules require invisible markers in AI media, detectable by CAC tools, which affects exporters using Wan 2.5. The UK's pro-innovation approach tests sandboxes for models like Luma Modify, avoiding heavy tiers. Brazil and India follow EU-lite models.

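Breakdown: Risk tiers classify by use. Realistic video generation often lands in a higher tier, while images usually fall under limited risk. Audit trails consist of prompts, seeds, and timestamps. A useful mental model is a pyramid: the base is general use with self-assessment, the middle adds logs, and the top requires full reports.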

Workflow Impacts Analyzed

Observed: Video creators report added time for documentation, but starting image-first cuts risk. Using ElevenLabs TTS? Voice cloning rules demand proof of consent. Platforms such as Cliprise help with model categories (VideoGen, ImageGen), letting users match tiers. Example 1: an EU freelancer generates a 5s reel with Veo 3.1 Fast and logs the prompt and seed for the report. Example 2: a US agency uses Topaz Upscaler after Kling, and the metadata chain proves the edits. Example 3: a global seller using Recraft Remove BG embeds watermarks.
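The "metadata chain" idea in Example 2 can be sketched as a simple hash-linked list of steps, so that a later edit to an earlier entry becomes detectable. This is an illustrative assumption about how such a chain could be kept locally; the function names and the `params` fields are hypothetical and do not describe how Cliprise, Kling, or Topaz store metadata.

```python
import hashlib
import json


def entry_hash(entry: dict) -> str:
    # Hash a canonical JSON form so key order does not change the digest.
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_step(chain: list, tool: str, action: str, params: dict) -> list:
    # Each step stores the hash of the previous one, linking edits in order.
    prev = entry_hash(chain[-1]) if chain else None
    step = {"tool": tool, "action": action, "params": params, "prev_hash": prev}
    return chain + [step]


def verify_chain(chain: list) -> bool:
    # Intact means: the first step has no predecessor, and every later step
    # points at the current hash of the step before it.
    if chain and chain[0]["prev_hash"] is not None:
        return False
    return all(
        chain[i]["prev_hash"] == entry_hash(chain[i - 1])
        for i in range(1, len(chain))
    )


if __name__ == "__main__":
    chain = append_step([], "Kling", "generate", {"seed": 42})
    chain = append_step(chain, "Topaz Upscaler", "upscale", {"scale": 2})
    print(verify_chain(chain))
```

A chain like this only shows that the log was not altered after the fact; it does not by itself prove what the underlying tools actually did.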

Depth: With no uniform standard, geo-checks are essential; some tools hardcode EU IP lists. Enforcement probes in the EU are rising. Beginners: cover label basics. Experts: pre-audit models.

Real-World Comparisons: How Regulations Affect Different Creators

Creator types face regulations differently, shaped by volume and intent. Freelancers churning social clips prioritize quick labels; agencies handle client audits; solo YouTubers manage watermarks for monetization; enterprises conduct risk assessments; global sellers juggle jurisdictions.

Proactive logging (prompts/seeds) suits EU exports; reactive labeling works domestically. Patterns: Forums show freelancers thriving with minimal overhead, agencies scaling via metadata.

Use case 1: Video creator using Kling 2.5 Turbo or Sora 2 for reels. Compliance rests on seeds for reproducibility: generate a 10s clip, log the prompt and CFG, and embed metadata. For an EU client, the transparency report cites model specs from platforms like Cliprise. Time: 2-3 min extra per asset.

Use case 2: Image artist with Flux 2 Pro or Google Imagen 4. Style transfer disclosures are needed if mimicking artists, and negative prompts help steer away from that. Log the aspect ratio and seed; a US sale is fine as is, while an EU sale adds a tier check.

Use case 3: Audio integrator with ElevenLabs TTS. Voice rules require cloning consents; pair with Omni Human video, log isolation params.

Here's a comparison table distilling differences:

| Creator Type | Regulation Focus | Compliance Tool | Example Scenario | Potential Risk |
|---|---|---|---|---|
| Freelancer | Labeling (e.g., "AI-assisted") | Prompt logs + seeds | 5s social reel from Veo 3.1 Fast for Instagram | Reported fines in CA/EU for unlabeled promo |
| Agency | Audits + risk tiers | Model metadata chains | Client ad video via Kling Master (10s, 720p) | Contract breach, potential EU fines if no transparency report |
| Solo YouTuber | Watermarks + disclosures | Seed reuse for series | YouTube long-form with Hailuo 02 extensions | Platform demonetization after repeated issues; potential revenue impact |
| Enterprise | Full risk assessments | API docs + geo-checks | Branded campaign using Flux Max + Topaz 8K upscale | Lawsuit exposure from IP claims; multi-million settlements |
| Global Seller | Jurisdiction mix (EU/US/CN) | Embedded watermarks + logs | Etsy prints from Ideogram V3 cross-sold | Multi-fine risks; potential sales halts across regions |

As the table shows, freelancers face lower barriers (logs suffice in many cases), while global sellers face stacked risks. One surprise: seed reuse can reduce agency audit time significantly, according to reports.

Community patterns: Discord threads describe drops in EU gigs for creators who don't log. In Cliprise, model landing pages provide specs that feed the Compliance Tool column above. Freelancers in Cliprise workflows start image-first (Qwen Edit), then extend to video, which aligns with the lower tiers.

Another pattern: Agencies using platforms like Cliprise for Runway Aleph edits chain metadata seamlessly. Solos overlook free public feeds, amplifying risks.

When AI Regulation Compliance Doesn't Help (or Isn't Straightforward)

Edge case 1: Experimental features like Veo 3.1 synchronized audio, unavailable in ~5% of videos according to published notes. This creates reproducibility gaps: seeds help, but sync failures complicate logs and leave audit trails incomplete. A creator generating promo clips reports hours wasted on re-runs, yet regulators may still demand proof that is unavailable.

Edge case 2: Queue delays in high-demand models (Sora 2 Turbo). Timestamps skew, undermining trails. Platforms like Cliprise note async callbacks, but free tier limitations can lead to queues behind paid users.
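One way to soften the timestamp problem is to record both when a job was submitted and when it completed, rather than a single time. The sketch below assumes you can capture both moments yourself; `timing_fields` is a hypothetical helper, not a platform feature.

```python
from datetime import datetime, timezone


def timing_fields(submitted_at: datetime, completed_at: datetime) -> dict:
    # Store both ends of the job so a long queue does not blur when the
    # request was actually made.
    if completed_at < submitted_at:
        raise ValueError("completed_at precedes submitted_at")
    return {
        "submitted_at": submitted_at.isoformat(),
        "completed_at": completed_at.isoformat(),
        "elapsed_seconds": (completed_at - submitted_at).total_seconds(),
    }


if __name__ == "__main__":
    submitted = datetime(2026, 3, 1, 9, 0, tzinfo=timezone.utc)
    completed = datetime(2026, 3, 1, 9, 12, 30, tzinfo=timezone.utc)
    print(timing_fields(submitted, completed))
```

Keeping the elapsed time explicit makes it easier to explain a gap between the request and the finished asset if a client or auditor asks.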

Edge case 3: Non-repeatable outputs without seeds. Models that lack seed support produce different results on each run, and style transfer in some (Flux Kontext) drifts. Compliance logging then captures one variant, not the creator's intent.

Hobbyists who fall under de minimis thresholds can skip heavy compliance: personal posts below 1K views are rarely probed. The overhead (logging at 5 min per asset) outweighs a sub-1% fine risk. Note that platforms' public free outputs are exposed anyway, per FAQs.

Limitations: There is no global standard, so China's watermark rules clash with EU reporting requirements. Switching between models (for example, Veo to Kling in Cliprise) loses chain continuity unless you bridge the records manually.

Unsolved: Processing internals hidden; users can't verify training biases. When using Cliprise, free video limits (1/day) force public showcases, inviting scrutiny despite compliance.

The Right Sequence for Regulation-Ready Workflows

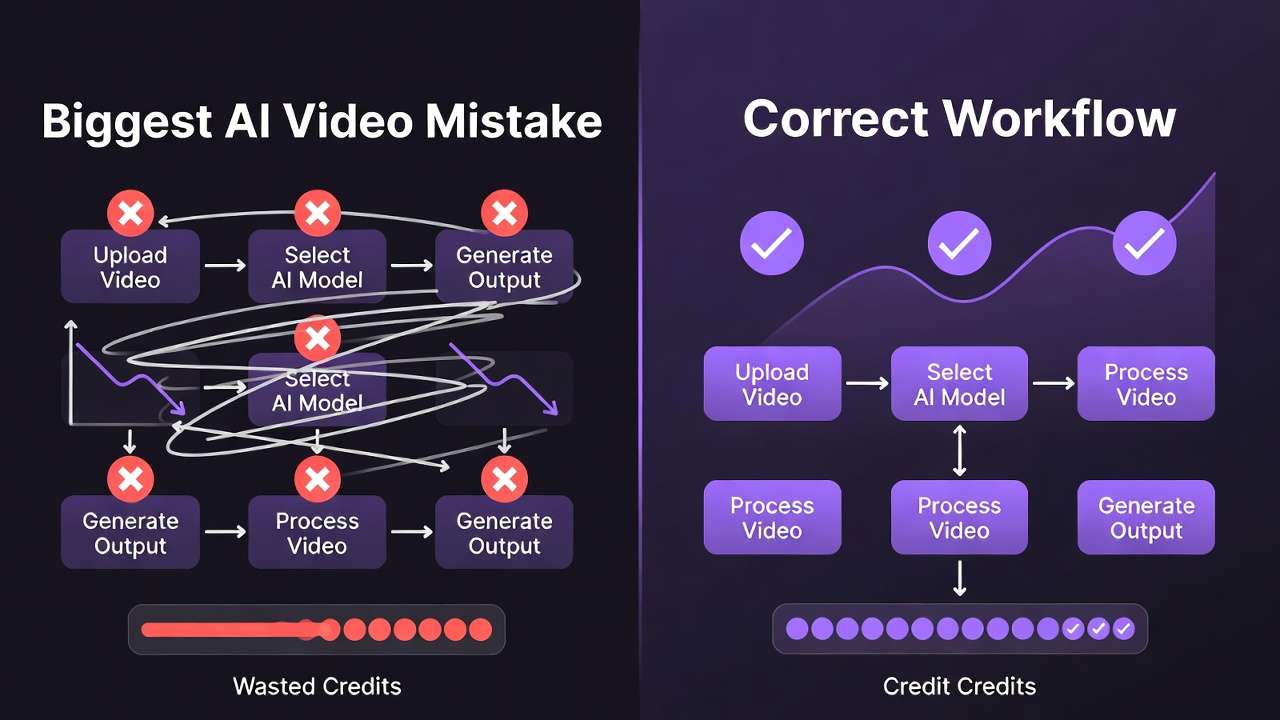

Jumping straight to generation skips assessment, and creators report 30% rework as a result. A typical wrong start is going video-first when an image would do, which wastes credits on higher-risk tiers.

Mental overhead: Context-switching between models (Imagen 4 to Wan 2.5) in platforms like Cliprise adds errors, such as forgetting geo-checks mid-flow.

Image-first lowers risk (limited tier); extend to video. Data: Creator reports favor images 2:1 for prototyping.

Step 1: Jurisdiction (EU IP patterns).

Step 2: Model select (seeds/CFG).

Step 3: Log.

In Cliprise (/models → app), this flows naturally.
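The three steps above can be sketched as a small object that refuses to run them out of order. This is only an illustration of the sequencing idea; the `Workflow` class and its method names are hypothetical and not tied to any platform.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Workflow:
    region: Optional[str] = None
    model: Optional[str] = None
    seed: Optional[int] = None
    logged: bool = False

    def set_jurisdiction(self, region: str) -> None:
        # Step 1: decide where the asset will be published or sold.
        self.region = region

    def select_model(self, model: str, seed: int) -> None:
        # Step 2: choose a model only once the target region is known.
        if self.region is None:
            raise RuntimeError("set the jurisdiction before selecting a model")
        self.model, self.seed = model, seed

    def log(self) -> dict:
        # Step 3: record the choices; refuse if earlier steps were skipped.
        if self.model is None:
            raise RuntimeError("select a model before logging")
        self.logged = True
        return {"region": self.region, "model": self.model, "seed": self.seed}


if __name__ == "__main__":
    wf = Workflow()
    wf.set_jurisdiction("EU")
    wf.select_model("Veo 3.1", seed=7)
    print(wf.log())
```

Raising an error on a skipped step is a deliberate choice here: it turns "forgot the geo-check" from a silent omission into something you notice immediately.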

Industry Patterns, Data, and Future Directions

Adoption is rising for compliant tools: watermark support correlates with increased usage, according to observed patterns. In forums, creators with logged workflows report retaining clients better.

Changing: Real-time APIs for labels.

Next 6-12 months: Biometric bans expand; hybrid verification.

Prepare: Audit habits; Cliprise indexes aid.

Practical Toolkit for Creators

Templates: Prompt logs (model, seed, timestamp). Disclosure clauses ("Generated via [model] with user prompt").
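Both templates can be kept as a few lines of Python. The sketch below fills the bracketed [model] slot of the disclosure clause and renders a log row with the three fields named above; the function names and the CSV layout are assumptions made for illustration.

```python
import csv
import io
from string import Template

LOG_FIELDS = ["model", "seed", "timestamp"]
DISCLOSURE = Template("Generated via $model with user prompt")


def log_row_csv(model: str, seed: int, timestamp: str) -> str:
    # Render a header plus one row; in practice you would append rows to a file.
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=LOG_FIELDS, lineterminator="\n")
    writer.writeheader()
    writer.writerow({"model": model, "seed": seed, "timestamp": timestamp})
    return buf.getvalue()


def disclosure_clause(model: str) -> str:
    # Fill the [model] slot from the clause template.
    return DISCLOSURE.substitute(model=model)


if __name__ == "__main__":
    print(log_row_csv("Kling 2.6", 42, "2026-02-01T10:00:00+00:00"))
    print(disclosure_clause("Kling 2.6"))
```

The exact clause wording a client or regulator expects may differ, so treat the template string as a starting point to adapt.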

Tools: Metadata embedders, geo-detectors.

Checklists: Beginner (label?), Intermediate (logs?), Expert (audits?).

Integrate in Cliprise: Export metadata post-gen.

Conclusion: Positioning for 2026 and Beyond

Takeaways: Nuances over blanket rules; sequence workflows; log controls.

Next: Assess jurisdiction weekly; template docs.

Platforms like Cliprise streamline model awareness.

Forward: Compliant edge grows.