Sora 2 is the most talked-about AI video model of 2026 - and for good reason. OpenAI's second-generation video model delivers cinematic quality, up to 60 seconds of consistent character-anchored video, a Storyboard mode that lets you sequence scenes like a director, and native audio generation that makes it a near-complete AI video production tool.

But "talked-about" and "well-documented" are different things. Most content about Sora 2 focuses on the output showcase - impressive clips, viral demos. Very little explains the actual workflow: how to access it, how Storyboard mode works, how to maintain character consistency across scenes, and which prompting patterns produce professional output.

This guide covers all of it: access paths and pricing, Storyboard mode step by step, character reference workflows, prompting frameworks, native audio, and the Remix and Blend features. By the end, you'll have a complete working knowledge of Sora 2 as a production tool - not just an understanding that it produces impressive output.

What Is Sora 2?

Sora 2 is OpenAI's second-generation text-to-video model, released December 2025. It represents a significant advancement over the original Sora on every meaningful production dimension.

Key capabilities:

- Video length: Up to 60 seconds (Sora Pro) - 10 seconds on ChatGPT Plus

- Resolution: 720p (ChatGPT Plus), 1080p (Sora Pro), 4K in staged rollout

- Storyboard mode: Multi-scene sequencing with consistency across shots

- Character consistency: Upload a reference image to anchor subject appearance

- Native audio: Ambient sound, music, and lip-sync generated alongside video

- Remix: Modify an existing generation by rewriting part of the prompt

- Blend: Merge elements from two different generations

What changed from Sora 1:

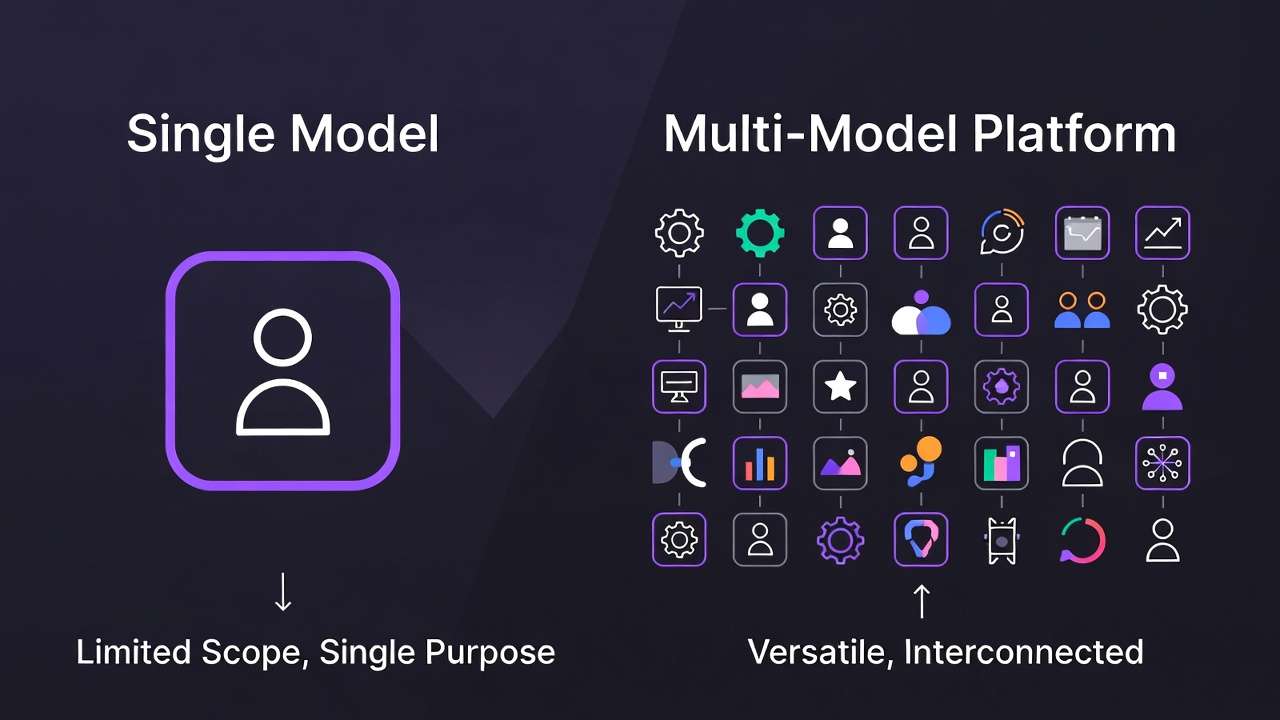

The original Sora was a research demonstration. Sora 2 is a production tool. The jump covers longer generation length, proper character consistency via reference images, Storyboard mode for multi-scene production, native audio, and substantially improved scene understanding and physical accuracy. For working creators, that is a real step up from the demo videos that circulated in 2024.

How to Access Sora 2

ChatGPT Plus ($20/mo)

The entry-level access path. ChatGPT Plus includes Sora 2 generation with:

- 720p resolution

- Up to 10-second generation length

- Visible watermark on exports

- Rate-limited generation volume (limited monthly renders)

- Access to Storyboard mode

Suitable for: learning the model, testing prompt behavior, lower-volume personal use. Not suitable for commercial production deliverables due to watermark and resolution cap.

Regional availability: US, Canada, Japan, South Korea natively. Access from other regions requires workarounds or platform aggregators.

Sora Pro ($200/mo - ChatGPT Pro)

Full production access via ChatGPT Pro subscription:

- 1080p resolution (4K in staged rollout)

- Up to 60-second generation length

- No watermark on exports

- Higher generation volume

- Priority queue access

- Full access to all Sora 2 features including Remix and Blend

Suitable for: professional production use, commercial deliverables, high-volume workflows.

Via Cliprise (Most Accessible Path)

The most cost-efficient access path for most creators. Cliprise integrates Sora 2 via API - same underlying model, same output quality - under the unified Cliprise credit system alongside Kling 3.0, Veo 3.1, Seedance 2.0, and 44 other models. For help choosing a model, see the Sora 2 vs Runway Gen-4 comparison.

Key advantages over direct access:

- Starts at $9.99/mo (vs $200/mo direct for no-watermark access)

- No regional restrictions

- One credit system shared with all models

- Watermark-free output on paid plans

Access: cliprise.app/pricing

For international creators or anyone who uses Sora 2 as one tool in a multi-model workflow - which in 2026 is most professional creators - Cliprise is the correct access architecture. For free and low-cost options, see our guide on how to use Sora 2 for free.

Storyboard Mode: How It Actually Works

Storyboard mode is Sora 2's most powerful and most underutilized feature. It allows you to define a multi-scene video sequence - specifying different prompts for different moments - and generate a cohesive, visually consistent result across all scenes.

What Storyboard Mode Is

Think of it as a timeline editor for AI prompts. Instead of generating one continuous 60-second clip from a single prompt, you define multiple "beats" - moments in the video with distinct prompts - and Sora 2 generates a video that transitions between them while maintaining visual consistency.

This solves the most fundamental challenge of AI video for narrative work: how do you create a sequence that changes (different locations, different actions, different camera angles) while keeping the same character looking the same and the visual style consistent?

Step-by-Step: Using Storyboard Mode

Step 1: Enable Storyboard Mode

In the Sora 2 interface, select "Storyboard" from the generation mode options. The interface changes to show a timeline with multiple prompt slots instead of a single text field.

Step 2: Define Your Sequence Structure

Decide how many distinct moments your video needs. For a 60-second video:

- 2-3 beats work well for emotional storytelling

- 4-5 beats work for product sequences or action montages

- With more than 6 beats, coherence becomes difficult to maintain
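The beat counts above come down to simple arithmetic: total duration divided by number of beats. A minimal sketch in Python, assuming an even split; the 3-second floor per beat is an illustrative threshold chosen for this example, not a documented Sora 2 limit, and both function names are hypothetical.

```python
def seconds_per_beat(total_seconds: float, beats: int) -> float:
    """Average time each beat gets if the video is split evenly."""
    if beats < 1:
        raise ValueError("a storyboard needs at least one beat")
    return total_seconds / beats


def is_reasonable_plan(total_seconds: float, beats: int, min_seconds: float = 3.0) -> bool:
    """True when every beat gets at least `min_seconds` (assumed threshold)."""
    return seconds_per_beat(total_seconds, beats) >= min_seconds


print(seconds_per_beat(60, 5))    # 12.0
print(seconds_per_beat(10, 5))    # 2.0
print(is_reasonable_plan(10, 5))  # False
```

Running the numbers before writing prompts makes it obvious when a plan leaves too little time per beat for meaningful motion.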

Step 3: Write Each Beat Prompt

For each beat, write the prompt as if it's a standalone generation - describing subject, action, camera, and environment. The model handles transitions between beats; you handle what each beat shows.

Example sequence (brand story, 20 seconds):

Beat 1 (0-5s):

Professional woman in her 40s, dark blazer, confident expression.

Sitting at a desk reviewing documents in a quiet, well-lit office.

Static medium shot. Early morning, warm directional light from window.

Beat 2 (5-12s):

Same woman now standing at a floor-to-ceiling window overlooking a city.

Looking out thoughtfully. Slow camera push toward her from behind.

Late afternoon, golden light, city bokeh in background.

Beat 3 (12-20s):

Same woman in a meeting room, presenting to a small group.

Engaged, gesturing toward a screen. Camera is wide, capturing the room.

Same warm office lighting. Close on her face for final 2 seconds.

Step 4: Add Global Consistency Instructions

Storyboard mode includes a "Global Instructions" field that applies to all beats. Use it for:

- Character anchoring: "Subject is [physical description] throughout. Maintain consistent appearance."

- Visual style: "Photorealistic, cinematic color grade throughout. Warm tones, shallow depth of field."

- Technical specs: "All shots: 1080p, widescreen 16:9, no watermarks."
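If you draft storyboards outside the interface, it can help to keep the beats and the global instructions as structured data and check the timeline before pasting anything in. The sketch below is a local planning aid only: `Beat`, `validate_timeline`, and `render_storyboard` are hypothetical names, and nothing here calls Sora 2.

```python
from dataclasses import dataclass


@dataclass
class Beat:
    start: int   # seconds from the start of the video
    end: int     # seconds from the start of the video
    prompt: str


def validate_timeline(beats: list[Beat]) -> None:
    """Raise ValueError if beats overlap, leave gaps, or have no duration."""
    expected_start = 0
    for i, beat in enumerate(beats, start=1):
        if beat.start != expected_start:
            raise ValueError(f"Beat {i} starts at {beat.start}s, expected {expected_start}s")
        if beat.end <= beat.start:
            raise ValueError(f"Beat {i} has no duration")
        expected_start = beat.end


def render_storyboard(global_instructions: str, beats: list[Beat]) -> str:
    """Produce plain text to copy into the Global Instructions field and beat slots."""
    validate_timeline(beats)
    lines = [f"Global Instructions: {global_instructions}", ""]
    for i, beat in enumerate(beats, start=1):
        lines.append(f"Beat {i} ({beat.start}-{beat.end}s): {beat.prompt}")
    return "\n".join(lines)


beats = [
    Beat(0, 5, "Woman at a desk reviewing documents. Static medium shot."),
    Beat(5, 12, "Same woman at a floor-to-ceiling window. Slow camera push."),
    Beat(12, 20, "Same woman presenting in a meeting room. Wide shot."),
]
print(render_storyboard("Photorealistic, warm tones, shallow depth of field.", beats))
```

Keeping the global text in one place also makes it easy to reuse the same character and style anchors across projects.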

Step 5: Generate and Review

Sora 2 generates the complete sequence as a single continuous video. Review:

- Does the character look consistent across beats?

- Are the transitions between beats smooth?

- Does the overall visual style hold throughout?

Step 6: Iterate by Beat

If one beat is off, you can regenerate that specific beat without redoing the full sequence. Edit the prompt for the problematic beat and regenerate it. This is one of Storyboard mode's most useful production features.

Common Storyboard Mistakes

Too many scene changes in short duration: A 10-second video with 5 beats is 2 seconds per beat - not enough for any meaningful motion. Match your beat count to the video length.

Inconsistent character description across beats: If Beat 1 says "dark blazer" and Beat 3 says nothing about clothing, the model may drift. Keep character description consistent across all beats or use Global Instructions to anchor it.

Ignoring Global Instructions: The Global Instructions field exists specifically to solve cross-beat consistency. Use it for character, visual style, and technical specs - don't leave it empty.
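The character-drift mistake is easy to catch with a quick text check before generating. The sketch below is a hypothetical helper (`beats_missing_anchor` is not part of any Sora 2 tooling): it reports which beats mention an anchor phrase neither in their own prompt nor in the Global Instructions text. It only does simple substring matching, so treat it as a reminder, not a guarantee.

```python
def beats_missing_anchor(
    beat_prompts: list[str],
    anchors: list[str],
    global_instructions: str = "",
) -> dict[int, list[str]]:
    """Map beat number -> anchor phrases found in neither that beat nor the global text."""
    global_text = global_instructions.lower()
    missing: dict[int, list[str]] = {}
    for number, prompt in enumerate(beat_prompts, start=1):
        text = prompt.lower()
        absent = [a for a in anchors if a.lower() not in text and a.lower() not in global_text]
        if absent:
            missing[number] = absent
    return missing


prompts = [
    "Professional woman in her 40s, dark blazer, sitting at a desk.",
    "Same woman standing at a window, dark blazer, looking out.",
    "Same woman in a meeting room, presenting to a small group.",
]
print(beats_missing_anchor(prompts, ["dark blazer"]))
# {3: ['dark blazer']}
print(beats_missing_anchor(prompts, ["dark blazer"], "Subject wears a dark blazer throughout."))
# {}
```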

Character Consistency: How to Keep Your Subject Looking the Same

Character consistency is one of Sora 2's genuine technical strengths. Used correctly, it allows you to anchor a specific person's appearance across multiple generations - building a visual library of consistent shots featuring the same subject.

Uploading a Character Reference

In the Sora 2 interface, locate the reference image upload option (available in both standard and Storyboard modes). Upload a high-quality photo of your subject:

- Minimum 1080p resolution

- Clean background preferred (the model reads the subject, not the background)

- Neutral expression for versatility (the model generates expressions from your prompt, anchored to the reference face)

- Multiple angles if available - front-facing and 3/4 angle give the model more visual anchors

The uploaded image becomes the face/appearance anchor for the generation. Your prompt describes what the character does and where they are; the reference defines who they look like. For more control, see the image reference upload guide.

Best Practices for Character References

Use consistent references across a project. If you're producing a multi-clip series featuring the same subject, use the same reference image for all generations. Even small differences in the reference photo (different lighting, slight expression change) can introduce drift.

Describe the reference explicitly in the prompt. Don't rely solely on the uploaded image - reinforce it with text. "The subject from the reference image - same facial features, same [hair description], same [any distinctive features]."

Test character anchoring before full production. Generate 3-4 test clips across different scene contexts (indoor, outdoor, close-up, wide) with your reference before producing final deliverables. Identify where the character drifts and adjust prompts to compensate.
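One way to plan those test clips is to cross a few scene contexts with a few framings, so every combination gets one prompt. A minimal sketch, assuming two settings and two framings (giving four test prompts); `build_test_prompts` and the wording of `REFERENCE_LINE` are illustrative, not required syntax.

```python
from itertools import product

# Text reinforcement for the uploaded reference image (wording is an example).
REFERENCE_LINE = "The subject from the reference image - same facial features."

settings = ["indoor office", "outdoor street"]
framings = ["close-up", "wide shot"]


def build_test_prompts(settings: list[str], framings: list[str]) -> list[str]:
    """One prompt per (setting, framing) pair, each repeating the reference line."""
    return [
        f"{REFERENCE_LINE} {framing.capitalize()}, {setting}."
        for setting, framing in product(settings, framings)
    ]


for prompt in build_test_prompts(settings, framings):
    print(prompt)
```

Generating each of these once with the same reference image shows quickly which contexts cause drift.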

Limitations

Character references work best for human subjects. Non-human characters, abstract subjects, and highly stylized content have more variable consistency results. For brand mascots or custom characters without a photographic reference, consistency requires more prompt precision and more generation iterations.

Prompting Best Practices for Sora 2

Sora 2 handles complex, detailed prompts more reliably than earlier-generation models. More specificity consistently produces better results. For a full prompt library, see Sora 2 Prompts.

The Core Prompt Structure

Subject → Action → Camera → Environment → Style

Subject: Who or what is in the frame. Physical description, not just category.

- Not: "A chef"

- Yes: "A chef in his 50s, salt-and-pepper beard, white apron, focused expression"

Action: What is happening. Describe motion, not state.

- Not: "Cooking"

- Yes: "Precisely slicing vegetables with practiced speed, slight lean forward"

Camera: How the shot is framed and whether/how it moves.

- Not: (omitted)

- Yes: "Close-up on hands and knife, static - then slow pull back to reveal face at medium distance"

Environment: Where the scene is. Specific details, not just location type.

- Not: "A kitchen"

- Yes: "Professional restaurant kitchen, stainless surfaces, warm task lighting above prep station, slight steam from pots in background"

Style: The aesthetic treatment.

- Not: "Cinematic"

- Yes: "Documentary-style, natural handheld camera energy, warm color grade, slight film grain"
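The five-part structure can be kept as a small template so no part gets skipped. This is a sketch only: `ShotPrompt` is a hypothetical helper that builds plain prompt text in the Subject, Action, Camera, Environment, Style order, and Sora 2 does not require this exact format.

```python
from dataclasses import dataclass


@dataclass
class ShotPrompt:
    subject: str
    action: str
    camera: str
    environment: str
    style: str

    def render(self) -> str:
        """Join the five parts in order, one sentence per part."""
        names = ("subject", "action", "camera", "environment", "style")
        parts = [self.subject, self.action, self.camera, self.environment, self.style]
        empty = [name for name, value in zip(names, parts) if not value.strip()]
        if empty:
            raise ValueError(f"missing prompt parts: {', '.join(empty)}")
        # Normalize trailing periods so each part ends with exactly one.
        return " ".join(part.strip().rstrip(".") + "." for part in parts)


chef = ShotPrompt(
    subject="A chef in his 50s, salt-and-pepper beard, white apron, focused expression",
    action="Precisely slicing vegetables with practiced speed, slight lean forward",
    camera="Close-up on hands and knife, static - then slow pull back to reveal face",
    environment="Professional restaurant kitchen, stainless surfaces, warm task lighting",
    style="Documentary-style, natural handheld camera energy, warm color grade",
)
print(chef.render())
```

Raising an error on an empty part is a deliberate choice here: an omitted camera line is the most common gap in the "Not" examples above.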

Camera Language That Works

Sora 2 responds accurately to industry-standard camera terminology:

- Dolly push-in / dolly pull-out - camera moves toward or away from subject

- Pan left/right - camera rotates horizontally

- Tilt up/down - camera rotates vertically

- Tracking shot - camera follows subject movement

- Arc / orbit - camera circles the subject

- Crane up / crane down - camera rises or descends

- Handheld / Steadicam - motion style, not direction

Use these terms precisely. "Slow dolly push-in" produces a distinct and accurate camera behavior. "Camera moves closer" is interpreted less precisely.
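A quick way to audit a batch of prompts is to check whether each one uses at least one term from the list above. The sketch below is a hypothetical local check: `CAMERA_TERMS` simply transcribes that list, and the whole-word matching is a design choice so that "arc" does not match inside words like "march".

```python
import re

# Terms transcribed from the camera vocabulary list above.
CAMERA_TERMS = [
    "dolly push-in", "dolly pull-out", "pan left", "pan right",
    "tilt up", "tilt down", "tracking shot", "arc", "orbit",
    "crane up", "crane down", "handheld", "steadicam",
]


def camera_terms_in(prompt: str) -> list[str]:
    """Return the listed camera terms that appear in the prompt as whole words."""
    text = prompt.lower()
    return [t for t in CAMERA_TERMS if re.search(rf"\b{re.escape(t)}\b", text)]


print(camera_terms_in("Camera moves closer to the chef"))
# []
print(camera_terms_in("Slow dolly push-in on the chef, handheld"))
# ['dolly push-in', 'handheld']
```

An empty result is a prompt worth revisiting before spending a generation on it.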

Lighting Descriptions That Produce Accurate Results

- "Golden hour backlight" - warm, directional, subject slightly against light

- "Soft box studio" - even, controlled, shadowless

- "Rembrandt lighting" - one-side illumination with characteristic triangle shadow

- "High-key commercial" - bright, even, minimal shadows

- "Low-key dramatic" - high contrast, deep shadows

- "Practical lighting" - motivated by in-scene light sources

Named lighting setups consistently outperform descriptive phrases.

Audio Generation in Sora 2

Sora 2 generates audio natively as part of the video generation process - ambient sound, music, and speech/lip-sync are produced alongside the visual output rather than added in post.

How to Enable and Configure Audio

In the generation settings, audio generation is toggled separately from video. Options:

Auto audio: The model generates audio that fits the visual content and any audio-relevant keywords in the prompt. A beach scene generates ocean sounds. An office scene generates keyboard clicks and ambient office hum. Music generation is influenced by mood keywords: "melancholy," "energetic," "tense."

Prompted audio: Include audio description in your prompt for more directional control:

Background: soft acoustic guitar, low volume, warm and contemplative tone.

Ambient: coffee shop background noise, distant conversation, occasional cup sounds.

No voiceover.

Lip-sync: If characters in the video are speaking, describe the dialogue direction:

Character speaks to camera: a few sentences, encouraging tone.

Warm, direct eye contact with camera. No specific dialogue - natural speaking movement.
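If you reuse the same audio direction across several clips, a small helper can append it to each visual prompt consistently. This is a sketch under assumptions: `with_audio` is a hypothetical function, and the "Background:" / "Ambient:" labels simply mirror the prompted-audio example above, not a required syntax.

```python
def with_audio(
    visual_prompt: str,
    background: str = "",
    ambient: str = "",
    voiceover: bool = False,
) -> str:
    """Append audio direction lines to a visual prompt."""
    lines = [visual_prompt.strip()]
    if background:
        lines.append(f"Background: {background}")
    if ambient:
        lines.append(f"Ambient: {ambient}")
    if not voiceover:
        # Say so explicitly, as in the prompted-audio example.
        lines.append("No voiceover.")
    return "\n".join(lines)


print(with_audio(
    "Woman reading at a corner table in a small cafe. Static medium shot.",
    background="soft acoustic guitar, low volume, warm and contemplative tone.",
    ambient="coffee shop background noise, distant conversation, occasional cup sounds.",
))
```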

Limitations

Sora 2's lip-sync is competent for general-purpose use but not frame-precise. For content requiring exact speech-to-lip synchronization (pre-recorded voiceover matched to lip movement), Seedance 2.0's @Audio1 reference system provides more reliable sync control.

Music generation is mood-directional, not genre-specific. "Jazz" will produce jazz-adjacent audio; matching a specific BPM or reproducing a specific musical style precisely is not reliable.

Remix and Blend

Remix

Remix allows you to take a completed generation and modify it by rewriting part of the prompt - keeping the visual foundation while changing specific elements.

How it works:

- Generate a video

- Select "Remix" on the output

- Rewrite the part of the prompt you want to change

- The model regenerates with the change applied while preserving the original's visual continuity

Best uses for Remix:

- Changing the time of day in an established scene

- Modifying the character's action while keeping the environment consistent

- Adjusting the camera motion after confirming the subject is correct

Blend

Blend merges two separate generations into a single output - combining visual elements, transitions, or stylistic qualities from two different clips.

How it works:

- Generate two separate videos

- Select both and choose "Blend"

- The model merges them, with controls for how much each contributes to the blend

Best uses for Blend:

- Combining a cinematic-style generation with a product-focused generation to produce a commercial hybrid

- Merging two character generations to create a composite appearance

- Transitioning between two scene types with a blended midpoint

Frequently Asked Questions

What is Sora 2? Sora 2 is OpenAI's second-generation AI video model, released December 2025. It generates video up to 60 seconds long (on Sora Pro) with character-consistent subjects, native audio, and a Storyboard mode for multi-scene production. It's widely considered the benchmark for cinematic narrative AI video in 2026. See the best AI video generator 2026 comparison.

How much does Sora 2 cost? ChatGPT Plus ($20/mo) includes rate-limited Sora 2 access at 720p with watermarks. ChatGPT Pro ($200/mo) includes full production access at 1080p, no watermarks. Via platform aggregators like Cliprise, Sora 2 access is available from $9.99/mo - same model, same output quality.

How do I use Sora 2 for free? Free access is available in limited form on the ChatGPT free tier in some regions - very restricted (low resolution, short duration, watermarked). For production use, free access is not viable. See our complete guide on using Sora 2 for free.

What is the maximum video length on Sora 2? 60 seconds on Sora Pro (ChatGPT Pro, $200/mo). 10 seconds on ChatGPT Plus ($20/mo). For longer sequences, Storyboard mode allows you to chain multiple beats into a longer continuous video.

How do I maintain character consistency across generations? Upload a character reference image in the Sora 2 interface. This anchors the subject's appearance across the generation. Reinforce with explicit character description in every prompt, and use Storyboard mode's Global Instructions field for cross-beat consistency.

What is Storyboard mode in Sora 2? Storyboard mode allows you to define multiple distinct "beats" - moments in the video with different prompts - and generate a cohesive sequence that transitions between them with maintained visual consistency. It's the closest thing AI video generation currently has to actual scene direction.

Where can I access Sora 2? Via ChatGPT at openai.com (ChatGPT Plus or Pro subscription), or via Cliprise as part of a multi-model subscription starting at $9.99/mo. Cliprise provides the same model access without regional restrictions and at a lower monthly cost.

Conclusion

Sora 2 is not just the most visually impressive AI video model of 2026 - it's a production tool when used correctly. Storyboard mode enables genuine narrative sequencing. Character reference anchoring enables consistent subjects across a project. The prompting structure and camera language vocabulary extract the model's full capability rather than its average output.

The access path matters. At $200/mo direct, Sora 2 is expensive for the majority of creators. At $9.99/mo via Cliprise alongside Kling 3.0, Veo 3.1, and 44 other models, it's the right model for the right brief at the right price.

Start using Sora 2 on Cliprise → cliprise.app/pricing
