Introduction

Part of the AI content creation series. For the complete guide, see AI Content Creation: Complete Guide 2026.

Manual AI content generation often turns high-volume creators into repetitive task handlers who spend considerable time on logins, prompt adjustments, and asset retrieval just to produce batches of images or videos. In areas like e-commerce product visuals or social media content, this friction compounds: processing delays or mismatched model settings can disrupt planned outputs.

This challenge grows as content needs increase. Agencies managing client feedback, freelancers producing thumbnails, and brands preparing campaigns all encounter the same obstacle: workflows designed for individual use rather than streamlined operations. Platforms like Cliprise help by bringing various AI models together behind a single access point, but the key advance comes when users shift from web interfaces to programmatic integrations where available. These allow automated model choice, task submission, and result handling without manual steps.

What follows is a detailed guide based on common industry practices, exploring how such integrations can turn occasional generations into consistent workflows. Readers will learn about frequent challenges in initial configurations, essential ideas like asynchronous processing that influence efficiency, and structured approaches that improve reliability. Aggregators such as Cliprise provide access to models including Veo variants, Sora versions, and Flux options through higher-tier features where applicable, but effective use depends on grasping request formats, common issues, and process management.

Why focus on this area? The expansion of AI models (over 47 options from providers like Google DeepMind, OpenAI, and Kuaishou, the maker of Kling) can lead to decision overload without automation. Switching tools manually disrupts concentration and increases time spent on adjustments. Insights from creator discussions indicate that automated approaches often streamline iteration, especially for video continuations where keeping prompts aligned across models is difficult. Without automation, large-scale work remains hands-on; with it, workflows adapt to growing needs.

Picture a mid-sized agency preparing dozens of product videos each week. Without integration, each one involves web access, model review, and progress monitoring, which takes significant time every day. With a scripted approach, a routine can handle submissions, check statuses, and gather results, freeing time for creative adjustments. Solutions like Cliprise support this by organizing endpoints for image creation, video production, and modifications where offered, though general best practices apply: emphasize asynchronous management and model-tailored settings from the outset.

This guide breaks down preparation steps, common hurdles, and strategies for expansion, informed by patterns in Python and Node.js setups. It differentiates solo creator flows from team operations, points out scenarios where integrations fall short, and examines trends in combining multiple models. By the end, readers gain a framework for robust configurations, applicable to ecosystems like Cliprise or comparable services. The importance lies in handling peak processing demands, where unprepared setups risk delays; well-prepared ones keep operations smooth.

Beyond fundamentals, the discussion covers resource management, processing variations, and contingency plans seen in diverse provider environments. For example, when platforms like Cliprise combine access to Veo 3.1, Kling 2.5, and ElevenLabs TTS under a shared system, integrations need to account for differing resource use and processing times, a detail often missed in manual flows. This draws from shared experiences where unmanaged waits stretched from short periods to much longer ones. Understanding these differences enables group processing for uses like presentation materials or option testing, where accuracy across multiple models counts.

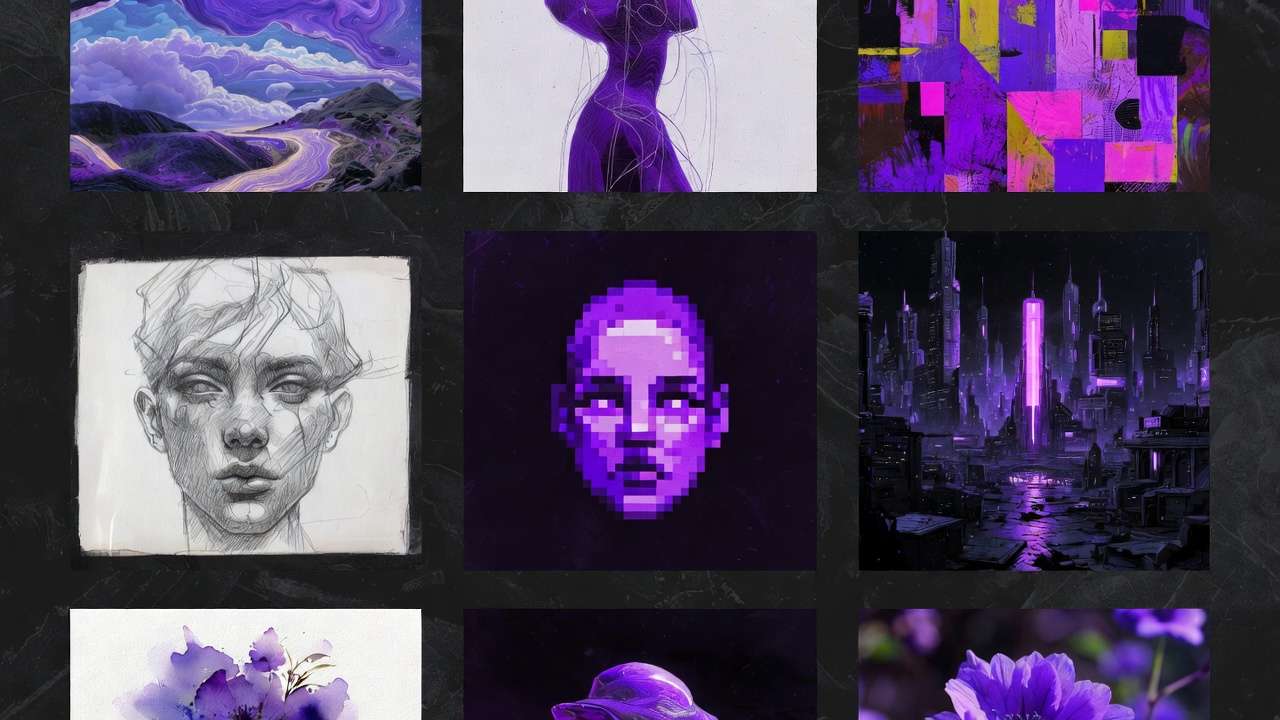

Expanding further, consider the broader context: content creators across industries, from marketing teams generating campaign assets to educators producing visual aids, face escalating demands for diverse media types. Images for static posts, videos for dynamic engagement, and audio for voiceovers all benefit from models specialized by providers such as Runway, Luma, or Topaz. Aggregators streamline discovery, as seen on model indexes like those on Cliprise with 26 categorized pages detailing specifications and applications. Yet manual navigation through these options fragments productivity, particularly when testing prompts across Imagen, Midjourney, or Ideogram variants. Integration shifts this to scripted selection, allowing parameters like aspect ratios or seeds to propagate consistently.

Insights from industry observations highlight how such shifts reduce context-switching costs. A freelancer iterating on thumbnails might refine a Flux prompt once, then apply it to batches; an agency could chain image prototypes to video extensions using Sora or Hailuo. Platforms facilitating this, including Cliprise, expose model details that inform parameter choices, such as negative prompts for refinement and CFG scales for adherence. The result is a workflow that mirrors a production pipeline, from prompt enhancement to final export, without the drag of browser-based loops.

Prerequisites: What You'll Need Before Starting

Configuring programmatic access for AI generation requires basics in coding and account oversight, but many creators skip details that would prevent early setbacks. Knowledge of a language like Python or Node.js is key, as these manage the web requests, data parsing, and waiting loops typical of generation services. Without practice with tools like requests in Python or axios in Node.js, payload checks turn into trial and error.

Account needs focus on suitable subscriptions; some platforms limit programmatic features to advanced levels like business or enterprise, reserving them for dedicated users. Check your account overview first, since basic plans often steer toward web tools. This pattern holds across services: model availability links to plan types, with video-focused options like Sora 2 or Kling requiring elevated access.

Next come utilities. Tools like Postman or Insomnia speed up endpoint checks, validating inputs without complete programs. An editor such as VS Code with REST extensions aids quick changes. Store credentials in environment variables to keep them safe, and avoid embedding them directly in code. For testing, install an HTTP library: pip install requests in Python (asyncio ships with the standard library and needs no install), or npm i axios node-fetch in JavaScript. Analytics setups like Firebase, noted in some apps from providers including Cliprise, suggest solid backends, but prioritize your local environment.

Setup time runs about 10-15 minutes for essentials: credential creation, initial test request, result handling. This lengthens if confirming eligibility, a common step where web use is mistaken for full access. Prepare with .env files for secrets, gitignore protections, and isolated environments. Users on platforms like Cliprise often begin at their web apps, reviewing model lists for Veo or Flux to plan integrations.

This order prevents issues like authentication failures from poor preparation. New users gain from testing collections that simulate full flows: submit input, check progress, fetch output. More experienced users add tracking; advanced users incorporate notifications. For those avoiding code, no-code options like Zapier serve as bridges, but full control needs scripting. Services differ: some, like Cliprise, use shared resource pools for easier multi-model handling, while others need separate credentials.

Build readiness with simulations: use sample responses for image or video results. Verify connection stability, as interruptions affect waits. Checklist: credentials valid? Documentation scanned? Basic structure in place? This stage, while straightforward, sidesteps many common configuration drop-offs noted in shared experiences.

To deepen preparation, consider scripting environments in detail. Python's asyncio shines for concurrent polls, handling multiple jobs without blocking; Node.js async/await offers similar fluidity for JavaScript stacks. Environment managers like pipenv or nvm ensure consistency across machines. For security, rotate credentials periodically and monitor usage patterns, since platforms track calls to prevent abuse. In multi-model setups like those on Cliprise, where categories span VideoGen (Veo 3.1 variants), ImageGen (Flux 2, Imagen 4), and Voice (ElevenLabs TTS), pre-mapping models to use cases saves time: video for motion content, images for static visuals, edits for refinements.

Testing hierarchies matter: start with lightweight image requests before video, as they process more quickly and reveal auth issues early. Community patterns show this reduces frustration, and many report smoother ramps when they prioritize simple successes. Integrate logging from day one: Python's logging module or Node's Winston captures payloads, responses, and timings for later analysis. Finally, document your stack: a README with env vars, deps, and flow diagrams turns one-off tests into reusable templates.
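As a minimal sketch of day-one logging, the wrapper below records the payload, the response, and the elapsed time around any request function, using only Python's standard logging module. The names `log_call`, `redact`, and the `genjobs` logger are illustrative assumptions, and `send` stands in for whatever function actually performs your request.

```python
import json
import logging
import time

logger = logging.getLogger("genjobs")  # hypothetical logger name


def redact(headers):
    """Return a copy of the headers with the credential masked."""
    return {k: ("***" if k.lower() == "authorization" else v)
            for k, v in headers.items()}


def log_call(send, payload, headers):
    """Call send(payload, headers) and log payload, response, and timing."""
    started = time.monotonic()
    logger.info("request payload=%s headers=%s",
                json.dumps(payload), redact(headers))
    response = send(payload, headers)
    elapsed = time.monotonic() - started
    logger.info("response body=%s elapsed_s=%.3f",
                json.dumps(response), elapsed)
    return response
```

Masking the Authorization header before it reaches the log keeps credentials out of files that may later be shared for debugging.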

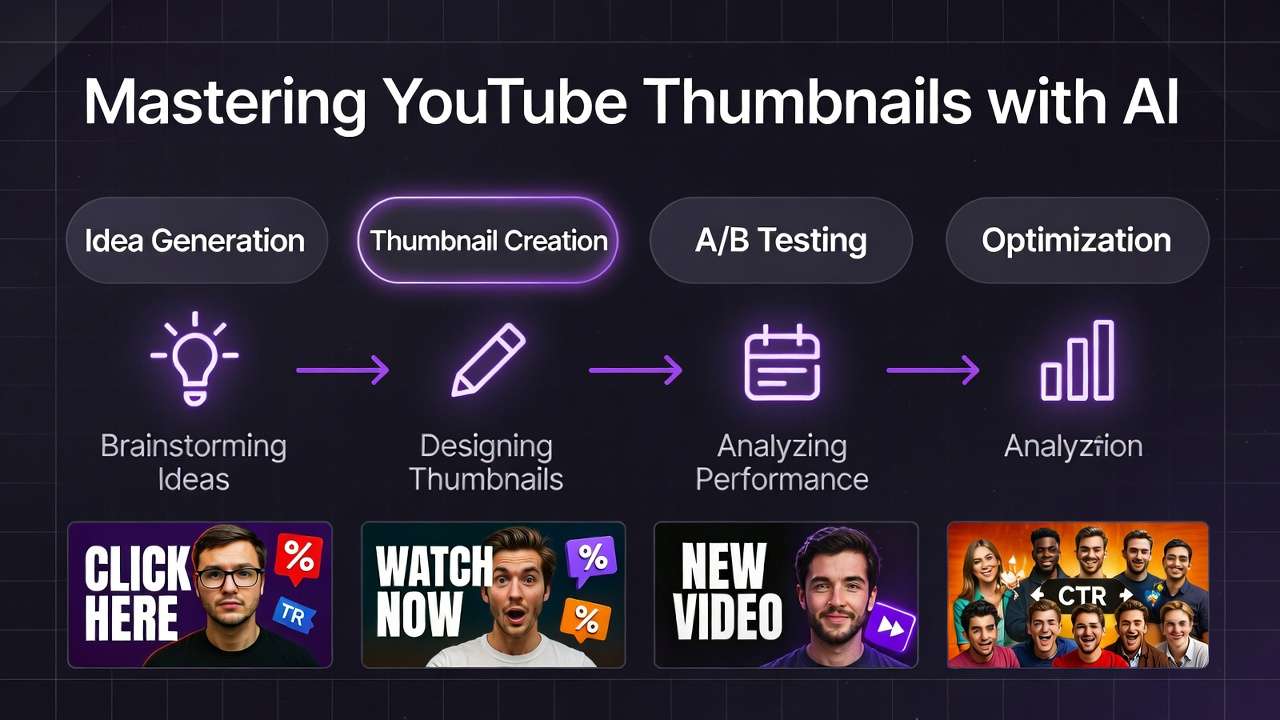

What Most Creators Get Wrong About API Integration for AI Generation

Many creators approach programmatic AI access expecting the ease of web interfaces, but overlooking differences in request management and status tracking leads to interrupted batches. Web requests include automatic retries; programmatic ones stop on overload signals unless delay strategies are built in. For thumbnail image sets, too many simultaneous calls can hit processing caps, as seen in experiences with Flux on aggregators like Cliprise, causing extended waits.

Asynchronous processing catches others off guard: submissions return identifiers, not immediate results. Web users click and wait visually; integrations require status checks, but constant polling without pauses wastes resources and risks restrictions. Batch scenarios for Sora videos highlight this: in unmanaged queues, new tasks join at the end, and plan differences can extend wait times considerably. Aggregators combine models, such as Veo 3.1 Fast with Kling, but ignoring these asynchronous mechanics leads to pipeline breaks.

Parameter choices mark another frequent oversight: prompts carried over from the web miss details like seeds, ratios, or adherence scales that programmatic requests must state explicitly. A duration mismatch on a video model such as Veo 3.1 squanders attempts; e-commerce variants are often discarded because of conflicting refinements. Services like Cliprise outline these per model (Flux Pro, for example, benefits from seeds for consistency), yet users apply one broad approach everywhere, producing varying results across Hailuo or Runway.

Handling errors reveals further gaps: without recovery, one server issue topples the whole process. Real-world cases like agency content during busy periods show resource shortfalls mid-run, often because credential updates were overlooked. Resource validity also differs between services; some require refreshes at intervals, which is easy to ignore in extended operations. Novices write straight sequences; others add error blocks; experienced developers add unique identifiers per request so retries do not create duplicates.

These issues linger because guides focus on isolated requests, not full chains. Variations by model appear too: videos from models like Wan 2.5 take longer than images. In Cliprise-like systems, shared resources hide individual costs, but guides emphasize parameter matching. The solution is to test disruptions beforehand. Freelancers feel this most without oversight; teams add safeguards. Key insight: programmatic tools expose prompt weaknesses more quickly than interfaces do, demanding precision.

These mistakes are rooted in interface habits: the web tolerates looseness, while code enforces structure. Ordered testing has been observed to lower issues noticeably. Factors like regional rules add complexity, but the essential rule is to design for resilience rather than reaction.

Delving deeper, prompt engineering mismatches amplify at scale. A web-refined prompt for Midjourney images may underperform in Kling video without negative elements tuned for motion artifacts. Industry shares reveal creators iterating 2-3 times more in programmatic setups initially, but converging faster with logging. Model-specific quirks, such as Ideogram's character focus versus Recraft's background tools, require per-call adjustments. Aggregators mitigate this via unified lists, as on Cliprise's /models pages, but integration still demands translating specs into payloads.

Auth flows trip many: bearer tokens vs. query params, scopes for read/write. Free plans often omit programmatic access, pushing upgrades. Peak-hour surges compound the problem, as video models queue deeper. Fallbacks, such as switching from Veo Fast to Kling Turbo, preserve momentum. Monitoring tools like Sentry catch silent failures, turning guesswork into data.
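The fallback idea can be sketched as a loop over an ordered list of models. Everything here is an assumption for illustration: `GenerationError`, the `submit` callable, and the model identifier strings are hypothetical and not taken from any particular service.

```python
class GenerationError(Exception):
    """Raised by the (hypothetical) submit callable when a model rejects a job."""


def submit_with_fallback(submit, payload, models):
    """Try each model in order; return (model, result) for the first success."""
    errors = []
    for model in models:
        try:
            # Copy the payload so the caller's dict is never mutated.
            result = submit({**payload, "model": model})
            return model, result
        except GenerationError as exc:
            errors.append(f"{model}: {exc}")
    raise GenerationError("all models failed: " + "; ".join(errors))
```

A call such as `submit_with_fallback(submit, {"prompt": "..."}, ["veo-3.1-fast", "kling-2.5-turbo"])` would try the first model and move to the second only on failure. Parameters may need translating between models, so a real fallback usually adjusts the payload per model as well.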

Core Concepts: Understanding AI Generation APIs

Programmatic AI services center on interfaces for image, video, and modification tasks, with inputs that shape results: descriptions, seeds for consistency, ratios such as 16:9, and lengths like 5s or 10s for video. Aggregators standardize these (Cliprise, for instance, directs Veo 3, Sora 2, and Kling to generation paths), which eases multi-model use compared with separate services.

Shared resource pools combine expenses; one balance spans low-cost Flux images to high-use Veo Quality videos, as structured in service data. Calls deduct after completion, with upfront estimates. Outputs follow a pattern: an identifier on start, status queries for updates (queued/processing/complete), then links. Differences: images arrive as data or URLs; videos come with details like frames.

Common structures in inputs: structured data with model choice like "veo-3.1-fast", description, seed value, refinements. Adherence scale (1-20) guides fidelity; higher values may introduce flaws. Asynchronous design prevails: heavy tasks run in background, with check intervals of 5-30s recommended.
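To make the input structure concrete, here is a minimal sketch of a payload builder that validates values before sending. The field names (`prompt`, `seed`, `aspect_ratio`, `cfg_scale`, `negative_prompt`, `duration_s`) and the allowed-ratio set are assumptions; only the 1-20 adherence range and the example model string come from the text above, so check your service's documentation for the real schema.

```python
ALLOWED_RATIOS = {"16:9", "9:16", "1:1"}  # assumed set, not from any real API


def build_payload(model, prompt, *, seed=None, aspect_ratio=None,
                  cfg_scale=None, negative_prompt=None, duration_s=None):
    """Assemble a generation payload, rejecting out-of-range values early."""
    if not model or not prompt.strip():
        raise ValueError("model and prompt are required")
    if aspect_ratio is not None and aspect_ratio not in ALLOWED_RATIOS:
        raise ValueError(f"unsupported aspect ratio: {aspect_ratio}")
    if cfg_scale is not None and not 1 <= cfg_scale <= 20:
        raise ValueError("cfg_scale must be between 1 and 20")
    payload = {"model": model, "prompt": prompt}
    optional = {
        "seed": seed,
        "aspect_ratio": aspect_ratio,
        "cfg_scale": cfg_scale,
        "negative_prompt": negative_prompt,
        "duration_s": duration_s,
    }
    # Only include optional fields the caller actually set.
    payload.update({k: v for k, v in optional.items() if v is not None})
    return payload
```

Validating locally catches format errors before they cost a request, which matters when failed calls still count against rate limits.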

Mental Model: Pipeline as Queue Factory

Think of services as production lines: send design (input), receive ticket (identifier), monitor progress (query), collect item (download). Crucial because interface waits hide reality; capacity ties to plan-based slots.

Scenarios: Logo images take a description plus a ratio and return PNG output. Reel videos extend from an image and need status checks over several minutes. Modifications such as background removal on an input return results quickly.

Platforms like Cliprise list 47+ models with details; programmatic access aligns, noting toggles. Outputs flag issues: invalid input, low resources.

Observed Variations

Inputs vary: Imagen prefers style options; ElevenLabs TTS uses voice selectors. Unified services smooth this, but verify guides. Recovery: increasing delays (1s, then double).

This base expands in two directions: batches through repeated calls, and oversight through records. Basic users cover the interfaces, intermediate users tune inputs, and advanced users coordinate multiple jobs.

To illustrate, consider image generation flow: select Flux 2 Pro for photorealism, input seed 12345 for variant control, 1:1 ratio for icons. Response phases: accept (job_id), queued (position info), processing (progress bar equivalent), done (URL with metadata like resolution). Video parallels but extends: Kling 2.5 Turbo for quick 5s clips, negative prompts excluding distortions. Edit paths for Qwen or Ideogram apply masks to uploads, yielding refined versions.

Resource dynamics: post-generation deductions prevent overspend, with balances queryable. Errors are standardized; a 402 for a shortfall, for example, prompts a plan review. Platforms aggregate for efficiency: one auth covers Google Imagen 4 Standard/Fast/Ultra through Black Forest Labs Flux variants. Insights from deployments show that parameter consistency cuts reworks; a mismatched CFG on Midjourney yields artistic drifts unsuitable for brands.

Step-by-Step: Setting Up Your First Programmatic Integration

Step 1: Obtain and Secure Your Credentials

Visit the dashboard on an eligible platform (higher plans unlock these features), locate the access section, and create a key. Store it in .env as API_KEY=sk-xxx or the equivalent. Avoid exposing it through git; use ignore files. If the key is rejected as invalid, confirm your plan level, since basic plans restrict access. This is a quick foundational step.
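A minimal sketch of reading the key at runtime follows. It assumes the variable is named `API_KEY` as in the example above and that the service uses bearer-style authentication; both are assumptions to verify against your provider's documentation.

```python
import os


def load_api_key(env=None, name="API_KEY"):
    """Read the credential from the environment and fail loudly if missing."""
    env = os.environ if env is None else env
    key = env.get(name, "").strip()
    if not key:
        raise RuntimeError(
            f"{name} is not set; export it or load it from your .env file")
    return key


def auth_headers(api_key):
    """Bearer-style headers (an assumption; some services use other schemes)."""
    return {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
```

Failing at startup with a clear message is easier to debug than a 401 that surfaces halfway through a batch.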

Step 2: Explore Documentation and Interfaces

Scan guides or model lists: Veo/Sora for motion, Flux/Imagen for visuals. Details: lengths 5-15s, seeds for repeats.

| Category | Key Models | Providers | Supported Features |
|---|---|---|---|
| VideoGen | Veo 3, Veo 3.1 Quality, Veo 3.1 Fast, Sora 2, Kling 2.5 Turbo | Google DeepMind, OpenAI, Kuaishou | Prompt text, aspect ratio, duration options (5s/10s/15s), seed, negative prompts, CFG scale |
| ImageGen | Flux 2 Pro, Midjourney, Imagen 4 Standard, Seedream 4.0 | Black Forest Labs, Midjourney, Google, Various | Photorealism, style transfer, high-res outputs, reproducibility via seed |
| VideoEdit | Runway Aleph, Luma Modify, Topaz Video Upscaler | Runway, Luma, Topaz | Extension, modification, upscaling to 8K scenarios |
| ImageEdit | Qwen Edit, Ideogram V3, Recraft Remove BG | Alibaba, Ideogram, Recraft | Masking, background removal, character focus |
| Voice | ElevenLabs TTS | ElevenLabs | Text-to-speech, sound effects, stability controls |

Note patterns: motion tasks involve longer waits than static. Unified paths use model selectors.

Step 3: Make Your First Test Request

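Use a command-line tool such as curl, with authentication headers and a structured input containing the model and description, to call a sample endpoint. Testing apps like Postman replicate the same call. If you receive an overload signal, implement delays that double on each retry. Parse the structured output; the milestone is a response that yields a result link.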

Step 3 Expansion: Building the Request Logic

Craft inputs carefully: use JSON with the required fields, namely a model from the list (e.g., Flux for images), a core prompt describing the scene, and an optional seed for control. Headers carry auth. Responses return a status or an identifier. Tools like curl work for prototyping; libraries handle the same calls in code. A common practice is to validate the schema before sending to catch format errors. Platforms provide examples mirroring web params, such as aspect for composition and negatives for exclusions. On success, parse the URL and download the asset. On failure, log the codes (400 input, 401 auth) for debugging.
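The request logic might look like the sketch below, which uses only the standard library. The URL is a placeholder rather than a real endpoint, the bearer header is an assumption, and the mapping of status codes to actions is an illustrative policy: 400, 401, and 402 follow the meanings given in this guide, while 429 is the standard HTTP code for too many requests.

```python
import json
import urllib.request

API_URL = "https://api.example.com/v1/generate"  # placeholder, not a real endpoint


def build_request(api_key, payload, url=API_URL):
    """Build (but do not send) a JSON POST request with auth headers."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
    )


def classify_status(code):
    """Map an HTTP status code to a suggested next action."""
    if 200 <= code < 300:
        return "ok"
    if code == 400:
        return "fix_input"      # malformed or invalid payload
    if code == 401:
        return "fix_auth"       # missing or rejected credential
    if code == 402:
        return "check_balance"  # resource shortfall
    if code == 429 or code >= 500:
        return "retry"          # overload or transient server issue
    return "fail"
```

Passing the result of `build_request` to `urllib.request.urlopen` would send it; keeping construction separate from sending makes the payload and headers easy to inspect before anything leaves your machine.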

Step 4: Handle Asynchronous Generation Jobs

Start task → query progress via identifier at intervals. Phases: in queue, active, complete. Use timed pauses to avoid overload. Plan differences affect capacity.

Detail: Loops check the status endpoint with the job reference and extract the state. Python asyncio or JS promises manage multiple jobs. Timeouts prevent hangs, for example by aborting after a set number of hours. Platforms may signal queue position or estimates. Downloads follow: direct links for images, chunked transfers for videos.
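A polling loop with a timeout can be sketched as follows. The state names (`complete`, `failed`) and the shape of the status dictionary are assumptions, and `fetch_status` is a hypothetical callable that wraps your status request. The `sleep` and `clock` parameters exist so the loop can be tested without real waiting.

```python
import time


class JobTimeout(Exception):
    """The job did not finish before the deadline."""


class JobFailed(Exception):
    """The service reported that the job failed."""


def poll_until_done(fetch_status, job_id, interval_s=10.0, timeout_s=3600.0,
                    sleep=time.sleep, clock=time.monotonic):
    """Query job status at fixed intervals until it completes or times out."""
    deadline = clock() + timeout_s
    while True:
        status = fetch_status(job_id)
        state = status.get("state")
        if state == "complete":
            return status
        if state == "failed":
            raise JobFailed(status.get("error", "unknown error"))
        if clock() >= deadline:
            raise JobTimeout(f"job {job_id} still {state!r} after {timeout_s}s")
        sleep(interval_s)  # pause between checks to avoid hammering the API
```

The default interval of 10 seconds sits inside the 5-30 second range mentioned earlier; longer video jobs can usually tolerate the upper end.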

Step 5: Integrate into Your Workflow (e.g., Python/Node.js Script)

In Python, import request handlers and async tools, load credential, set headers, form input with model like Veo 3.1 Fast, description, length. Send to generation path, capture identifier. Loop: query status endpoint, pause 10s if pending, output link on finish. Extend to groups: array of inputs, concurrent limits. Node.js mirrors with promises for non-blocking.

Pseudocode elaboration: Enclose the logic in functions such as submit(job), poll(job, max_tries), and download(url). Error branches: retry on transient errors, log permanent ones. Batch wrapper: for each prompt, launch a task and gather the results. Logs timestamp events. Adapt for edits: upload the image first, then send the modification prompt.
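The function breakdown above can be sketched with asyncio. Here `submit`, `poll`, and `download` are hypothetical coroutines you would implement against your service; the sketch shows only how they chain per job and how a batch is gathered without one failure cancelling the rest.

```python
import asyncio


async def run_job(prompt, submit, poll, download):
    """Chain the three stages for a single prompt."""
    job_id = await submit(prompt)   # returns an identifier, not a result
    url = await poll(job_id)        # waits until the job reports a link
    return await download(url)      # fetches or saves the asset


async def run_batch(prompts, submit, poll, download):
    """Run all prompts concurrently; failures are returned, not raised."""
    tasks = [run_job(p, submit, poll, download) for p in prompts]
    results = await asyncio.gather(*tasks, return_exceptions=True)
    # Pair each prompt with its result or the exception it produced.
    return list(zip(prompts, results))
```

With `return_exceptions=True`, a rejected prompt shows up as an exception object next to its prompt, so the caller can log permanent failures and retry transient ones separately.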

Real-World Comparisons: API Workflows Across Creator Types

Freelancers favor quick image sets, such as Flux for variants, while agencies manage video chains for projects, running Sora after image starts. Solo creators prototype manually, then automate what works. Image models suit static content; video models suit motion.

| Criteria | Freelancer (Image Focus) | Agency (Video Batch) | Solo Creator (Hybrid) |
|---|---|---|---|
| Use Case Fit | Social thumbnails, product visuals; daily consistent outputs | Client campaigns; multi-model revisions across dozens weekly | Personal content; test concepts, expand promising ones |
| Workflow Speed | Quick per asset after initial setup; efficient grouping via repeats | Moderate per video including checks; parallel handling streamlines larger sets | Balanced per test; transitions between types add steps |
| Scalability Notes | Reaches plan capacities on higher volumes; manual fallback for surges | Manages substantial weekly volumes with safeguards; waits extend in peaks | Comfortable for moderate daily use; excess shifts manual |
| Learning Curve | Few hours to initial groups; refinement over days | Weeks for complete chains; team integration additional days | Hours for basics; combined logic additional time |
| Common Issues | Input mismatches lead to reworks; variation without seeds | Processing delays affect portions; resource shortfalls mid-run | Type switches create overhead; style inconsistencies across models |
| Output Control | Strong with seeds and ratios; consistent variants | Balanced; motion variability; scales help refine | Ranges; static precise, motion often needs repeats |

Table illustrates: freelancers prioritize speed; agencies volume. Hybrids balance risks.

Case 1: Social planning. A freelancer generates Instagram visuals weekly with Flux plus refinement, recovering major time compared with manual work. Cliprise example: model changes are fluid.

Case 2: Product variants. An agency chains Sora motion from Imagen starts and gets quicker feedback.

Case 3: Presentations. A solo creator tests Kling motion, then derives stills.

Discussions show teams ahead in use, solos refine inputs first. Freelancers value simplicity; agencies robustness. Patterns: image prototyping precedes video scaling, cutting ineffective spends.

Order and Sequencing: Why Setup Sequence Impacts Success

Tackling complex motion before static tests skips efficient prototyping, since prompts often need motion tweaks after the basics are settled. Users who leap straight to Sora tend to overspend; following the order auth→image→group→video lowers issues, according to observed patterns.

Attention load rises with motion work: shifting from input to waiting to adjusting fragments effort and extends time notably. Static work builds familiarity first, so that motion work can hold focus.

Static to motion for visual continuity (thumbnails to clips); motion to static for key frames. Cliprise patterns: Flux to Veo.

Insights: Ordered approaches show fewer reworks; unordered more adjustments.

Expand: Phase 1 auth/image confirms connectivity. Phase 2 groups test capacity. Phase 3 video validates length/seed. Logs track: 80% issues in early phases caught cheap. Freelancers sequence daily; agencies pipeline stages.

When API Integration Doesn't Help (and What to Do Instead)

Occasional users (few outputs weekly) see setup outweigh benefits; web interfaces suffice. Unreliable connections disrupt checks, building delays.

Non-coders encounter barriers; no-code bridges like Make.com better. Surges fill capacities quickly.

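Limits: Inputs differ across models (Veo and Kling support different lengths), and no option delivers instant results for live use. Users with unreliable connections and non-developers are often better served by web interfaces.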

Alternatives: Web tools like Cliprise for occasional use.

Deepen: Hobby volumes stay manual, with quick generations via the browser. Internet drops kill polls, and proxies can be unstable. Model quirks persist regardless. Peaks: popular times extend all waits. No-code tools map inputs to outputs, and Zapier chains steps together. Web dashboards offer previews and visual queues.

Advanced Techniques: Scaling and Optimization

Batch Processing and Parallelism

Operate within capacities; concurrent handlers in languages. Switch models on issues.

Detail: Python gather for 5 slots, JS Promise.all. Inputs array, track identifiers. Outputs zip to folders.
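A bounded version of the gather pattern can be sketched with a semaphore. The limit of 5 mirrors the slot count mentioned above and is not a documented platform limit; `worker` is a hypothetical coroutine that runs one job end to end.

```python
import asyncio


async def run_limited(inputs, worker, max_concurrent=5):
    """Run worker(item) for every input with at most max_concurrent in flight."""
    semaphore = asyncio.Semaphore(max_concurrent)
    results = {}

    async def guarded(index, item):
        async with semaphore:  # blocks while all slots are taken
            results[index] = await worker(item)

    await asyncio.gather(*(guarded(i, item) for i, item in enumerate(inputs)))
    # Return results in input order, regardless of completion order.
    return [results[i] for i in range(len(inputs))]
```

Keying results by input index keeps each output tied to the prompt that produced it, which makes it straightforward to write files into per-prompt folders afterwards.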

Error Handling and Retries

Unique keys prevent duplicates. Delay increases with randomness.
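One way to sketch unique keys is to derive them from the payload itself, so the same request always maps to the same key. This is client-side deduplication only; whether a given service accepts an idempotency key in its requests is something to confirm in its documentation. `SubmissionLedger` and `idempotency_key` are hypothetical names.

```python
import hashlib
import json


def idempotency_key(payload):
    """Derive a stable key from the payload, independent of key order."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class SubmissionLedger:
    """Remember submitted payloads so a retry does not create a duplicate job."""

    def __init__(self):
        self._jobs = {}

    def submit_once(self, payload, submit):
        key = idempotency_key(payload)
        if key not in self._jobs:
            self._jobs[key] = submit(payload)  # first time: really submit
        return self._jobs[key]                 # repeat: reuse the stored result
```

Note that a payload without a fixed seed may be intentionally submitted twice to get two variants; in that case include a variant counter in the payload so the keys differ.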

Examples: 5xx retry 3x, 400 log/skip. Track attempts.
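The retry policy in these examples can be sketched as follows: transient errors are retried up to three times with doubling delays plus random jitter, while client errors are raised immediately for logging. `HttpError` is a hypothetical exception your request code would raise, and treating 429 as retryable alongside 5xx is an assumption about how overload is signaled.

```python
import random
import time


class HttpError(Exception):
    """Hypothetical error carrying the HTTP status of a failed call."""

    def __init__(self, status):
        super().__init__(f"HTTP {status}")
        self.status = status


def call_with_retries(call, max_retries=3, base_delay_s=1.0,
                      sleep=time.sleep, rng=random.random):
    """Retry call() on 429/5xx with exponential backoff and jitter."""
    attempt = 0
    while True:
        try:
            return call()
        except HttpError as exc:
            retryable = exc.status == 429 or exc.status >= 500
            if not retryable or attempt >= max_retries:
                raise  # permanent error, or retries exhausted
            delay = base_delay_s * (2 ** attempt)  # 1s, 2s, 4s, ...
            sleep(delay + rng() * delay)           # add up to 100% jitter
            attempt += 1
```

The jitter spreads retries from parallel workers over time, so a batch that hit a limit together does not hit it again in lockstep.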

Monitoring and Logging

Notifications on complete; record errors.

Tools: Prometheus metrics, ELK stacks. Dashboards visualize queues.

Webhook sim: long-poll alternatives. Alerts on thresholds.

Industry Patterns and Future Directions in AI API Automation

Teams often lead adoption, according to discussions, and multi-model unification is growing. Trends: combined access like Cliprise.

Upcoming: progressive outputs, finer controls.

Modular scripts prepare: functions per task.

Observations: Python dominates solos, Node agencies. n8n workflows bridge. Aggregators rise for 47+ models.

Common Pitfalls and Troubleshooting Guide

Issue 1: Invalid inputs. Review the docs.

Issue 2: Credential expiry. Refresh the credential.

Issue 3: Result handling. Parse the response structures.

Checklist: auth verify, interval set, records keep.

Expand pitfalls: Geo consent blocks (EU patterns). Payload size limits. Model toggles, so check availability. Debug: verbose logs, mock servers.

Conclusion: Streamlining Your AI Workflow

Essentials: ordered tests, async management, safeguards. Advance: static prototype, group scale. Aggregators like Cliprise show multi-model potential. Explore neutrally.

To wrap up: Integrate learnings iteratively by logging first runs and refining params from outputs. Communities share model tips: Veo for realism, Kling for speed. Scale sustainably: monitor spend, archive successes. Future-proof: abstract model selectors so models can be swapped. This positions workflows for evolving models such as ByteDance Omni Human and Topaz 8K, keeping creators agile. Platforms evolve; adaptable code wins.

Related Articles

- AI Content Creation: Complete Guide 2026

- AI Prompt Engineering: Complete Guide 2026

- Multi-Model AI Platforms: Why Creators Are Ditching Single-Tool Subscriptions

- Zapier + Cliprise: Automate Your Content Creation Workflow

- Cost Optimization: Maximize Credits on Multi-Model Platforms

- AI Video Generation: Complete Guide 2026