Part of the AI for E-commerce: Complete Guide 2026 pillar series.

Early AI content creation centered on prompting a single, isolated model: describe the desired output, generate, then accept the result or regenerate and hope for improvement. This experimental approach produced impressive demonstrations but fell short of professional production requirements: consistency, format flexibility, multi-asset coordination, and systematic refinement.

Modern AI workflows look very different. They combine multi-model orchestration of specialized engines (VideoGen, ImageGen, editing tools, voice synthesis), systematic staging (validation → generation → enhancement → integration), parameter discipline (seeds, CFG scales, aspect ratios), and reference passing that keeps output consistent across production stages. This architectural shift lets professional creators scale from experiments to repeatable production systems.

This analysis traces AI content creation's evolution from single-model prompting through multi-model production workflows, examines systematic staging architecture replacing trial-and-error approaches, and establishes frameworks enabling sustainable professional-grade output velocity.

Evolution Stage 1: Single-Model Prompting Era

Characteristics (2022-early 2024):

- Individual specialized tools operating in isolation (Midjourney for images, separate video tools)

- Text-prompt-only generation without reference passing or seed control

- Manual asset management across disconnected tool ecosystems

- Trial-and-error iteration without systematic refinement frameworks

- Output: Impressive demonstrations, inconsistent production capability

Workflow Pattern:

- Craft prompt attempting to describe complete desired outcome

- Generate via single model hoping for acceptable result

- Regenerate with adjusted prompt if output fails expectations

- Repeat until acceptable or abandon due to frustration/deadlines

- Manually export/import assets between tools if combinations needed

Limitations Exposed:

- Stylistic drift across assets from different tools/sessions

- No reproducibility mechanism for "slight variation" requests

- Format adaptation required full regeneration losing creative progress

- Multi-asset projects exhibited visual inconsistency

- Professional production requirements exceeded single-tool capabilities

Creator Impact: Experimental hobby use flourished; professional adoption stalled due to workflow unreliability and output unpredictability.

Evolution Stage 2: Parameter Control Introduction

Advancement (mid-2024):

- Seed values enabling reproducible generation across attempts

- CFG scale adjustments balancing prompt adherence with creative interpretation

- Negative prompts preventing common artifact patterns proactively

- Aspect ratio and duration specifications matching platform requirements

- Output: Improved consistency, beginning of systematic workflow potential

New Capabilities Enabled:

- Reproducibility: A fixed seed (for example, 12345) with the same model, prompt, and settings regenerates the same output, enabling controlled variation testing

- Refinement: Adjust CFG or negatives maintaining seed for surgical improvements

- Series Production: Locked seeds across multi-asset projects maintaining visual brand identity

- Format Variants: Reusing a seed across aspect ratios keeps platform-specific derivatives stylistically close, although changing dimensions can shift composition

Workflow Evolution:

- Generate baseline with documented seed establishing creative direction

- Test parameter variations (CFG, negatives) maintaining seed for refinement

- Increment seed systematically exploring controlled variation range

- Document successful seed-parameter combinations for future reproduction
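The seed-locked refinement loop described in this list can be sketched as a plan of generation requests. This is a minimal illustration rather than any platform's API: `GenerationRequest`, `refinement_plan`, and `seed_sweep` are hypothetical names, and the prompt, CFG values, and negative prompts are arbitrary examples.

```python
from dataclasses import dataclass, replace
from itertools import product


@dataclass(frozen=True)
class GenerationRequest:
    """Hypothetical record of one generation attempt (not a real platform API)."""
    prompt: str
    seed: int
    cfg_scale: float
    negative_prompt: str = ""


def refinement_plan(baseline, cfg_values, negatives):
    """Vary CFG and negative prompts while holding the baseline seed fixed."""
    return [
        replace(baseline, cfg_scale=cfg, negative_prompt=neg)
        for cfg, neg in product(cfg_values, negatives)
    ]


def seed_sweep(baseline, count):
    """Increment the seed step by step to explore a controlled variation range."""
    return [replace(baseline, seed=baseline.seed + i) for i in range(1, count + 1)]


baseline = GenerationRequest(prompt="product hero shot", seed=12345, cfg_scale=7.0)
plan = refinement_plan(
    baseline,
    cfg_values=[6.0, 7.0, 8.0],
    negatives=["", "blurry, watermark"],
)
variants = seed_sweep(baseline, count=3)

print(len(plan))                   # 6 requests, all with seed 12345
print([v.seed for v in variants])  # [12346, 12347, 12348]
```

Keeping each request as an immutable record means the combination that worked can be saved as-is and replayed later.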

Remaining Limitations:

- Still isolated single-model operations requiring manual coordination

- Cross-tool inconsistency when combining image and video outputs

- Limited strategic guidance on parameter optimization per use case

Creator Impact: Power users developing sophisticated single-model workflows; broader adoption still constrained by tool fragmentation.

Evolution Stage 3: Multi-Model Platform Aggregation

Transformation (late 2024-2025):

- Unified platforms aggregating 20-40 specialized models from multiple providers

- Seamless model switching within single interface eliminating export/import friction

- Reference passing enabling image-to-video workflows maintaining aesthetic continuity

- Parameter persistence (seeds, aspect ratios, CFG) across model transitions

- Output: Professional production workflows enabling systematic multi-asset creation

Architectural Advantages:

Specialized Model Access:

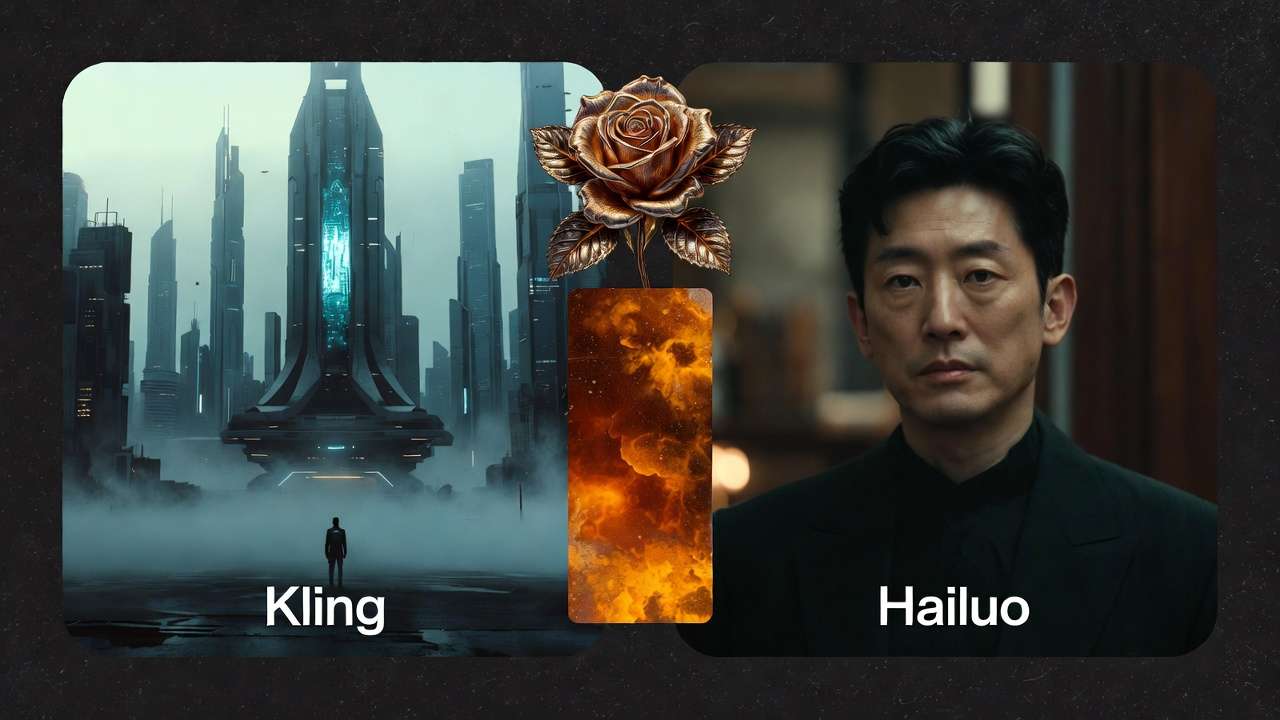

- VideoGen: Veo 3.1 (Fast/Quality), Sora 2, Kling 2.5 Turbo, Hailuo 02, Runway Gen4, Wan 2.5

- ImageGen: Flux 2, Midjourney, Google Imagen 4, Seedream variants, Ideogram

- VideoEdit: Runway Aleph, Luma Modify, Topaz Video Upscaler

- ImageEdit: Qwen Edit, Recraft Remove BG, Ideogram Character

- Voice: ElevenLabs TTS with emotion control

Workflow Integration:

- Generate image via Flux → Animate via Veo using image reference (maintains aesthetic)

- Fast prototyping via Kling Turbo → Quality regeneration via Veo Quality with locked seed

- Base generation → Targeted enhancement via Topaz/Luma (versus expensive regeneration)

- Video sequence → Voice synthesis integration matching established pacing

Parameter Continuity:

- Seed values carry over across model switches, keeping each stage of a multi-stage workflow reproducible (the same seed does not produce the same image in a different model)

- Aspect ratios maintained through generation → enhancement pipelines

- Reference images pass seamlessly from ImageGen to VideoGen stages

- Centralized asset libraries with automatic metadata (models used, parameters, timestamps)
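One way to picture parameter continuity and automatic metadata is an asset record that remembers which upstream asset it was derived from. The sketch below is an assumption about how such a library entry could look; the `Asset` class, its fields, and `next_stage` are hypothetical and do not describe a specific platform.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Asset:
    """Hypothetical library entry; field names are illustrative only."""
    name: str
    model: str
    seed: int
    aspect_ratio: str
    reference: Optional["Asset"] = None  # upstream asset passed as reference
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    def lineage(self):
        """Return model names from the first stage up to this asset."""
        chain, node = [], self
        while node is not None:
            chain.append(node.model)
            node = node.reference
        return list(reversed(chain))


def next_stage(source, name, model):
    """Create a downstream asset that inherits seed and aspect ratio."""
    return Asset(
        name=name,
        model=model,
        seed=source.seed,
        aspect_ratio=source.aspect_ratio,
        reference=source,
    )


still = Asset(name="hero-still", model="Flux 2", seed=12345, aspect_ratio="9:16")
clip = next_stage(still, "hero-clip", "Veo 3.1 Quality")
final = next_stage(clip, "hero-clip-4k", "Topaz Video Upscaler")

print(final.lineage())  # ['Flux 2', 'Veo 3.1 Quality', 'Topaz Video Upscaler']
```

For the enhancement stage the inherited seed is only bookkeeping: it records which generation the upscaled file came from.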

Creator Impact: Professional creators achieving 3-5x output velocity through systematic workflows; agency adoption accelerating through coordinated team production capabilities.

Modern Production Workflow Architecture

Stage 1: Strategic Model Selection

Match specialized engines to specific requirements rather than forcing universal tools:

- Static visuals → ImageGen (Flux, Imagen, Midjourney based on style requirements)

- Motion sequences → VideoGen (platform-appropriate selection: Kling for social, Sora for narrative, Veo for polish)

- Refinement needs → Edit tools (Topaz for resolution, Luma for scenes, Recraft for backgrounds)

- Audio requirements → Voice synthesis (ElevenLabs with tone matching)
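The pairings in this list amount to a lookup from requirement to candidate engines. A minimal sketch, assuming a simple `(task, need)` key: the `ROUTES` table and `candidate_models` function are hypothetical, and the model names are copied from the list rather than from any platform's identifiers.

```python
# Illustrative routing table built from the pairings listed in the article.
ROUTES = {
    ("image", "static"): ("Flux", "Imagen", "Midjourney"),
    ("video", "social"): ("Kling",),
    ("video", "narrative"): ("Sora",),
    ("video", "polish"): ("Veo",),
    ("edit", "resolution"): ("Topaz",),
    ("edit", "scene"): ("Luma",),
    ("edit", "background"): ("Recraft",),
    ("audio", "voice"): ("ElevenLabs",),
}


def candidate_models(task, need):
    """Return the candidate engines for a task, or raise if none is mapped."""
    key = (task, need)
    if key not in ROUTES:
        raise ValueError(f"no route for task={task!r}, need={need!r}")
    return ROUTES[key]


print(candidate_models("video", "narrative"))  # ('Sora',)
print(candidate_models("image", "static"))     # ('Flux', 'Imagen', 'Midjourney')
```

Static images return several candidates because the choice among them depends on style requirements, which this table does not encode.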

Stage 2: Systematic Validation

Image-first workflows validate creative direction before expensive processing:

- Generate 10-15 image concepts via Flux/Imagen (15-20 minutes, minimal credit cost)

- Stakeholder/client review and selection (immediate visual feedback)

- Seed documentation of approved directions

- Animate validated concepts via appropriate VideoGen models (5-8 minutes per finalist)

Benefit: Compositional failures caught at image stage (seconds) versus video stage (minutes); 50-70% reduction in wasted processing.
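A rough worked comparison shows where the saving comes from. The figures below are assumptions chosen for illustration: 12 concepts, the midpoint of the 15-20 minute image batch, the midpoint of the 5-8 minute video time, and 3 finalists. The calculation counts generation time only, not credits or review time.

```python
def image_first_minutes(image_batch_min, finalists, video_min_each):
    """Total minutes when only validated finalists are animated."""
    return image_batch_min + finalists * video_min_each


def video_first_minutes(concepts, video_min_each):
    """Total minutes when every concept goes straight to video."""
    return concepts * video_min_each


# Assumed inputs (midpoints of the ranges quoted in the article).
CONCEPTS = 12
IMAGE_BATCH_MIN = 18.0   # one batch of image concepts
VIDEO_MIN_EACH = 6.5     # per animated clip
FINALISTS = 3            # hypothetical number of approved directions

staged = image_first_minutes(IMAGE_BATCH_MIN, FINALISTS, VIDEO_MIN_EACH)
direct = video_first_minutes(CONCEPTS, VIDEO_MIN_EACH)
saving = 1 - staged / direct

print(f"{staged:.1f} vs {direct:.1f} minutes, {saving:.0%} less")
# 37.5 vs 78.0 minutes, 52% less
```

Under these assumptions the result sits at the low end of the quoted 50-70% range. Fewer finalists or slower video models push it higher.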

Stage 3: Fast-to-Quality Pipeline

Strategic model variant allocation optimizes exploration and refinement:

- Exploration: 12-20 prototypes via fast models (Veo Fast, Kling Turbo) testing creative range

- Validation: Comparative review identifying top 2-3 strongest directions

- Quality: Regenerate validated winners via quality models (Veo Quality, Sora Pro) with locked seeds

- Enhancement: Optional post-production upgrade (Topaz upscaling) as an alternative to quality regeneration

Efficiency: Reserving quality models for validated winners yields 3-5x more exploration within a fixed budget than running every concept through quality models.
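The budget effect can be illustrated with simple credit arithmetic. The credit costs and budget below are invented for the example and do not reflect any platform's pricing. `staged_allocation` is a hypothetical helper.

```python
def staged_allocation(budget, fast_cost, quality_cost, winners):
    """Split a credit budget between fast prototypes and quality winners.

    Returns (fast prototypes affordable after reserving credits for the
    winners, concepts affordable if every one used the quality model).
    """
    reserved = winners * quality_cost
    if reserved > budget:
        raise ValueError("budget cannot cover the quality regenerations")
    prototypes = (budget - reserved) // fast_cost
    quality_only = budget // quality_cost
    return prototypes, quality_only


# Hypothetical numbers: 200 credits, 5 per fast run, 40 per quality run.
prototypes, quality_only = staged_allocation(
    budget=200, fast_cost=5, quality_cost=40, winners=3
)

print(prototypes, quality_only)             # 16 5
print(round(prototypes / quality_only, 1))  # 3.2
```

With these made-up costs, the same budget explores 16 directions instead of 5 while still paying for three quality regenerations.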

Stage 4: Multi-Format Derivatives

Seed-based derivative production maintains campaign cohesion across platforms:

- Validated concept (seed 12345, 9:16 Instagram Reel)

- YouTube Shorts variant (seed 12345, 9:16, extended duration 45s)

- Feed post variant (seed 12345, 1:1 aspect, shortened 7s)

- LinkedIn variant (seed 12345, 16:9, professional 25s)

Consistency: Core aesthetic (lighting, colors, composition) maintained automatically through seed control; format specifications adapted systematically.
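The derivative set above can be written down as data, so every platform variant is produced from the same seed and prompt. This is a sketch only: `FormatSpec` and `derive_jobs` are hypothetical names, the prompt is a placeholder, and how closely outputs match across aspect ratios depends on the model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FormatSpec:
    """Platform delivery spec; durations are taken from the list above."""
    platform: str
    aspect_ratio: str
    duration_s: Optional[int] = None  # None where the article gives no duration


SPECS = [
    FormatSpec("Instagram Reel", "9:16"),
    FormatSpec("YouTube Shorts", "9:16", 45),
    FormatSpec("Feed post", "1:1", 7),
    FormatSpec("LinkedIn", "16:9", 25),
]


def derive_jobs(prompt, seed, specs):
    """Build one job per platform, reusing the validated seed and prompt."""
    return [
        {
            "prompt": prompt,
            "seed": seed,
            "platform": spec.platform,
            "aspect_ratio": spec.aspect_ratio,
            "duration_s": spec.duration_s,
        }
        for spec in specs
    ]


jobs = derive_jobs("validated campaign concept", 12345, SPECS)
for job in jobs:
    print(job["platform"], job["aspect_ratio"], job["duration_s"], job["seed"])
```

Adding a new platform then means appending one `FormatSpec` rather than rebuilding the concept.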

Stage 5: Targeted Enhancement

Strategic post-production rather than expensive regeneration:

- Resolution elevation: Topaz upscaling transforms fast-generated bases to 4K delivery standards

- Scene refinement: Luma Modify applies targeted adjustments without full regeneration

- Background optimization: Recraft cleanup improves composition without starting over

- Audio integration: ElevenLabs narration layered matching visual pacing

Economics: Enhancement workflows maintain fast-model efficiency advantages while achieving quality-model output standards through targeted refinement.

Professional Workflow Implementation

Prerequisites:

- Multi-model platform access aggregating specialized engines

- Parameter discipline (seed documentation, CFG optimization, negative prompt libraries)

- Systematic staging habits (validation → generation → enhancement sequencing)

- Performance tracking (output volume, regeneration rates, time-per-asset metrics)

Daily Production Routine:

Morning (60-90 minutes): Batch exploration phase

- Queue 12-20 concept generations across 2-3 active projects

- Fast models exclusively (Veo Fast, Kling Turbo, Flux rapid settings)

- Diverse creative testing without quality-model credit commitment

Mid-Morning (30-45 minutes): Validation and quality allocation

- Review exploration batch identifying strongest 4-6 directions

- Queue quality regenerations with locked seeds for validated concepts

- Document successful seed-prompt-model combinations

Afternoon (90-120 minutes): Enhancement and delivery

- Topaz upscaling of fast-generated assets meeting delivery standards

- Targeted Luma/Runway refinements addressing specific issues

- Voice integration and final packaging per platform specifications

- Client presentations and feedback incorporation

Output: 8-12 delivery-ready assets daily versus 2-3 via trial-and-error single-model approaches.

Sustainability Factors:

- Batch processing reduces the cognitive fatigue of constant context switching

- Fast prototyping maintains creative momentum versus extended quality-model waits

- Parameter libraries eliminate repetitive problem-solving overhead

- Systematic staging provides clear progress markers versus open-ended experimentation

Measurement and Optimization

Key Performance Metrics:

- Output Volume: Delivery-ready assets completed daily (target: 8-12 in mature workflows)

- Regeneration Rate: Percentage of generations wasted (target: less than 20% with systematic approaches)

- Time Per Asset: Average production timeline from concept to delivery (target: 30-45 minutes)

- Client Revision Cycles: Feedback rounds before approval (target: 1-2 with validation staging)
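These metrics are straightforward to compute from a daily log. The sketch below assumes a simple log shape and treats "wasted" generations as those discarded without contributing to a delivered asset. `DayLog` and the helper functions are hypothetical, and the sample day is invented.

```python
from dataclasses import dataclass


@dataclass
class DayLog:
    """Hypothetical record of one production day."""
    generations: int         # every generation queued that day
    discarded: int           # generations that were thrown away
    delivered_assets: int    # delivery-ready assets completed
    production_minutes: float


def regeneration_rate(log):
    """Share of generations that were discarded (0.0 if nothing was run)."""
    return log.discarded / log.generations if log.generations else 0.0


def minutes_per_asset(log):
    """Average production time per delivered asset."""
    if log.delivered_assets == 0:
        raise ValueError("no delivered assets to average over")
    return log.production_minutes / log.delivered_assets


def meets_targets(log):
    """Compare one day against the targets quoted in the list above."""
    return {
        "output_volume": log.delivered_assets >= 8,
        "regeneration_rate": regeneration_rate(log) < 0.20,
        "time_per_asset": minutes_per_asset(log) <= 45,
    }


day = DayLog(generations=30, discarded=5, delivered_assets=8,
             production_minutes=240)

print(f"{regeneration_rate(day):.1%}")  # 16.7%
print(minutes_per_asset(day))           # 30.0
print(meets_targets(day))
```

Client revision cycles are omitted because they are counted per project, not per day.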

Optimization Strategies:

- A/B test model selections documenting performance patterns per content type

- Refine parameter libraries based on success rates over time

- Adjust batch sizes optimizing parallel processing versus review overhead

- Update workflows incorporating new model releases and capability improvements

Growth Trajectory Expectations:

- Month 1: 2-4 assets daily via scattered experimentation

- Month 2: 5-7 assets daily implementing systematic multi-model workflows

- Month 3: 8-12 assets daily through optimized production architecture

- Month 4+: Sustained 10-15 assets daily; scalable through team coordination

Future Evolution Indicators

Emerging Capabilities:

- Advanced seed control enabling precise creative direction across models

- Improved reference passing maintaining fidelity through generation → enhancement pipelines

- Platform workflow automation (common staging patterns executed via templates)

- Collaborative features enabling team coordination across unified production systems

Professional Requirements Driving Development:

- Agency demands for coordinated multi-creator campaign production

- Brand consistency requirements across expanding asset libraries

- Format proliferation (emerging platforms, aspect ratio variations)

- Volume expectations increasing (daily social content, continuous campaign demands)

Related Articles

- Chaining AI Models Effectively

- Professional Video Production on Cliprise

- Creating Ecommerce Campaigns with AI

- Efficiency with Batch AI Generation

Understanding how AI content creation evolved from experimental prompting to systematic production workflows is the foundation for professional-grade capacity. Master these workflows to build scalable systems that sustain output velocity and consistently meet expanding creative demands. Related reading: AI Workflows for Fashion Brand Photography: Why Generative Tools Are Upending Traditional Shoots, and Most Brands Are Still Chasing the Wrong Outputs.