ElevenLabs TTS vs Text to Dialogue: Which AI Audio Model to Use

ElevenLabs has expanded its audio model lineup on Cliprise to include two distinct voice synthesis models: ElevenLabs Text to Speech (TTS) and ElevenLabs V3 Text to Dialogue. They share the same provider and the same underlying quality standard, but they are designed for fundamentally different production use cases. Using the wrong one for a task produces suboptimal results regardless of prompt quality.

This comparison explains the distinction clearly, covers when to use each, and provides a practical decision framework for audio production workflows on Cliprise.

The Core Distinction

ElevenLabs TTS is a single-speaker voice synthesis model. It converts text to speech in a single voice, with exceptional naturalness, emotional range, and clarity. It is designed for narration, voiceover, audiobooks, announcements, and any production context where one voice speaks continuously.

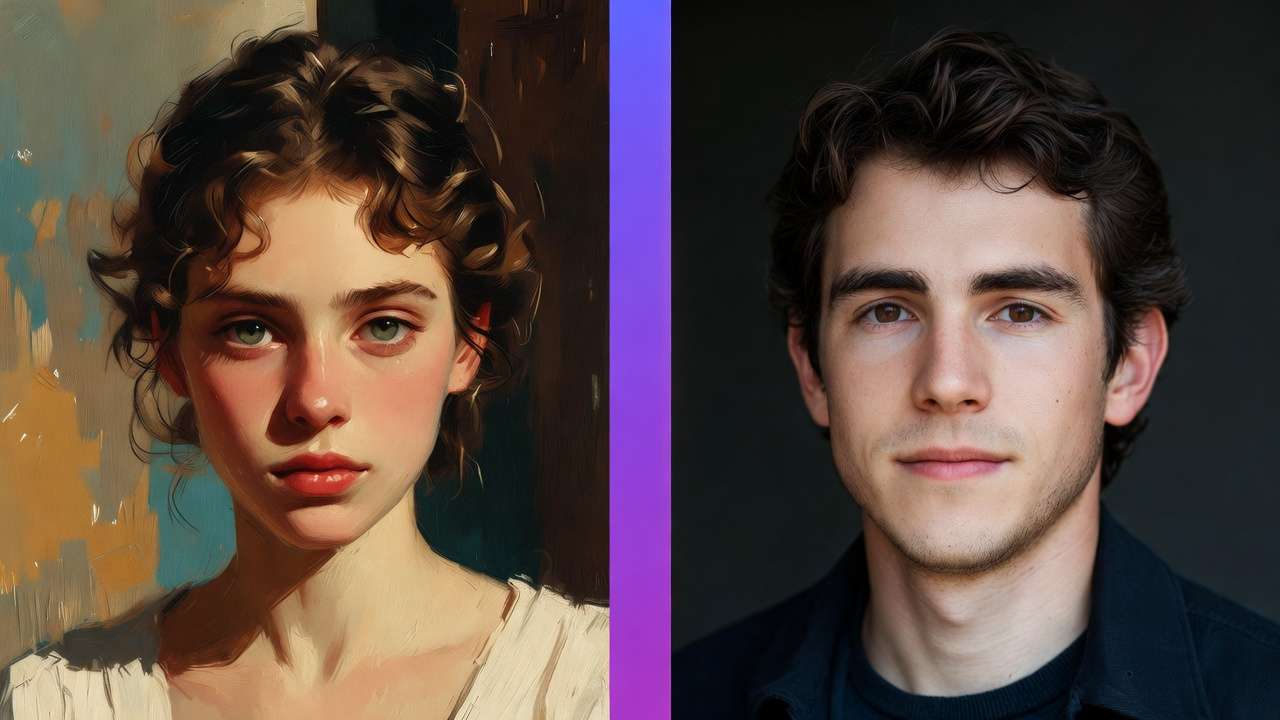

ElevenLabs V3 Text to Dialogue is a multi-speaker conversational audio model. It accepts structured dialogue scripts with speaker labels and generates a complete conversation: multiple distinct voices in a realistic exchange, with natural turn-taking, appropriate prosody per speaker, and emotional congruence from line to line. It is designed for dialogue, not narration.

These are not quality tiers of the same capability. They address structurally different audio production requirements.
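To make the difference in input shape concrete, here is a minimal sketch of turning a speaker-labeled script into structured turns. The `Name: line` label convention, the `Turn` type, and the `parse_dialogue` helper are assumptions made for illustration; the article does not specify the exact script syntax Cliprise or ElevenLabs expects.

```python
import re
from typing import NamedTuple


class Turn(NamedTuple):
    speaker: str
    text: str


# Assumed convention: each non-empty line looks like "SPEAKER: spoken text".
# This is an illustrative format, not a documented Cliprise/ElevenLabs syntax.
_LABELED_LINE = re.compile(r"^\s*([^:\n]{1,40}):\s*(.+?)\s*$")


def parse_dialogue(script: str) -> list[Turn]:
    """Split a speaker-labeled script into (speaker, text) turns."""
    turns = []
    for line in script.splitlines():
        if not line.strip():
            continue  # ignore blank lines between turns
        match = _LABELED_LINE.match(line)
        if match is None:
            raise ValueError(f"Line has no speaker label: {line!r}")
        turns.append(Turn(match.group(1).strip(), match.group(2)))
    return turns


script = """
HOST: Welcome back to the show.
GUEST: Thanks for having me.
HOST: Let's get started.
"""

turns = parse_dialogue(script)
print(len(turns))        # 3
print(turns[1].speaker)  # GUEST
```

Plain narration for TTS needs no such structure: it is a single string with one voice. Note that this simple parser treats everything before the first colon as the label, so unlabeled lines that happen to contain a colon would be misread.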

ElevenLabs TTS: Strengths and Use Cases

ElevenLabs TTS produces some of the most natural single-speaker AI voice output available in 2026. Its core capabilities:

Voice quality and naturalness. The model applies nuanced prosody, natural breathing patterns, appropriate emphasis, and emotional coloring that distinguish it from robotic TTS. Long-form narration stays engaging and natural, without the fatigue artifacts common in earlier AI voice models.

Voice library access. Full access to the ElevenLabs voice library, with hundreds of distinct voice personas across ages, genders, and accents, enables professional-quality production without custom voice cloning.

Emotional range. Narration can be directed toward different emotional tones (authoritative, warm, urgent, conversational) by adjusting generation parameters alongside the text input.

Long-form stability. Voice consistency across extended outputs (10+ minutes of narration) is reliable, without pitch drift or character change over time.

Best use cases for ElevenLabs TTS:

- Podcast narration and solo commentary

- Audiobook production

- Video voiceover and narration tracks

- Corporate training narration (single presenter)

- Explainer video audio

- Product demo voiceover

- Advertisement read-alouds

- Any content where one voice speaks throughout

ElevenLabs V3 Text to Dialogue: Strengths and Use Cases

ElevenLabs V3 Text to Dialogue applies ElevenLabs' voice quality to an entirely different production problem: generating realistic conversation between multiple distinct speakers.

Multi-speaker coherence. The model maintains distinct, consistent voice characteristics for each labeled speaker throughout the entire output, with no voice drift, no character bleed, and no inconsistency across speaker turns.

Conversational prosody. Dialogue generation applies conversational speech patterns rather than narration patterns. Natural pauses between turns, appropriate response timing, interruptions where scripted, and emotional alignment between adjacent lines are all handled as part of generation.

Speaker dynamics. The model accounts for conversational flow. The rhythm of real dialogue differs from the rhythm of narration, and the model produces audio that sounds like conversation rather than two people alternating monologue segments.

Support for up to 6 speakers. Multi-party conversations such as panel discussions, group scenes, and ensemble casts are generated coherently with up to six distinct speaker voices in a single generation.
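The speaker limit and the one-voice-per-label requirement can be checked before a script is submitted. The sketch below assumes turns are plain `(speaker, text)` tuples and that voices are referenced by name; the `assign_voices` helper and the voice names in `cast` are hypothetical placeholders, not real ElevenLabs voice IDs.

```python
MAX_SPEAKERS = 6  # limit stated in this article


def assign_voices(turns, cast):
    """Attach a voice to every turn, checking the speaker limit first.

    turns: list of (speaker, text) tuples
    cast:  dict mapping speaker label -> voice name (hypothetical names)
    """
    # dict.fromkeys keeps first-seen order while removing duplicates
    speakers = list(dict.fromkeys(speaker for speaker, _ in turns))
    if len(speakers) > MAX_SPEAKERS:
        raise ValueError(
            f"{len(speakers)} speakers found; limit is {MAX_SPEAKERS}"
        )
    missing = [s for s in speakers if s not in cast]
    if missing:
        raise ValueError(f"No voice assigned for: {missing}")
    return [
        {"speaker": speaker, "voice": cast[speaker], "text": text}
        for speaker, text in turns
    ]


panel = [
    ("MODERATOR", "Let's open with a quick round of introductions."),
    ("ANA", "I lead audio production for a games studio."),
    ("BEN", "I write interactive fiction."),
    ("MODERATOR", "Great, let's dive in."),
]
cast = {"MODERATOR": "voice-a", "ANA": "voice-b", "BEN": "voice-c"}

lines = assign_voices(panel, cast)
print(lines[3]["voice"])  # voice-a
```

Keeping the cast in one mapping means a speaker label always resolves to the same voice, which mirrors the per-speaker consistency the model is described as maintaining.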

Best use cases for ElevenLabs V3 Text to Dialogue:

- Podcast interview simulation and scripted two-host shows

- Video game NPC dialogue systems (thousands of lines across characters)

- Corporate training scenarios (simulated customer conversations, role-play exercises)

- Audio drama and scripted fiction production

- Interactive fiction branching dialogue

- E-learning conversational scenarios

- Localization of dialogue-heavy content

- Any content requiring two or more distinct speakers in conversation

Technical Comparison

| Spec | ElevenLabs TTS | ElevenLabs V3 Text to Dialogue |
|---|---|---|
| Speaker count | 1 | 2-6 |
| Input format | Plain text | Speaker-labeled script |
| Conversational prosody | No | Yes |
| Turn-taking dynamics | No | Yes |
| Voice library support | Full | Full |
| Custom voice support | Yes | Yes |
| Max output length | Long-form (30+ min) | Up to 3 min per generation |
| Output format | WAV / MP3 | WAV / MP3 |
| Max sample rate | 44.1 kHz | 44.1 kHz |
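Because Text to Dialogue is listed at up to 3 minutes per generation, longer scripts need to be split into several generations. The following is a rough sketch of one way to do that, packing whole turns into chunks by estimated duration. The 150 words-per-minute speaking rate is an assumption for estimation only, and `estimate_seconds` and `chunk_turns` are illustrative helpers; real durations depend on the voices and delivery.

```python
WORDS_PER_MINUTE = 150  # assumed speaking rate; tune against real output
MAX_SECONDS = 180       # "up to 3 min per generation" from the table


def estimate_seconds(text: str) -> float:
    """Rough spoken duration of a line, based on word count."""
    return len(text.split()) / WORDS_PER_MINUTE * 60


def chunk_turns(turns, max_seconds=MAX_SECONDS):
    """Greedily pack whole (speaker, text) turns into chunks under the limit."""
    chunks, current, used = [], [], 0.0
    for speaker, text in turns:
        cost = estimate_seconds(text)
        if cost > max_seconds:
            raise ValueError(f"Single turn by {speaker} exceeds the limit")
        if current and used + cost > max_seconds:
            chunks.append(current)  # close the current chunk
            current, used = [], 0.0
        current.append((speaker, text))
        used += cost
    if current:
        chunks.append(current)
    return chunks


# Ten turns of 100 words each, roughly 40 seconds per turn.
line = " ".join(["word"] * 100)
turns = [("HOST" if i % 2 == 0 else "GUEST", line) for i in range(10)]

chunks = chunk_turns(turns)
print([len(chunk) for chunk in chunks])  # [4, 4, 2]
```

Splitting only at turn boundaries keeps each speaker's line intact, but conversational continuity across chunk boundaries is not guaranteed, so it is worth placing splits at natural scene or topic breaks where possible.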

When Each Model Fails at the Other's Job

Using TTS for dialogue: If you need two characters to converse, generating each line separately with TTS and stitching the clips together produces technically correct audio, but it loses conversational dynamics: the timing, rhythm, and emotional continuity that make conversation feel natural. The result sounds like two monologues, not a conversation.

Using Dialogue for narration: Text to Dialogue requires speaker labels and is optimized for conversational content. For long-form continuous narration without speaker turns, TTS is the correct tool. It handles pacing, paragraph-level breathing, and sustained narrative delivery better.

Combining Both in a Single Production

Some content formats benefit from both models in a single production pipeline:

- Podcast episodes with a narrated intro/outro (TTS) and scripted conversation segments between hosts (Text to Dialogue)

- Training videos with a narrated overview (TTS) followed by simulated customer scenario dialogues (Text to Dialogue)

- Audiobooks with narrated prose (TTS) and dialogue passages generated as conversation (Text to Dialogue)

The Cliprise platform enables both models within a single credit system, making multi-model audio pipelines operationally straightforward.
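A mixed pipeline like the ones listed above comes down to routing each segment by its speaker count. The sketch below shows that routing as plain data; the `Segment` type and `choose_model` helper are hypothetical, and no Cliprise or ElevenLabs API is called.

```python
from dataclasses import dataclass

TTS = "ElevenLabs TTS"
DIALOGUE = "ElevenLabs V3 Text to Dialogue"
MAX_DIALOGUE_SPEAKERS = 6  # limit stated in this article


@dataclass
class Segment:
    title: str
    speakers: list  # speaker labels that appear in this segment


def choose_model(segment: Segment) -> str:
    """Pick a model from the number of distinct speakers in a segment."""
    count = len(set(segment.speakers))
    if count == 0:
        raise ValueError(f"Segment {segment.title!r} has no speakers")
    if count == 1:
        return TTS
    if count <= MAX_DIALOGUE_SPEAKERS:
        return DIALOGUE
    raise ValueError(
        f"Segment {segment.title!r} has {count} speakers; "
        f"limit is {MAX_DIALOGUE_SPEAKERS}"
    )


episode = [
    Segment("Intro", ["NARRATOR"]),
    Segment("Interview", ["HOST", "GUEST", "HOST"]),
    Segment("Outro", ["NARRATOR"]),
]

plan = [(segment.title, choose_model(segment)) for segment in episode]
for title, model in plan:
    print(f"{title}: {model}")
```

Deciding per segment rather than per project is what lets a narrated intro and a scripted conversation live in the same episode without forcing either model into the other's job.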

Final Verdict

Use ElevenLabs TTS for any single-speaker narration, voiceover, or monologue-format audio content.

Use ElevenLabs V3 Text to Dialogue for any content requiring realistic conversation between two or more distinct speakers.

The models are not substitutes for each other; they solve different problems. Identifying which problem you are solving, segment by segment, determines which model to use.

Related:

- ElevenLabs V3 Text to Dialogue complete guide →

- Kling AI Avatar API for text-to-talking-head video →

Explore all audio models at the Cliprise models hub.