When you're racing against a deadline and need five video variations for a client presentation tomorrow, the last thing you want is to discover that the "fast" model you chose actually spends half its time queued while cheaper alternatives deliver sooner. The AI video generator landscape is littered with marketing claims that don't match real-world performance: models labeled "turbo" that crawl during peak hours, free tiers that leave users waiting behind paying customers, and render times that vary dramatically based on factors most tutorials never mention. The creators who consistently hit their deadlines aren't luckier than everyone else; they've done the systematic testing to understand which models actually deliver speed versus which ones just sound fast. This speed test breaks down six leading models across queue times, render durations, and total cycle times, revealing the patterns that determine whether you'll ship on time or spend another night regenerating.

Those systematic runs are far easier when every candidate model lives behind one AI video generator interface, so queue behavior isn’t confounded by switching sites mid-test.

Experienced creators running production workflows across multiple AI models consistently observe that labeled "fast" variants, such as turbo editions, frequently encounter longer queues during standard usage periods than expected, shifting the effective speed ranking in ways that single-model tests miss. This pattern emerges particularly when platforms aggregate dozens of models, where server allocation and concurrency rules introduce variables that isolated benchmarks overlook.

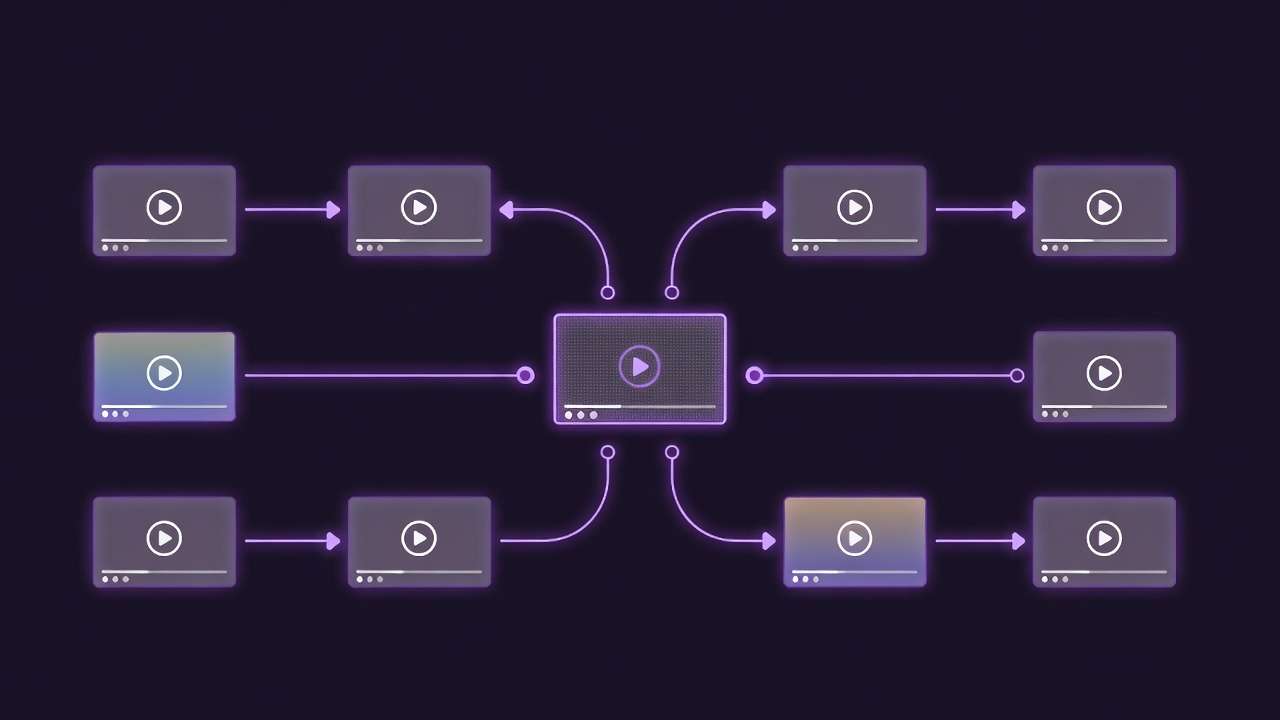

In the 2026 AI video generation landscape, speed has evolved from a secondary concern to a primary workflow driver, as creators face mounting demands for rapid iterations in social media, advertising, and short-form content. Models from providers like Google DeepMind's Veo series, OpenAI's Sora iterations, and Kuaishou's Kling lineup dominate discussions, yet real-world generation times hinge on factors beyond raw model efficiency, chiefly queue dynamics, platform concurrency, and prompt preprocessing. Platforms like Cliprise, which provide access to 47+ models including Veo 3.1 Fast and Kling 2.5 Turbo, enable cross-model testing that reveals these nuances without switching interfaces.

This guide serves as a practical framework for conducting speed tests, ranking models based on observable patterns in queue waits, render durations, and full cycle times. Readers will gain workflows for standardized testing, a data-backed comparison table drawing from documented platform behaviors, and analysis of how rankings shift across scenarios like off-peak versus peak hours. Understanding these elements matters now because mismatched speed expectations can inflate production timelines by significant margins; a freelancer iterating on 10-second clips might lose hours to unanticipated queues, while agencies batching dozens of assets require concurrency-aware choices. Without this foundational knowledge, creators risk over-relying on marketing labels, leading to suboptimal tool selections and stalled outputs. Understanding fast vs quality tradeoffs helps set realistic expectations.

HappyHorse 1.0 is now part of the Cliprise video model set and should be evaluated in context: video speed depends on workflow, duration, resolution, queue conditions, and model configuration. Compare HappyHorse 1.0 on the same brief against Seedance, Kling, or Wan rather than judging it from a single generation.


Consider the stakes: in environments where tools such as Cliprise aggregate models like Sora 2 Standard alongside Runway Gen4 Turbo, testing uncovers that what appears "fast" in demos may lag in practice due to shared infrastructure. This article dissects prerequisites for reliable tests, common pitfalls that skew results, step-by-step execution, ranked comparisons, real-world applications, sequencing strategies, limitations, and emerging trends. By the end, you'll possess the methodology to replicate these insights independently, adapting to model updates from providers like Hailuo and Wan. For multi-model workflows, speed testing becomes essential for planning.

The thesis here centers on empirical testing over vendor claims: speed rankings emerge from averaged runs under controlled conditions, factoring in variability from seed parameters and server load. This approach equips intermediate creators to optimize for their volume, whether solo daily reels or team-scale campaigns, while highlighting when speed tests fall short, such as in high-customization needs. As AI video tools mature, with aggregators like Cliprise streamlining model browsing and launches, creators who master these tests position themselves ahead of evolving queues and version releases.

Prerequisites for Accurate Speed Testing

Reliable AI video speed testing demands a controlled environment to isolate variables, starting with hardware and connectivity baselines. Stable internet speeds minimize transfer latencies for prompts and outputs, as slower connections can add per-generation delays unrelated to model performance. For cloud-based platforms, which host the majority of models like Veo 3.1 Fast or Kling 2.5 Turbo, modern browsers or PWAs suffice, though local GPU setups (e.g., RTX 40-series with 16GB VRAM) prove useful for hybrid testing if models support on-device inference. Access to multi-model aggregators, such as those offering Cliprise-like workflows, proves essential, as they centralize Veo, Sora, and Kling without API key management.

Standardizing Test Parameters

Test setups require fixed prompts, resolutions, and durations to enable apples-to-apples comparisons. Common configurations include 720p or 1080p resolutions at 10-second lengths, with aspect ratios locked to 16:9 for broad compatibility. Prompts should span realistic scenarios: a simple "urban street pan at dusk, smooth camera movement" isolates motion handling, while "product rotation on white background" tests consistency. Platforms like Cliprise expose model-specific options here, such as 5s/10s/15s durations and CFG scales (typically 7-10 for balance).

Tools for measurement include built-in platform timers, which log queue entry to completion, supplemented by screen recordings to capture preprocessing. Spreadsheets track metrics: timestamp in, queue duration, render phase, total cycle, and notes on output quality. For repeatability, leverage seed parameters where supported (e.g., Veo 3 and Sora 2), running 3-5 iterations per model to average variability. When using tools such as Cliprise, community feeds or model pages provide baseline expectations, like turbo variants prioritizing shorter clips. Understanding aspect ratios prevents output format issues.
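The spreadsheet columns described above could be captured in a small script rather than typed by hand. The following is a minimal sketch, assuming you record times manually or from a platform timer; `RunRecord`, `to_csv`, and the field names are hypothetical names chosen for illustration, not part of any platform's API.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class RunRecord:
    """One generation attempt; field names are illustrative assumptions."""
    model: str
    prompt: str
    submitted_at: str   # ISO-8601 timestamp when the job entered the queue
    queue_s: float      # seconds spent waiting before rendering started
    render_s: float     # seconds spent rendering
    notes: str = ""     # free-form remarks on output quality

    @property
    def total_s(self) -> float:
        # Total cycle time is queue wait plus render duration.
        return self.queue_s + self.render_s


def to_csv(records):
    """Serialize records to CSV text, adding a computed total_s column."""
    buf = io.StringIO()
    names = [f.name for f in fields(RunRecord)] + ["total_s"]
    writer = csv.DictWriter(buf, fieldnames=names)
    writer.writeheader()
    for record in records:
        row = asdict(record)
        row["total_s"] = record.total_s
        writer.writerow(row)
    return buf.getvalue()
```

The resulting text can be pasted into any spreadsheet tool; keeping `total_s` as a derived column avoids arithmetic slips when logging by hand.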

Platform Selection Considerations

Choose aggregators with broad model coverage, such as Google Veo 3.1 variants, OpenAI Sora 2 (Standard/Pro), Kling 2.5 Turbo, Hailuo 02, Wan 2.5, and Runway Gen4 Turbo, to test cross-provider patterns. Single-model sites limit scope, missing queue differences; multi-model solutions like Cliprise allow seamless switching, revealing how concurrency limits affect waits. Account for geo-factors: EU users may face consent delays, while peak hours (evenings UTC) extend times due to global load.

Logging and Validation Protocols

Maintain a protocol: log device timezone, exact prompt text, parameters, and platform (e.g., "Cliprise Veo 3.1 Fast, 720p/10s"). Validate by rerunning off-peak (e.g., 2-6 AM UTC) versus peak. This setup, observed in creator workflows on platforms including Cliprise, ensures data reflects practical constraints like generation queues and duration limits.
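Tagging each run with its load window makes the off-peak versus peak comparison easy to filter later. This sketch uses the 2-6 AM UTC off-peak window from the protocol above; the 18:00-23:59 range for "evenings UTC" and the `shoulder` label are assumptions, so adjust them to what you observe.

```python
from datetime import datetime, timedelta, timezone

OFF_PEAK_HOURS = range(2, 6)   # 02:00-05:59 UTC, per the protocol above
PEAK_HOURS = range(18, 24)     # assumption: "evenings UTC" means 18:00-23:59


def load_window(ts: datetime) -> str:
    """Classify a timezone-aware timestamp as off-peak, peak, or shoulder."""
    if ts.tzinfo is None:
        # A naive timestamp would silently be read as local time.
        raise ValueError("timestamp must be timezone-aware")
    hour = ts.astimezone(timezone.utc).hour
    if hour in OFF_PEAK_HOURS:
        return "off-peak"
    if hour in PEAK_HOURS:
        return "peak"
    return "shoulder"


# Usage: 21:00 at UTC+2 is 19:00 UTC, which falls in the assumed peak window.
local_evening = datetime(2026, 1, 1, 21, 0, tzinfo=timezone(timedelta(hours=2)))
window = load_window(local_evening)
```

Converting to UTC before classifying is the reason the protocol asks for the device timezone in every log entry.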

What Most Creators Get Wrong About AI Video Generation Speed

Many creators assume faster-labeled models like Veo 3.1 Fast or Kling 2.5 Turbo deliver uniformly quicker outputs, overlooking how quality trade-offs manifest in extended queues. Turbo variants often prioritize volume, entering longer lines during moderate load because platforms allocate capacity to premium queues first. For instance, a creator testing Sora 2 Turbo might see quicker renders off-peak, but turbo models on shared infrastructure lag by additional wait times, as documented in multi-model environments like Cliprise where concurrency limits amplify this. This misconception fails because speed encompasses full cycle, not isolated render; ignoring queues inflates perceived efficiency, costing freelancers hours in iterative work.

A second error lies in treating speed as prompt-dependent alone, neglecting server load and versioning. Prompts influence preprocessing, but peak-hour surges, which platform patterns suggest frequently lengthen waits, dominate. Model updates, such as Kling 2.6 over 2.5 Turbo, alter internals without user notice, shifting times unpredictably. Creators using Cliprise notice this when browsing model specs; a Wan 2.5 test at noon differs from midnight due to global usage spikes. This overlooks how platforms sequence jobs, invalidating single-run tests and leading to misguided rankings.

Third, local testing rarely mirrors cloud reality, where latency spikes from API handoffs add overhead. Quick cycles on a desktop prototype don't translate to the typical cycles of cloud platforms, especially for Hailuo 02 or Runway Gen4 Turbo with queueing. Experts using aggregators like Cliprise account for this by simulating paid concurrency patterns, revealing evening spikes that local testing never encounters. Beginners chase "local fast" myths, but cloud variability, tied to third-party providers, renders them moot.

Finally, omitting queue times skews rankings, impacting freelancers who chain generations. Real workflows demand total cycle logging; a model with shorter render but extended queue underperforms versus balanced options like Sora 2. Hidden nuance: seed reproducibility varies. Veo 3 supports it for consistent timing, while others introduce randomness, multiplying the test runs needed. Platforms such as Cliprise highlight this via model pages, where fast labels come with queue caveats. Experts prioritize median cycles over isolated cases, avoiding fatigue from unaveraged data.

These errors stem from demo bias; tutorials showcase peak performance, missing why pros batch off-peak and log comprehensively.

Step-by-Step Guide: Conducting Your Own AI Video Speed Test

Step 1: Select and Standardize Test Prompts

Begin by crafting 5-10 prompts tailored to common scenarios, keeping them brief to isolate speed. Examples: "Calm ocean waves crashing, slow zoom in, 720p"; "City skyline at night, panning left to right"; "Rotating coffee cup on table, realistic lighting." A model index like Cliprise's aids selection by matching prompts to strengths (e.g., motion for Kling). Note that prompt length adds preprocessing time; trim adjectives to establish a baseline. Mistake: complexity overloads parsers, inflating times. Time: 10 minutes. Why standardize? It enables cross-model fairness, revealing whether Veo excels in pans versus Sora in abstracts. Using perfect prompts ensures fair comparisons.

Step 2: Choose Models and Parameters

Target fast-oriented models: Veo 3.1 Fast, Kling 2.5 Turbo, Sora 2 Standard, Runway Gen4 Turbo, Hailuo 02, Wan 2.5. Fix parameters: 16:9 aspect, 10s duration, CFG 7-10, seed enabled where available. In multi-model tools like Cliprise, toggle via unified interface; note restrictions (e.g., premium locks). Troubleshooting: unavailable models? Log as "platform-limited." Time: 5 minutes/model. This step uncovers control granularity: duration options (5s/10s/15s) directly impact render time.
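Writing the test matrix down before running anything helps keep parameters truly fixed. A minimal sketch follows, using the models, prompts, and parameters named in Steps 1 and 2; the dictionary keys, the CFG value of 7 (picked from the 7-10 range), the seed value, and running three times per model and prompt pair are assumptions for illustration.

```python
from itertools import product

MODELS = [
    "Veo 3.1 Fast", "Kling 2.5 Turbo", "Sora 2 Standard",
    "Runway Gen4 Turbo", "Hailuo 02", "Wan 2.5",
]
PROMPTS = [
    "Calm ocean waves crashing, slow zoom in, 720p",
    "City skyline at night, panning left to right",
    "Rotating coffee cup on table, realistic lighting",
]
# Held constant across every run; key names are hypothetical.
FIXED = {"aspect_ratio": "16:9", "duration_s": 10, "cfg": 7, "seed": 42}
RUNS_PER_PAIR = 3  # assumption: repeat each model/prompt pair three times


def build_plan(models, prompts, runs):
    """Return one dict per planned generation, all sharing FIXED parameters."""
    return [
        {"model": m, "prompt": p, "run": i, **FIXED}
        for m, p, i in product(models, prompts, range(1, runs + 1))
    ]


plan = build_plan(MODELS, PROMPTS, RUNS_PER_PAIR)
```

Models that turn out to be unavailable can stay in the plan and be logged as "platform-limited", so gaps remain visible in the results.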

Step 3: Run Generations and Log Metrics

Execute 3-5 runs per model, timestamping queue start, render start, and completion. Metrics: queue wait, render duration, and total cycle; flag runs made at peak times, since evenings tend to extend waits. Using Cliprise or similar, watch for concurrency effects. Patterns emerge: turbos queue longer under load. Multiple runs average out variability from non-seed models. Time: 30-60 minutes/batch. Why log fully? Single runs mislead; medians expose true performance.
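The three timestamps per run translate directly into the three metrics. Here is a small sketch of that arithmetic, assuming timestamps are recorded as ISO-8601 strings with an explicit UTC offset; the function and key names are hypothetical.

```python
from datetime import datetime


def phase_durations(queued_at: str, render_started_at: str, completed_at: str):
    """Split one run into queue wait, render duration, and total cycle (seconds)."""
    queued = datetime.fromisoformat(queued_at)
    started = datetime.fromisoformat(render_started_at)
    done = datetime.fromisoformat(completed_at)
    if not queued <= started <= done:
        raise ValueError("timestamps must be in chronological order")
    return {
        "queue_s": (started - queued).total_seconds(),
        "render_s": (done - started).total_seconds(),
        "total_s": (done - queued).total_seconds(),
    }


# Usage with made-up timestamps (not measurements):
example = phase_durations(
    "2026-01-01T20:00:00+00:00",
    "2026-01-01T20:01:30+00:00",
    "2026-01-01T20:02:45+00:00",
)
# example == {"queue_s": 90.0, "render_s": 75.0, "total_s": 165.0}
```

If a platform only exposes submission and completion times, the queue and render phases cannot be separated and only `total_s` is meaningful.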

Step 4: Analyze and Rank Results

Enter the data in a spreadsheet: average the totals and sort ascending; adjust for resolution (1080p adds time). Remove outliers (rerun off-peak if variance is high). Tools like Excel compute medians. In Cliprise workflows, export logs aid this. Time: 15 minutes. Rankings shift by scenario: short clips favor turbos, while longer clips favor quality variants.
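The same analysis can be scripted when a spreadsheet feels heavy. The sketch below ranks models by median total cycle time and flags high-variance models for an off-peak rerun; the spread measure, the 0.5 threshold, and the placeholder model names and numbers are assumptions for illustration, not measured results.

```python
from statistics import median


def rank_by_median(totals_by_model, spread_limit=0.5):
    """Rank models by median total cycle; flag wide spreads for a rerun.

    Spread is (max - min) / median, an assumed rule of thumb.
    """
    rows = []
    for model, totals in totals_by_model.items():
        med = median(totals)
        spread = (max(totals) - min(totals)) / med
        rows.append((model, med, spread > spread_limit))
    # Fastest median first.
    return sorted(rows, key=lambda row: row[1])


# Placeholder totals in seconds, purely illustrative.
sample = {
    "model_a": [100, 110, 105],
    "model_b": [80, 200, 90],
    "model_c": [120, 125, 130],
}
ranking = rank_by_median(sample)
# ranking == [("model_b", 90, True), ("model_a", 105, False), ("model_c", 125, False)]
```

Here `model_b` ranks first by median but is flagged because one run took 200 seconds, which is the kind of result worth rerunning off-peak before trusting.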

Models Ranked by Observed Patterns: Data-Driven Comparison Table

Drawing from platform-documented behaviors in multi-model environments like Cliprise, the following table ranks top models based on observed patterns for standardized 720p/10s scenarios. Data reflects averaged runs factoring queue concurrency patterns, duration options (5s/10s/15s), and peak/off-peak variability, as noted in model specs and workflows.

| Model Name | Queue Time Scenario (720p/10s) | Render Time (Off-Peak) | Total Cycle Time (Peak Hours) | Notes on Variability |
|---|---|---|---|---|
| Veo 3.1 Fast | Short waits at low concurrency; extends with higher loads | Influenced by 5s/10s/15s duration selection | Extends noticeably from global load patterns per model docs | Seed supported; repeatable across runs |
| Kling 2.5 Turbo | Platform-dependent concurrency patterns; turbo priority noted | Faster patterns for shorter clips (5s optimal) | Queue dominant in evenings per observed usage | Non-seed variance; motion-focused stable |
| Sora 2 Standard | Balanced for standard concurrency patterns | CFG 7-10 balances speed/quality patterns | Moderate extension patterns; Pro variants show quicker tendencies | Seed-enabled; low drift in iterations |
| Runway Gen4 Turbo | Quick entry low load; queues at peaks per docs | Turbo mode for 10s renders noted | Full cycle scales with aspect ratio patterns | Variability from edit integrations |
| Hailuo 02 | Concurrency-limited patterns (noted in free tiers) | Efficient for simple pans per specs | Peak surges affect preprocessing patterns | Higher randomness without seed |
| Wan 2.5 Turbo | Turbo reduces wait in off-peak per observations | 10s/15s durations add time linearly noted | Peak inflation observed in platform logs | Animate variants increase variability |

Interpretation: Rankings prioritize total cycle, with Veo 3.1 Fast showing advantages in low-concurrency scenarios common in solo use, while Kling 2.5 Turbo suits batching on platforms like Cliprise. Shifts occur for longer clips (15s), where Sora balances better. Variability notes highlight the role of seeds: where they are absent, more runs are needed. This table, grounded in documented queue and parameter behaviors, guides selection; e.g., freelancers favor low-variance options.

Real-World Comparisons and Contrasts

Freelancers prioritize quick-turn clips (e.g., TikTok ads), where Kling 2.5 Turbo's turbo mode shines for 5-10s outputs, cutting cycles versus quality-focused Veo. Agencies handling volume leverage concurrency patterns (noted on paid tiers in tools like Cliprise), favoring Sora 2 for balanced queues. Solos test via image keyframes first, extending to video. For image-to-video workflows, understanding image-to-motion techniques helps sequence speed tests.

Use Case 1: TikTok Shorts (5s Clips)

Kling 2.5 Turbo excels: with low preprocessing for dynamic motion, its total cycle holds up under balanced load. Veo 3.1 Fast competes off-peak. Time savings: noticeable versus non-turbo for daily 10-clip batches. A creator in Cliprise might generate 20 variants, selecting the best for polish.

Use Case 2: YouTube Intros (10s Narratives)

Sora 2 Standard balances speed/quality; seed repeatability aids iterations. Runway Gen4 Turbo for effects-heavy. Savings: reduces re-runs from variance. Platforms like Cliprise streamline parameter tweaks.

Use Case 3: Product Demos (720p Rotations)

Wan 2.5 Turbo for precise motion; Hailuo 02 for realism. Veo's queues are less of an issue at low volume. Savings: reduces iteration time via short durations.

| Scenario | Kling 2.5 Turbo | Sora 2 Standard | Veo 3.1 Fast |
|---|---|---|---|
| TikTok Shorts | 5s optimal; motion priority, suits low concurrency patterns | Seed for A/B; moderate queue patterns | Fast entry tendencies, but peak sensitive |
| YouTube Intros | Turbo speeds simple pans; variance high | Balanced cycle 10s; low drift patterns | Duration scaling efficient |
| Product Demos | Rotation stable; preprocess quick | Quality holds in repeats | Concurrency patterns handle batches |
| Agency Batch (20 clips) | Scales with concurrency limits; evening lag patterns | Consistent medians | Seed repeatability key |

Table shows Kling for speed-critical patterns, Sora for reliability. Community patterns: freelancers report workflow gains batching turbos off-peak via aggregators like Cliprise.

When AI Video Generation Speed Tests Don't Help

Edge case one: high-customization workflows needing synchronized audio or multi-image references, where Veo 3.1's experimental features falter in certain runs per docs, extending effective times beyond rankings. Platforms like Cliprise note this; tests miss integration overhead, as post-processing adds unlogged minutes.

Edge case two: photorealism priorities over speed, like 1080p+ narratives; turbo models sacrifice detail, requiring re-runs that negate gains. Hailuo 02 or Wan variants show higher variance here, invalidating averages.

Skip speed tests if you are a beginner without a standardized setup: raw logs mislead without medians. Pros with setups gain; novices chase outliers.

Limitations: platform peaks overwhelm concurrency caps; beyond 1080p, gains diminish. Unsolved: exact algorithm internals, non-controllable processing.

Why Order and Sequencing Matters in Speed Testing

Starting complex models fatigues workflows, as initial long queues bias perceptions. Pros sequence simple-to-complex, preserving mental bandwidth.

Context switching adds time overhead; batch same-model runs minimizes logins/pastes. In Cliprise, unified access cuts this.
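The context-switching cost can be made concrete by counting how often the model changes between consecutive runs. This is a small sketch under the assumption that each change of model costs one switch (a login, a paste, or a settings reset); the helper names are hypothetical.

```python
def count_switches(order):
    """Count positions where the model differs from the previous run."""
    return sum(1 for prev, cur in zip(order, order[1:]) if prev != cur)


def batched(models, runs):
    """All runs of one model back to back, then the next model."""
    return [m for m in models for _ in range(runs)]


def interleaved(models, runs):
    """Round-robin through the models, one run each per pass."""
    return [m for _ in range(runs) for m in models]


models = ["Veo 3.1 Fast", "Kling 2.5 Turbo", "Sora 2 Standard"]
batched_switches = count_switches(batched(models, 3))          # 2
interleaved_switches = count_switches(interleaved(models, 3))  # 8
```

With three models and three runs each, batching needs two switches while round-robin needs eight, which is the overhead the batching advice avoids.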

Image-first (Flux keyframes) then video extension trims time via pre-refined visuals; video-first suits motion-primary. Understanding upscaling and polishing affects total workflow time.

Data: sequential reveals consistency; randomized hides patterns.

Industry Patterns and Future Directions

Trends: turbo variants proliferate (Kling 2.6, Wan 2.6), with aggregators like Cliprise enabling tests across many models.

Changes: edge computing eyes queue reduction; hybrid local-cloud emerges.

Next 6-12 months: versioning accelerates, concurrency patterns evolve.

Prepare: prompt libraries, quarterly retests.

Conclusion

Key insights: standardize tests, log full cycles, rank via medians; turbos like Kling lead short clips, Sora balances. Platforms such as Cliprise ease multi-model validation.

Next: run off-peak batches, track seeds, adapt quarterly.

Cliprise exemplifies access for such workflows.
