What if you could hit "regenerate" a dozen times and still get outputs that match each other pixel for pixel? Most creators treat AI generation as a slot machine: pull the lever, hope for gold, discard the dross. But a hidden control mechanism turns chaotic randomness into predictable precision: seed values. When a brand team needs twenty product images with identical lighting, composition, and mood, those who understand seeds in an AI photo generator finish in minutes while others burn through credits chasing consistency. The difference isn't talent or better prompts; it's knowing how to anchor the generation process itself. This isn't theoretical tinkering. It's the difference between hoping for reproducible results and engineering them on demand.

Seed values act as initialization points for the pseudo-random number generators (PRNGs) that drive AI generation in diffusion models, transformers, and similar architectures. In a typical diffusion process, generation begins with pure noise (a latent representation in models like Stable Diffusion derivatives or Veo). The model then iteratively denoises it, guided by the prompt. The seed determines the exact noise pattern at step zero, and that pattern shapes the entire sampling trajectory.

How Seeds Initialize Noise and Sampling Paths

Take a diffusion-based image generator such as Flux 2. Enter a prompt like "corporate logo in blue tones, minimalist," set the aspect ratio to 1:1, and apply a seed of 12345. The PRNG uses this seed to produce a reproducible noise tensor. On the CPU this is often a Mersenne Twister (PyTorch's CPU generator is one example), while on CUDA GPUs it is typically a counter-based generator such as Philox. Hold the other parameters fixed, such as steps (e.g., 20-50) and CFG scale (e.g., 7-12). The output then stays consistent across runs on the same hardware and software stack. The same idea extends to video models like Sora 2, where the seed fixes the per-frame noise and aids motion coherence in 5-10 second clips.
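The core mechanism can be shown in a few lines. This is a minimal sketch under simplifying assumptions: the "latent" is a small grid of floats rather than a framework tensor, and Python's `random.Random` (a Mersenne Twister) stands in for the model's PRNG. The helper `make_noise` is hypothetical.

```python
import random

def make_noise(seed: int, shape: tuple[int, int]) -> list[list[float]]:
    """Draw a Gaussian noise grid from a private, seeded generator.

    random.Random is a Mersenne Twister: the same seed always yields the
    same sequence on the same Python version, regardless of global state.
    """
    rng = random.Random(seed)  # dedicated generator, unaffected by random.seed()
    rows, cols = shape
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]

a = make_noise(12345, (4, 4))
b = make_noise(12345, (4, 4))
c = make_noise(54321, (4, 4))

print(a == b)  # True: same seed, identical starting noise
print(a == c)  # False: different seed, different starting noise
```

Using a private generator rather than the module-level `random` functions is a deliberate choice. Other code that happens to draw random numbers cannot shift the sequence and silently break reproducibility.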

Why does this matter? Without a fixed seed, each generation samples fresh noise, so even identical prompts yield different outputs. In creator tests shared as Discord logs, random seeds produced substantial stylistic divergence in character renders. Fixing the seed removes that variable, which makes it possible to A/B test the prompt alone. For brands seeking a consistent visual identity, understanding how seeds create consistency is essential to professional workflows.

Parameters Influenced by Seeds

Seeds primarily affect:

- Noise Patterns: Initial Gaussian noise distribution, critical for texture and composition.

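- Sampling Paths: With deterministic samplers such as DDIM (at eta = 0) or PLMS, the whole denoising route follows from the seeded starting noise. Stochastic samplers also draw their per-step noise from the seeded generator, so with either kind of sampler the route changes when the seed changes.

- Output Consistency: With the seed fixed, changes to the prompt or CFG reveal their isolated impact.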
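All three effects can be seen in a toy sampler. This is a sketch only: `toy_sample` is hypothetical, `prompt_bias` is a scalar stand-in for the prompt, and the "predictions" are simple formulas rather than a neural network. The loop does use the real classifier-free guidance mix, and its `eta` flag loosely mirrors DDIM's eta (0 means deterministic, above 0 means seeded per-step noise).

```python
import random

def toy_sample(prompt_bias: float, seed: int, steps: int = 20,
               cfg: float = 7.5, eta: float = 0.0) -> float:
    """Toy 1-D sampler showing how a seed pins down the whole trajectory.

    prompt_bias stands in for the prompt's pull; it is not a real model.
    eta > 0 loosely mimics stochastic schedulers that inject fresh noise
    each step; those draws come from the same seeded generator.
    """
    rng = random.Random(seed)
    x = rng.gauss(0.0, 1.0)  # step-zero noise, fixed entirely by the seed
    for _ in range(steps):
        uncond = -0.1 * x                        # toy unconditional prediction
        cond = uncond + 0.1 * (prompt_bias - x)  # toy prompt-conditioned prediction
        guided = uncond + cfg * (cond - uncond)  # classifier-free guidance mix
        x += guided / steps
        if eta > 0:
            x += eta * rng.gauss(0.0, 1.0) / steps  # per-step noise, still seeded
    return x

base = toy_sample(prompt_bias=1.0, seed=42)
print(base == toy_sample(prompt_bias=1.0, seed=42))  # True: identical rerun
print(base == toy_sample(prompt_bias=1.0, seed=43))  # False: new starting noise
print(base == toy_sample(prompt_bias=1.2, seed=42))  # False: only the prompt changed
```

The last line is the toy equivalent of a prompt A/B test. The starting noise is identical, so any difference in the result comes from the prompt.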

In latent diffusion (e.g., Midjourney via API), seeds set the noise in a compressed latent space. A small change in the seed can therefore become a large visible difference once the latent is decoded. Transformer-based models like Imagen 4 carry the seeded noise through their attention layers, sometimes yielding subtler variations. When you work with multiple models, knowing how to balance seeds against other parameters prevents workflow friction.

Comparing Seed Mechanics Across Model Families

Latent diffusion models (Flux, the Stable Diffusion lineage) offer strong control: the same seed and prompt often yield pixel-level matches on identical setups. Transformer-heavy video models (Sora 2, Kling 2.5) show frame-to-frame stability but drift between runs because of their temporal layers. Hybrids such as Veo 3.1 Quality sit between the two, and user benchmarks report strongly consistent seeded reruns in many cases. Comparing how each model handles seeds helps you decide which one suits your workflow.

Platforms like Cliprise expose seed controls through a unified interface, letting users apply seeds across Veo 3.1 Fast or Hailuo 02 without reconfiguration. A common observation is that fixed seeds produce near-identical outputs under controlled conditions, for example when regenerating a product shot sequence.

Mental Model: Seeds as Workflow Anchors

Think of seeds as bookmarks in a choose-your-own-adventure book. The prompt sets the story, the parameters set the rules, and the seed picks the starting page. Change the seed and you jump to a different path; keep it fixed and you retrace the same one. For video, the seed acts as a storyboard anchor: seed the first frame, then extend from it consistently.
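For multi-shot video, one way to keep every clip tied to a single bookmark is to record one base seed and derive per-shot seeds from it deterministically. This is a sketch under that assumption. `derive_seed` is hypothetical, and the 32-bit range is an assumption, since platforms accept different seed ranges.

```python
import hashlib

def derive_seed(base_seed: int, label: str, bits: int = 32) -> int:
    """Derive a reproducible child seed from one base seed plus a label.

    Hypothetical helper: hashing "base_seed:label" gives each shot its own
    seed, yet the whole storyboard can be rebuilt from base_seed alone.
    """
    digest = hashlib.sha256(f"{base_seed}:{label}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % (2 ** bits)

storyboard = {shot: derive_seed(99, shot) for shot in ("keyframe", "shot-1", "shot-2")}
for shot, seed in storyboard.items():
    print(shot, seed)
```

Reusing the base seed for every shot is the simpler alternative. Deriving distinct seeds avoids giving every clip identical starting noise while keeping the set fully recoverable.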

Example 1: Image set for Instagram carousel. Seed 42 on Flux 2 Pro yields consistent athlete poses across 9 variants (prompt tweaks only). Random seeds scatter poses, requiring 5+ regenerations.

Example 2: Video thumbnail matching. Generate a keyframe with Imagen 4 (seed 99), then extend it in Runway Gen4 Turbo. The seeded keyframe preserves the lighting for a seamless promo.

Example 3: Audio-synced clip via ElevenLabs TTS plus a video model. Fixing the video seed keeps the visual rhythm stable across regenerations, so it stays aligned with the same voice waveform.

In multi-model environments like Cliprise, seeds bridge gaps. You can start with a seeded Qwen Image Edit output, then pass it as a reference to Luma Modify. This is why seeds underpin professional reproducibility, not just novelty.

What Most Creators Get Wrong About Seed Values

Misconception 1: Seeds Guarantee Pixel-Perfect Replication Everywhere

Many creators assume seeds guarantee pixel-perfect replication across all models, but floating-point precision and hardware differences intervene. On GPU vs. CPU, or NVIDIA vs. AMD, the same seed 12345 in Flux 2 might shift edges by 1-2 pixels because tensor operations round differently. Frameworks also often use different random generators on CPU and GPU, so the starting noise itself can differ. User reports on Reddit document occasional mismatches even in single-platform runs, and more frequent ones across devices. Platforms like Cliprise mitigate this with standardized APIs, yet model internals vary: Veo 3.1 Quality holds tighter than Kling Turbo.
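The rounding effect behind those small shifts is easy to reproduce in plain Python. Floating-point addition is not associative, so summing the same numbers in a different order can change the last bits. This is an illustration of the principle, not a measurement of any particular model.

```python
import math

# Floating-point addition is not associative: summing the same values in a
# different order (as differently scheduled parallel reductions may) changes
# the result in the last bits.
left = (0.1 + 0.2) + 0.3
right = 0.1 + (0.2 + 0.3)
print(left, right)                # 0.6000000000000001 0.6
print(left == right)              # False
print(math.isclose(left, right))  # True: tiny, but not zero
```

A denoising run performs enormous numbers of such sums. Hardware or kernels that order them differently can leave two "identical" seeded runs slightly apart.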

Misconception 2: Higher Seed Numbers Yield Better Quality

No evidence links seed magnitude to output quality. A well-designed PRNG produces statistically equivalent noise from any seed, whether 1 or 1,000,000. Creator experiments shared on forums show zero correlation: in Ideogram V3, seed 999999 generates muddy logos as often as seed 1. Quality depends on the prompt and CFG, not the seed value. Beginners chase "lucky" high seeds and waste cycles, while experts randomize within ranges for diversity. Learning multi-model prompt strategies proves more valuable than seed hunting.
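A quick sanity check of this claim uses Python's Mersenne Twister as a stand-in for a model's PRNG, which is an assumption since real pipelines use framework generators. The sketch draws Gaussian noise from small and large seeds and compares summary statistics. Expect every mean near 0 and every standard deviation near 1, with no trend by seed size.

```python
import random
import statistics

def noise_stats(seed: int, n: int = 10_000) -> tuple[float, float]:
    """Mean and population standard deviation of n seeded Gaussian draws."""
    rng = random.Random(seed)
    samples = [rng.gauss(0.0, 1.0) for _ in range(n)]
    return statistics.fmean(samples), statistics.pstdev(samples)

for seed in (1, 42, 999_999):
    mean, std = noise_stats(seed)
    print(f"seed={seed:>7}  mean={mean:+.3f}  std={std:.3f}")
```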

Misconception 3: Seeds Replace Prompt Engineering

Seeds stabilize results but don't rescue weak prompts. A vague "modern office" prompt with a fixed seed reproduces the same image. At low CFG (below about 5), however, the prompt exerts little pull, so small wording edits swing the output unpredictably and prompt drift dominates. Consider a brand video where "dynamic team meeting" plus a seed yields inconsistent actions across shots. Refining the prompt to "four diverse professionals brainstorming around glass table, dynamic camera pan" unlocks the seed's value. In Cliprise workflows, users pair seeds with negative prompts ("blurry, distorted") for better alignment.

Misconception 4: Uniform Handling Across Platforms

Implementations differ: some tools hash seeds per session, others per job. Across platforms, a Midjourney API seed does not correspond to the same number entered natively in Flux. Different models and numeric precision (quantization) mean the value does not transfer. Multi-step chains suffer too: seed a video in Sora 2, upscale it in Topaz, and drift creeps in. A less obvious technique is batch seeding for A/B tests. Agencies script seed pools (e.g., seeds 100-200) and generate around 20 variants per hour, testing ad copy without style variance. Beginners tend to overlook this and stick to single seeds.
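The key to seed-pool A/B testing is that every copy variant gets the same seeds. This is a minimal sketch. The job format and `ab_jobs` helper are hypothetical, not any platform's API, and the seed range simply mirrors the example above.

```python
from itertools import product

def ab_jobs(copy_variants: list[str], seed_start: int, seed_count: int) -> list[dict]:
    """Pair every ad-copy variant with the same pool of consecutive seeds.

    Hypothetical job format: because each variant is rendered from an
    identical seed pool, differences between groups reflect the copy,
    not the starting noise.
    """
    seeds = range(seed_start, seed_start + seed_count)
    return [{"ad_copy": copy, "seed": seed} for copy, seed in product(copy_variants, seeds)]

jobs = ab_jobs(["Fresh roast, every morning", "Your barista, on demand"],
               seed_start=100, seed_count=10)
print(len(jobs))  # 20
print(jobs[0])    # {'ad_copy': 'Fresh roast, every morning', 'seed': 100}
```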

Seeds reward discipline, so test on the target hardware first. For instance, a freelancer using a multi-model tool like Cliprise might validate seed 56 across Flux Kontext Pro and Grok Upscale before client delivery, avoiding substantial rework.
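Validation can be as simple as comparing two seeded renders within a tolerance. This sketch assumes both outputs have already been decoded into flattened lists of 8-bit channel values, for example with an image library. The helper names and the 2-level tolerance are illustrative assumptions.

```python
def max_abs_diff(run_a: list[int], run_b: list[int]) -> int:
    """Largest per-channel difference between two flattened 8-bit images."""
    if len(run_a) != len(run_b):
        raise ValueError("outputs differ in size; compare same-resolution renders")
    return max((abs(a - b) for a, b in zip(run_a, run_b)), default=0)

def seed_holds(run_a: list[int], run_b: list[int], tolerance: int = 2) -> bool:
    """True if two renders from the same seed agree within the tolerance."""
    return max_abs_diff(run_a, run_b) <= tolerance

reference = [10, 200, 37, 255]  # stand-in for a decoded render on machine A
candidate = [11, 199, 37, 255]  # same seed and settings on machine B
drifted = [10, 180, 37, 255]

print(seed_holds(reference, candidate))  # True: max difference is 1
print(seed_holds(reference, drifted))    # False: max difference is 20
```

A small nonzero tolerance accepts the rounding-level differences described earlier while still flagging genuine drift.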

Real-World Applications: How Brands Leverage Seeds for Consistency

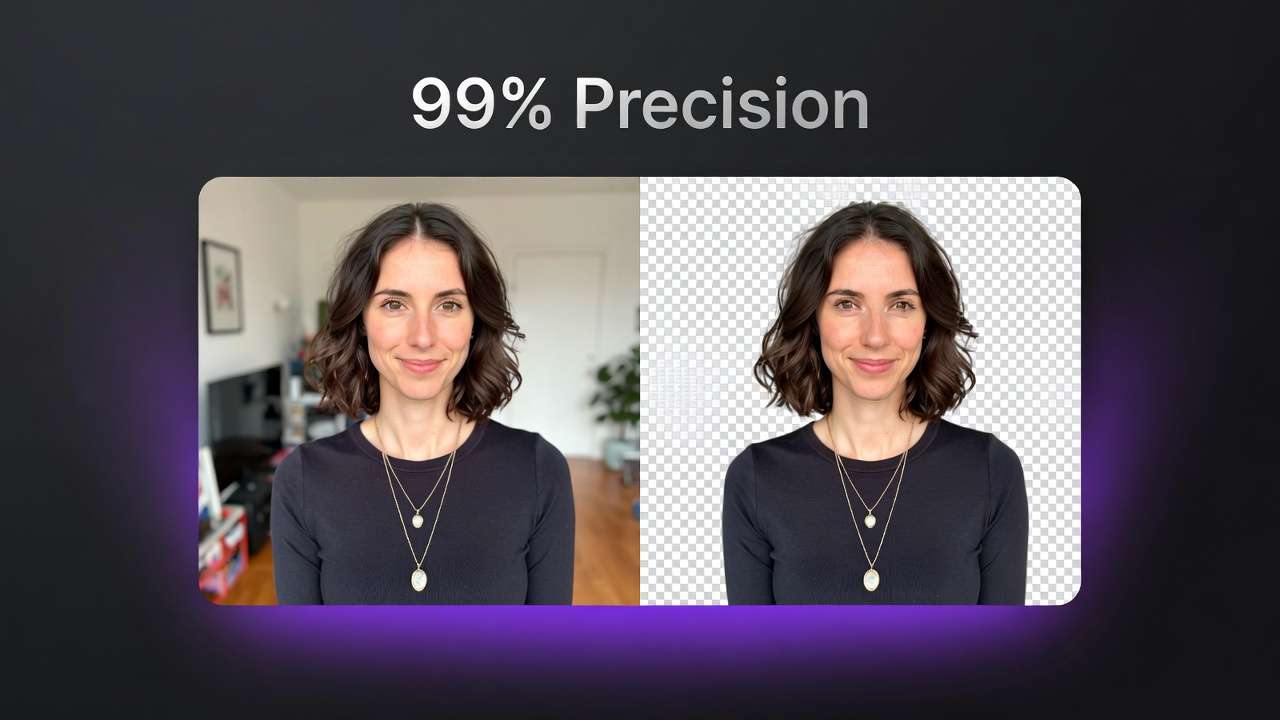

Freelancers prioritize rapid iteration. They fix seeds for client mock-ups (e.g., 10 logo variants in Recraft Remove BG) and revise only the prompts. Agencies build approval pipelines with batched seeds, versioning them for stakeholders. In-house teams curate seed libraries for seasonal assets and reuse them across campaigns. When exploring multi-model workflows, seed consistency becomes a bridge between different generation tools.
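A seed library is only useful if it records everything needed to rerun the job. This sketch stores one entry as JSON. The `SeedRecord` fields are an illustrative assumption rather than any platform's schema. The model version is included because, as covered below, model updates can invalidate old seeds.

```python
import json
from dataclasses import asdict, dataclass

@dataclass(frozen=True)
class SeedRecord:
    """One entry in a hypothetical seed library; field names are illustrative."""
    asset: str
    model: str
    model_version: str  # record it: a model update can invalidate old seeds
    prompt: str
    negative_prompt: str
    seed: int
    steps: int
    cfg_scale: float
    aspect_ratio: str

record = SeedRecord(
    asset="spring-hero",
    model="Flux 2 Pro",
    model_version="example-version",  # placeholder; store what the platform reports
    prompt="corporate logo in blue tones, minimalist",
    negative_prompt="blurry, distorted",
    seed=12345,
    steps=30,
    cfg_scale=7.5,
    aspect_ratio="1:1",
)

serialized = json.dumps(asdict(record), indent=2)  # save alongside the campaign assets
restored = SeedRecord(**json.loads(serialized))
print(restored == record)  # True: every setting survives the round trip
```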

Use Case 1: Social Media Ad Variants

Generate 10 image sets for Facebook ads. A fixed seed keeps the character (e.g., a smiling barista) in matching poses across the coffee product angles. The work takes 15-20 minutes, versus 45+ with random seeds. Creators also report that it keeps click-through rates more consistent.

Use Case 2: Video Keyframes

Fixing one seed per 5-15 s clip in Veo 3 keeps motion stable, for example in a product-unboxing pan. The approach carries over to Hailuo 02 without recuts. Platforms like Cliprise make this easier by listing the seed parameters available for each model.

Use Case 3: Product Mockups

Imagen 4 Ultra can produce reproducible lighting and angles across 50+ e-commerce shots. According to workflow logs, a seed-fixed workflow significantly reduces time-to-final compared with random seeds.

Comparison Table: Seed Strategies Across Workflows

| Workflow Type | Seed Usage Pattern | Time Savings Scenario | Consistency Approach | Suitable For |
|---|---|---|---|---|
| Freelance Iterations | Single seed per variant set | Cutting revisions from multiple to few | Matching across same-model runs (e.g., Flux 2 Pro at 14 credits) | Quick client mocks |
| Agency Pipelines | Batched seeds with versioning | Reducing cycles via prompt tweaks only (e.g., Veo 3.1 Quality at 720 credits per video) | Versioning on hardware like GPU setups | Multi-stakeholder reviews |
| Brand Asset Libraries | Library of fixed seeds | Reusing for seasonal sets (e.g., Sora 2 at 54-63 credits per clip) | Fixed seeds with CFG scale 7-12 | Seasonal campaigns |
| Video Keyframing | Seed per frame sequence | Stabilizing 5s/10s clips (e.g., Kling 2.5 Turbo at 76-152 credits) | Frame-to-frame with negative prompts | Short promo clips |
| A/B Testing | Randomized seed pools | Generating variants in queues (e.g., Hailuo 02 at 12 credits) | Pools like 100-200 increments | Ad performance tests |

As the table shows, batched seeds help agencies run structured pipelines: consistent parameters across supported hardware shorten review cycles. Somewhat surprisingly, video keyframing still stabilizes short clips through model-specific controls, despite its temporal elements. In Cliprise environments, creators mix these patterns. For example, they seed Flux 2 for images, then extend a seeded result in Wan 2.5. Freelancers often start here because it gives them accessible multi-model workflows.

When Seed Values Don't Deliver Reproducibility

Model updates can change samplers and noise schedules. After the Veo 3 to 3.1 upgrade, old seeds no longer reproduced earlier outputs, and results diverged noticeably in tests. Creators regenerating past campaigns then face cascading mismatches.

High CFG scales (15+) can overpower the seed. Strong guidance, especially with detailed prompts, pulls every run toward the prompt and mutes the effect of the initial noise. In Nano Banana Pro, CFG 20 with a seed yields prompt-dominant results that vary little from seed to seed.
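The standard classifier-free guidance formulation helps explain this. Assume that a platform's "CFG scale" maps to the guidance weight $s$, which is common but not guaranteed, since implementations vary. Each denoising step then combines a prompt-conditioned noise prediction with an unconditioned one:

$$
\tilde{\epsilon}_\theta(x_t, c) = \epsilon_\theta(x_t, \varnothing) + s\,\bigl(\epsilon_\theta(x_t, c) - \epsilon_\theta(x_t, \varnothing)\bigr)
$$

Here $x_t$ is the noisy latent at step $t$ (the seed fixes the starting latent $x_T$), $c$ is the prompt, and $\varnothing$ is the empty prompt. Setting $s = 1$ reduces to the plain conditional prediction. As $s$ grows, the scaled prompt-difference term dominates each update. That fits the observation that very high CFG values leave less room for the seed to shape the result.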

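Across platforms, numeric precision differs (for example, FP16 versus FP32 inference), so Kling and Runway endpoints can return mismatched results. Queue systems add further variables, because jobs may land on different machines in a distributed pool.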
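Storing the same number at different precisions is enough to change it. This sketch uses Python's standard `struct` module, whose `'e'`, `'f'`, and `'d'` formats are IEEE half, single, and double precision. It round-trips 0.1 through each. It illustrates the mechanism only and does not claim any particular endpoint's precision.

```python
import struct

def round_trip(value: float, fmt: str) -> float:
    """Store value in the given binary float format and read it back."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]

x = 0.1
print(round_trip(x, "<d"))  # 0.1 (float64, Python's native precision)
print(round_trip(x, "<f"))  # 0.10000000149011612 (float32)
print(round_trip(x, "<e"))  # 0.0999755859375 (float16)
```

Each denoising step feeds its slightly different result into the next, so a gap this small at one step can compound over a full run.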

Skip seeding for one-off or novelty-focused work, since experimental artists benefit from randomness. Models without seed support (including some ElevenLabs models) ignore the parameter entirely, and queued jobs add timing noise.

The core limitation is scope. Seeds solve reproducibility within a single model and setup, but not fully across models. In Cliprise, switching between models helps, yet multi-model chains still need manual bridging.

Sequencing Seeds in Multi-Step Pipelines: Why Order Impacts Outcomes

Starting with video before images causes cascading mismatches. The Sora 2 seed sets the motion, but the image reference (e.g., from Flux) drifts, forcing a full regeneration. This is common in rushed workflows.

There is also mental overhead. Creators report that unseeded workflows require about three times as many context switches, because they must recall prompts and retest parameters.

Working image-first, you seed the stills (Imagen 4) and then extend them into video (Luma Modify), which takes fewer iterations overall. Video-first suits projects where motion is the primary concern.

Creator data favors the image-to-video order for brand work, because it keeps assets aligned.

In Cliprise pipelines, that means applying Veo seeds after the image stage.

Industry Patterns and Emerging Directions in Seeded AI Workflows

Aggregated forum discussions suggest that more brand teams have adopted seeds recently than in the past, driven by campaign scale.

Notable shifts include version-control integration (Git-style tracking of seeds) and Discord communities dedicated to sharing seeds.

Looking ahead, standardized seed protocols may emerge, along with more hardware-agnostic reproducibility through cloud execution.

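To prepare, test seeds in multi-model tools like Cliprise. Consider aspect ratio alongside seeds: changing the aspect ratio changes the shape of the noise latent, so the same seed produces different starting noise. Accounting for this makes outputs more predictable across formats.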

Advanced Techniques: Maximizing Seed Utility for Brands

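Batch seed ranges (e.g., seeds 100-150) to produce 50+ variants, and combine them with negative prompts and fixed aspect ratios. Carry seeds through upscalers such as Topaz where they are supported. Teams report high reuse of seeds organized this way. When you need to refine generated images, maintaining seed consistency prevents visual drift.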

Measuring Success: Metrics for Seeded Generation Pipelines

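Track three metrics: reproduction accuracy (how often a rerun matches the reference), iterations per approved asset, and ROI. Diff tools and output hashes make the first two measurable.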
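The first two metrics are straightforward to compute. This is a minimal sketch: the function names are hypothetical, and byte strings stand in for real image files. Exact hashes are strict, because embedded metadata such as timestamps can break byte equality even when the pixels match. Pair this check with a pixel-diff tolerance when that matters.

```python
import hashlib

def output_hash(data: bytes) -> str:
    """Fingerprint an output file's bytes so reruns can be compared cheaply."""
    return hashlib.sha256(data).hexdigest()

def reproduction_rate(reference: bytes, reruns: list[bytes]) -> float:
    """Fraction of reruns that are byte-identical to the reference output."""
    if not reruns:
        return 0.0
    ref = output_hash(reference)
    return sum(output_hash(r) == ref for r in reruns) / len(reruns)

def iterations_per_asset(total_generations: int, approved_assets: int) -> float:
    """Average generations spent per approved deliverable (lower is better)."""
    if approved_assets <= 0:
        raise ValueError("need at least one approved asset")
    return total_generations / approved_assets

# Byte strings stand in for real image files.
print(reproduction_rate(b"img-v1", [b"img-v1", b"img-v1", b"img-v2", b"img-v1"]))  # 0.75
print(iterations_per_asset(total_generations=60, approved_assets=20))  # 3.0
```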

Conclusion: Strategic Integration of Seeds for Sustainable Brand Creativity

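Seeds add a layer of reliability on top of prompts and parameters, and teams report improvements as they scale seeded workflows. Experiment in setups like Cliprise, and the gains should follow.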